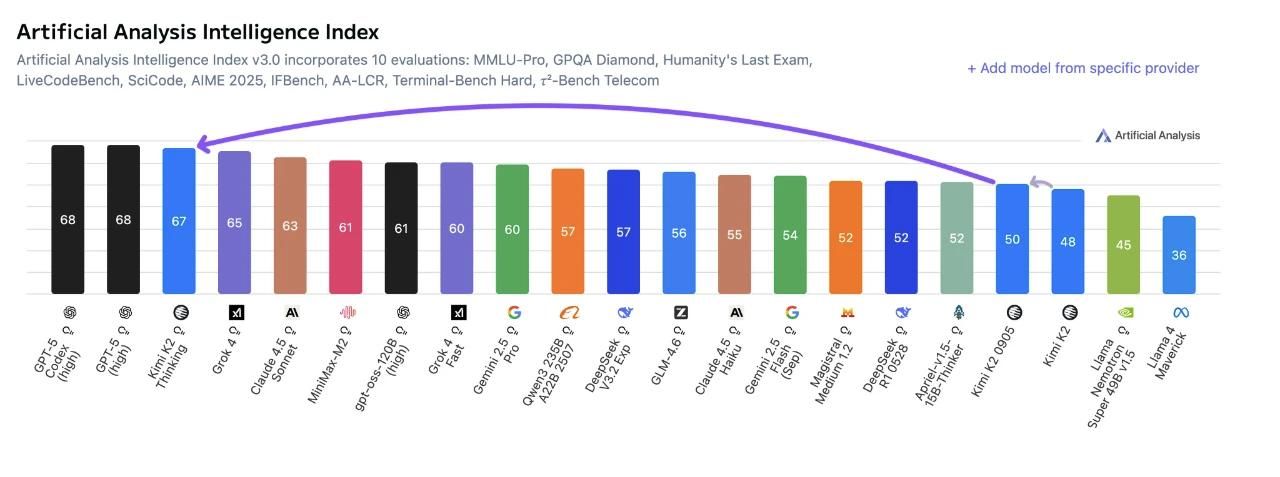

A new report from leading AI analytics firm Artificial Analysis finds that Kimi K2 Thinking has achieved the second-highest overall ranking, and the top spot among open-source models, in its latest evaluation of intelligent and agentic AI systems.

Strong Agentic and Reasoning Capabilities

Kimi K2 Thinking scored 67 points on the AI Intelligence Index, outperforming all other open-source models, including MiniMax-M2 (61) and DeepSeek-V3.2-Exp (57). It trails only GPT-5, underscoring its strong reasoning and problem-solving capabilities.

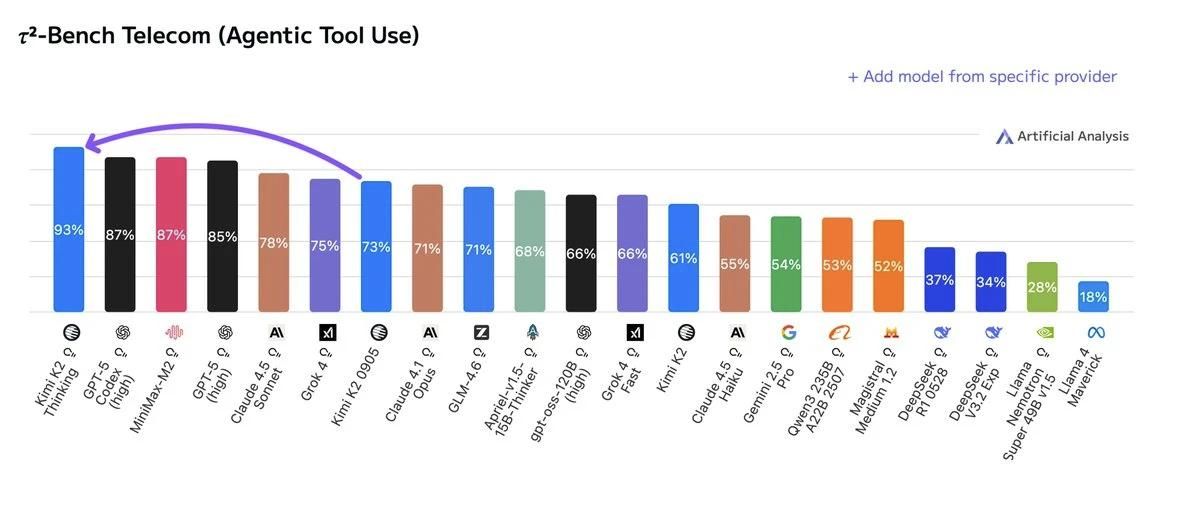

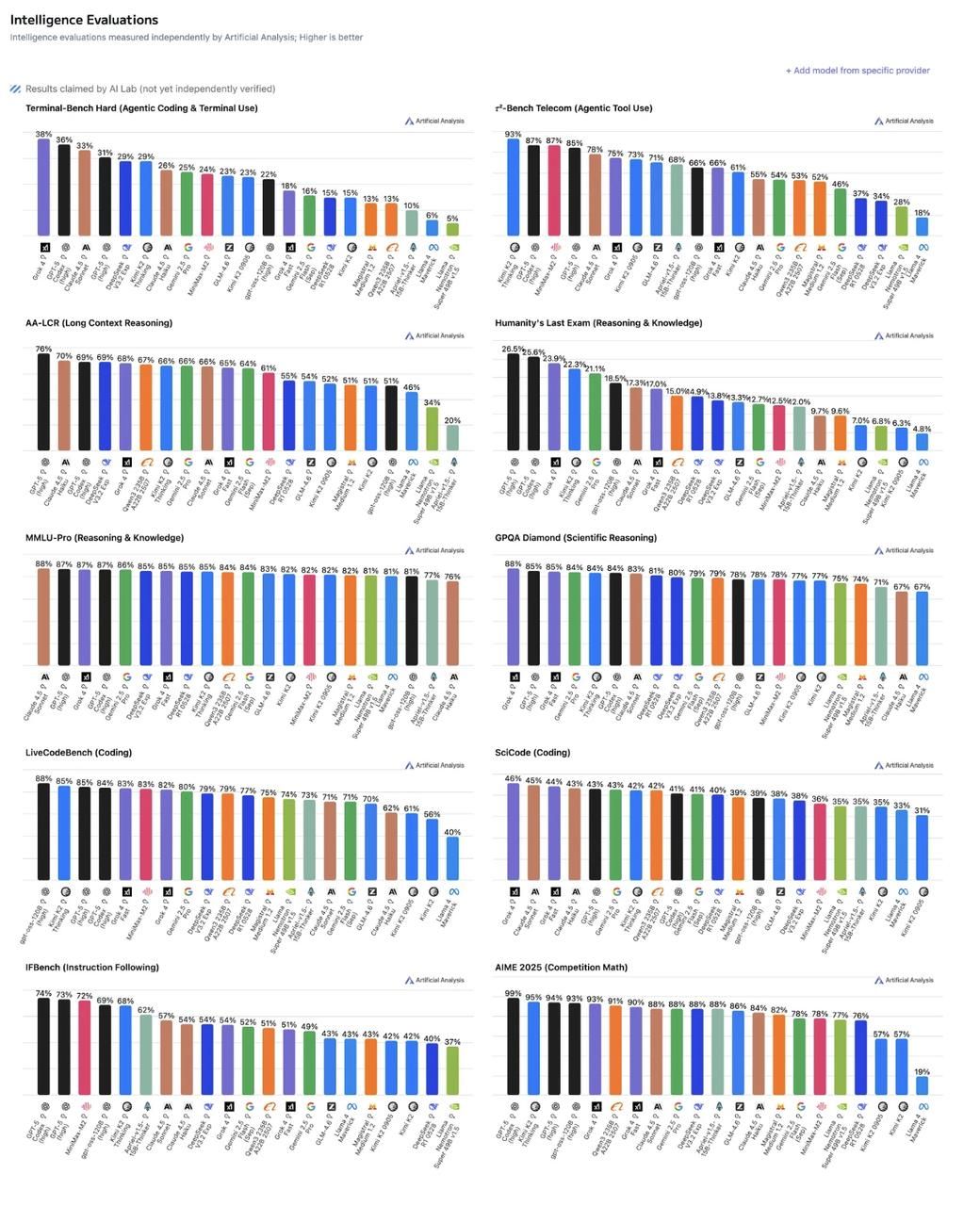

On the Agentic Benchmark, which measures performance in AI tool use and autonomy, Kimi K2 Thinking ranked second only to GPT-5, earning 93% on the 𝜏²-Bench Telecom test, the highest independent score ever recorded by the firm.

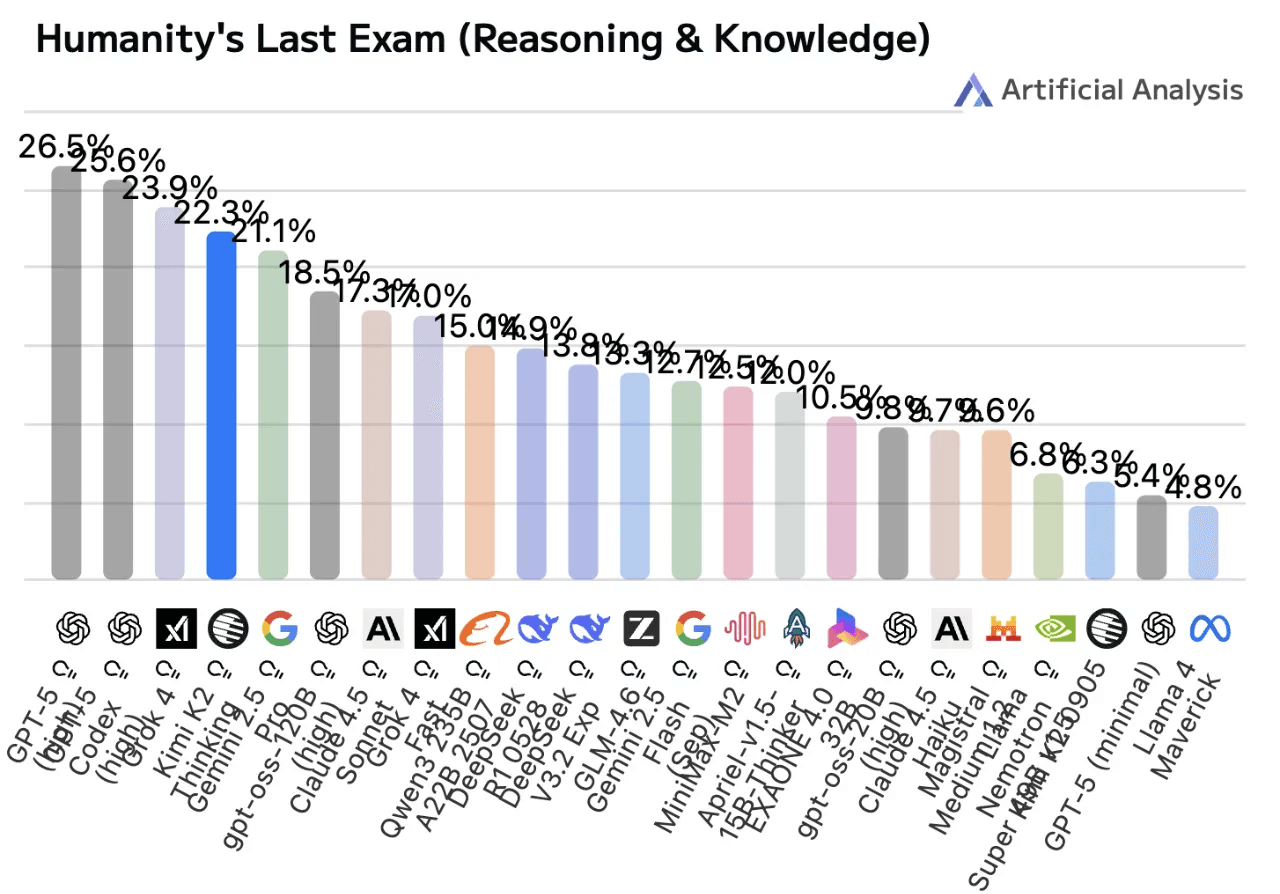

On Humanity's Last Exam, a challenging test of reasoning without tools, Kimi K2 Thinking reached 22.3%, setting a new record for open-source models and ranking just behind GPT-5 and Grok 4.

New Leader in Open-Source Code Models

While not the top performer in every coding benchmark, Kimi K2 Thinking consistently placed among the highest, ranking 6th on Terminal-Bench Hard, 7th on SciCode, and 2nd on LiveCodeBench.

These results made it the new open-source leader in Artificial Analysis's Code Index, overtaking DeepSeek V3.2.

Technical Specs: 1 Trillion Parameters, INT4 Precision

Kimi K2 Thinking has 1 trillion total parameters and 32 billion active parameters (~594GB), supporting a 256K context window with text-only input.

It is a reasoning variant of Kimi K2 Instruct, maintaining the same architecture but using native INT4 precision instead of FP8.

This quantization, achieved through quantization-aware training (QAT), reduces model size by nearly half, significantly improving efficiency.
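As a rough sanity check on these figures, the Python sketch below estimates raw weight storage from a parameter count and a bit width. It assumes every parameter is stored at the same precision and ignores quantization scales and any layers kept at higher precision, which is one plausible (unconfirmed) reason the reported ~594GB is larger than the idealized INT4 number. The helper name `weight_size_gb` is illustrative and does not come from the report.

```python
def weight_size_gb(num_params: float, bits_per_param: float) -> float:
    """Idealized weight storage in decimal gigabytes (1 GB = 1e9 bytes)."""
    bytes_total = num_params * bits_per_param / 8
    return bytes_total / 1e9


TOTAL_PARAMS = 1e12  # 1 trillion total parameters, as stated in the article

int4_gb = weight_size_gb(TOTAL_PARAMS, 4)  # uniform 4-bit weights (assumption)
fp8_gb = weight_size_gb(TOTAL_PARAMS, 8)   # uniform 8-bit weights (assumption)

print(f"INT4: {int4_gb:.0f} GB")              # INT4: 500 GB
print(f"FP8:  {fp8_gb:.0f} GB")               # FP8:  1000 GB
print(f"Ratio: {int4_gb / fp8_gb:.2f}")       # Ratio: 0.50
```

Under these simplifying assumptions, halving the bit width halves the storage, which is consistent with the "nearly half" reduction described above.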

Tradeoffs: High Verbosity, Cost, and Latency

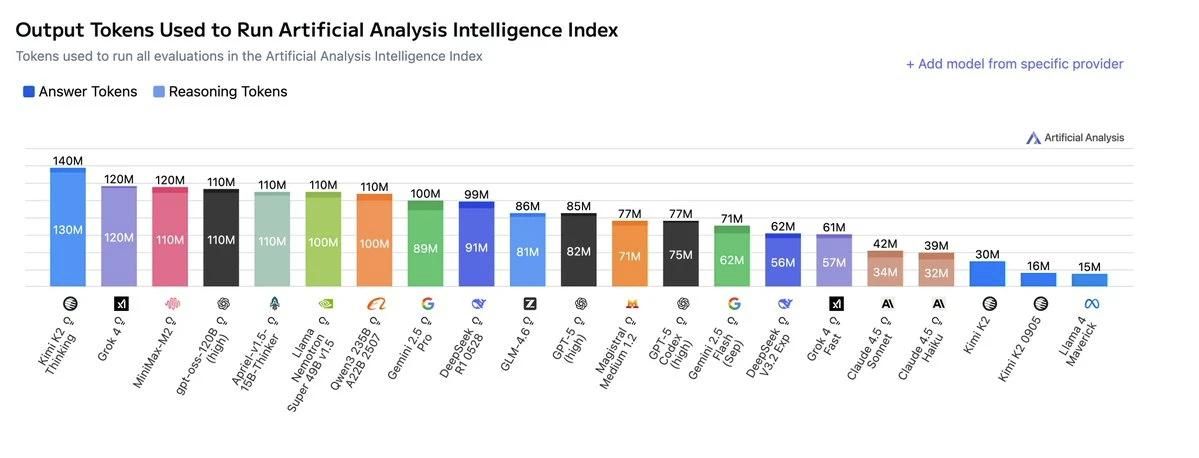

Kimi K2 Thinking was noted for being extremely “talkative,” producing 140 million tokens during testing, 2.5× as many as DeepSeek V3.2 and 2× as many as GPT-5.

While this verbosity raises inference cost and latency, the model still offers competitive pricing:

- Base API: $2.5 per million tokens (output), total cost of $356 to run the evaluation

- Turbo API: $8 per million tokens (output), total cost of $1,172, second only to Grok 4 in expense

Processing speeds range from 8 tokens/sec (Base) to 50 tokens/sec (Turbo).
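To see how token volume drives these numbers, here is a minimal Python sketch that multiplies the reported 140 million tokens by each output price and converts throughput into generation time. It assumes all 140 million tokens are billed as output tokens; the reported totals ($356 and $1,172) are slightly higher, presumably because they also include input tokens. The 10,000-token response length is a hypothetical value chosen only for illustration.

```python
def output_cost_usd(output_tokens: float, price_per_million: float) -> float:
    """Cost of generated tokens at a flat per-million-token output price."""
    return output_tokens / 1e6 * price_per_million


def generation_seconds(output_tokens: float, tokens_per_sec: float) -> float:
    """Time to stream a response at a constant decoding speed."""
    return output_tokens / tokens_per_sec


EVAL_TOKENS = 140e6  # tokens produced during testing, per the article

base_cost = output_cost_usd(EVAL_TOKENS, 2.5)   # Base API price
turbo_cost = output_cost_usd(EVAL_TOKENS, 8.0)  # Turbo API price

RESPONSE_TOKENS = 10_000  # hypothetical long reasoning response
base_time = generation_seconds(RESPONSE_TOKENS, 8)    # Base speed
turbo_time = generation_seconds(RESPONSE_TOKENS, 50)  # Turbo speed

print(f"Base output cost:  ${base_cost:,.0f}")    # Base output cost:  $350
print(f"Turbo output cost: ${turbo_cost:,.0f}")   # Turbo output cost: $1,120
print(f"Base time:  {base_time:.0f} s")           # Base time:  1250 s
print(f"Turbo time: {turbo_time:.0f} s")          # Turbo time: 200 s
```

The estimates land within a few percent of the reported totals, which suggests that output tokens account for most of the evaluation cost.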

The report concludes that post-training techniques like reinforcement learning (RL) continue to drive significant performance gains in reasoning and long-horizon tool-use tasks.
