Can a 3B mannequin ship 30B class reasoning by fixing the coaching recipe as a substitute of scaling parameters? Nanbeige LLM Lab at Boss Zhipin has launched Nanbeige4-3B, a 3B parameter small language mannequin household educated with an unusually heavy emphasis on knowledge high quality, curriculum scheduling, distillation, and reinforcement studying.

The analysis workforce ships 2 major checkpoints, Nanbeige4-3B-Base and Nanbeige4-3B-Pondering, and evaluates the reasoning tuned mannequin in opposition to Qwen3 checkpoints from 4B as much as 32B parameters.

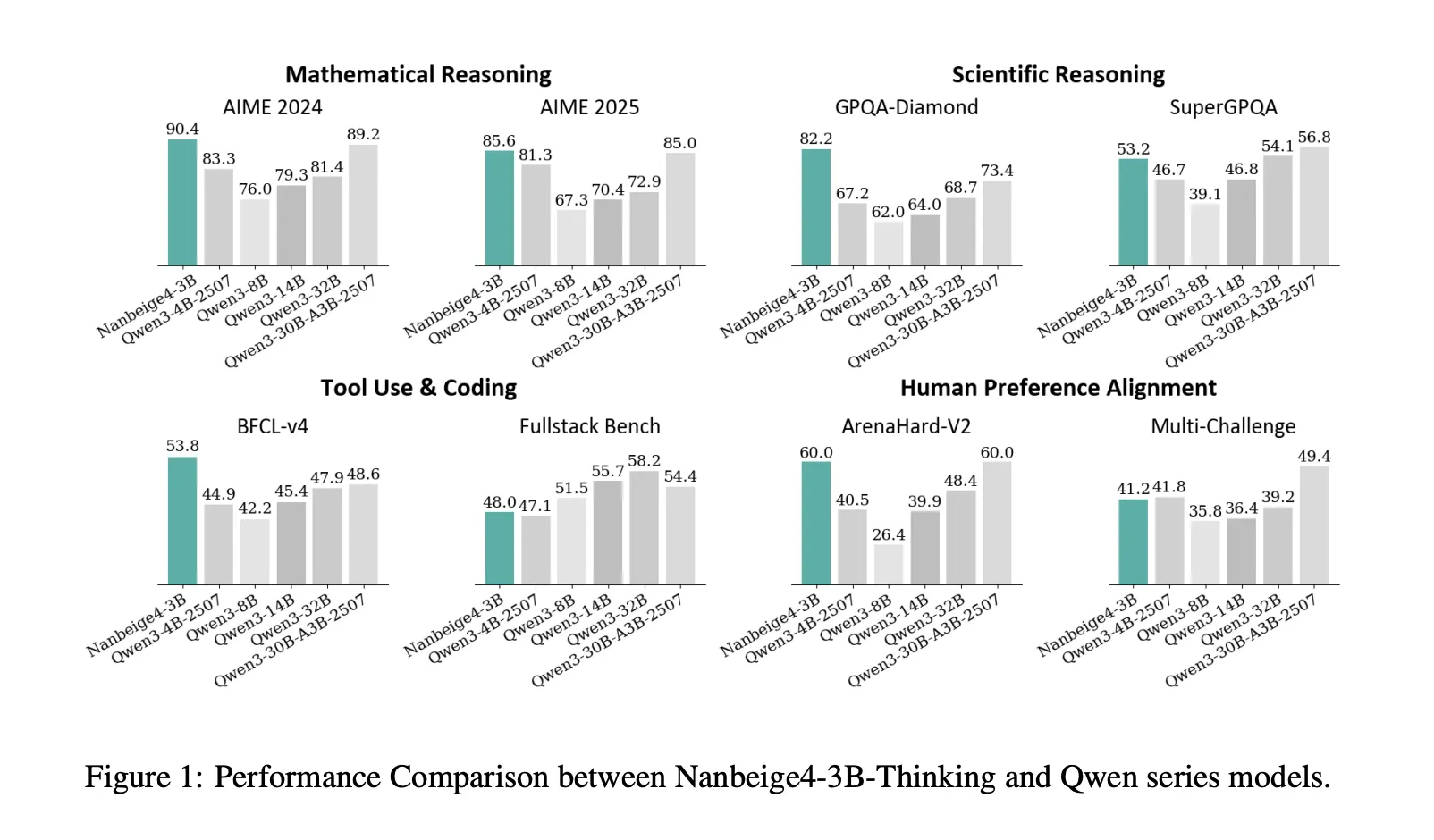

Benchmark outcomes

On AIME 2024, Nanbeige4-3B-2511 studies 90.4, whereas Qwen3-32B-2504 studies 81.4. On GPQA-Diamond, Nanbeige4-3B-2511 studies 82.2, whereas Qwen3-14B-2504 studies 64.0 and Qwen3-32B-2504 studies 68.7. These are the two benchmarks the place the analysis’s “3B beats 10× bigger” framing is straight supported.

The analysis workforce additionally showcase robust software use positive aspects on BFCL-V4, Nanbeige4-3B studies 53.8 versus 47.9 for Qwen3-32B and 48.6 for Qwen3-30B-A3B. On Area-Exhausting V2, Nanbeige4-3B studies 60.0, matching the very best rating listed in that comparability desk contained in the analysis paper. On the identical time, the mannequin is just not greatest throughout each class, on Fullstack-Bench it studies 48.0, beneath Qwen3-14B at 55.7 and Qwen3-32B at 58.2, and on SuperGPQA it studies 53.2, barely beneath Qwen3-32B at 54.1.

The coaching recipe, the components that transfer a 3B mannequin

Hybrid Information Filtering, then resampling at scale

For pretraining, the analysis workforce mix multi dimensional tagging with similarity based mostly scoring. They cut back their labeling house to twenty dimensions and report 2 key findings, content material associated labels are extra predictive than format labels, and a superb grained 0 to 9 scoring scheme outperforms binary labeling. For similarity based mostly scoring, they construct a retrieval database with lots of of billions of entries supporting hybrid textual content and vector retrieval.

They filter to 12.5T tokens of top of the range knowledge, then choose a 6.5T greater high quality subset and upsample it for two or extra epochs, producing a last 23T token coaching corpus. That is the primary place the place the report diverges from typical small mannequin coaching, the pipeline isn’t just “clear knowledge”, it’s scored, retrieved, and resampled with specific utility assumptions.

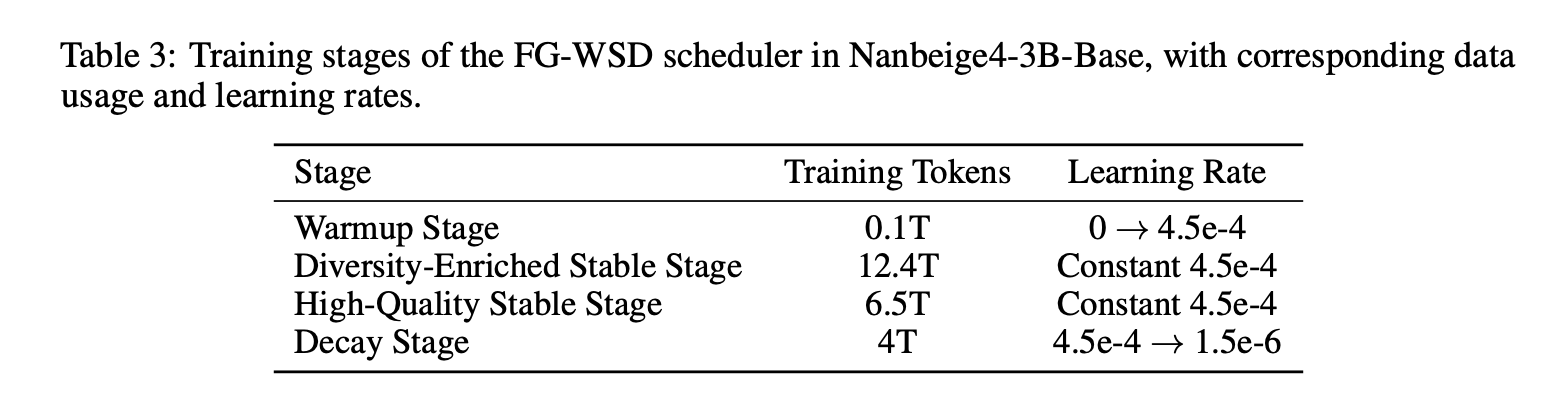

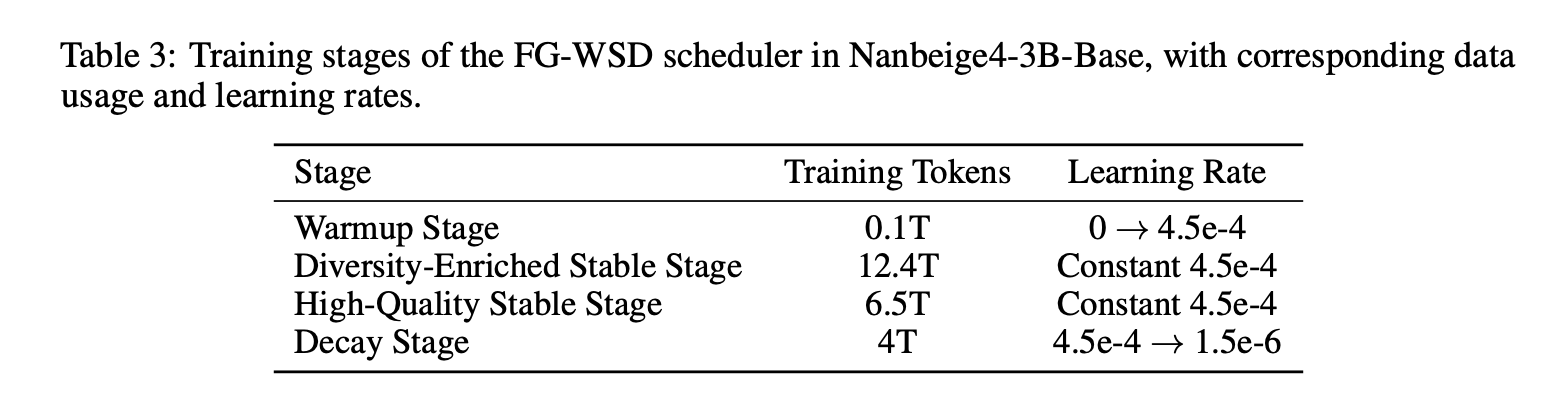

FG-WSD, a knowledge utility scheduler as a substitute of uniform sampling

Most comparable analysis tasks deal with warmup steady decay as a studying price schedule solely. Nanbeige4-3B provides a knowledge curriculum contained in the steady section through FG-WSD, Tremendous-Grained Warmup-Steady-Decay. As a substitute of sampling a set combination all through steady coaching, they progressively focus greater high quality knowledge later in coaching.

In a 1B ablation educated on 1T tokens, the above Desk reveals GSM8K enhancing from 27.1 underneath vanilla WSD to 34.3 underneath FG-WSD, with positive aspects throughout CMATH, BBH, MMLU, CMMLU, and MMLU-Professional. Within the full 3B run, the analysis workforce splits coaching into Warmup, Variety-Enriched Steady, Excessive-High quality Steady, and Decay, and makes use of ABF within the decay stage to increase context size to 64K.

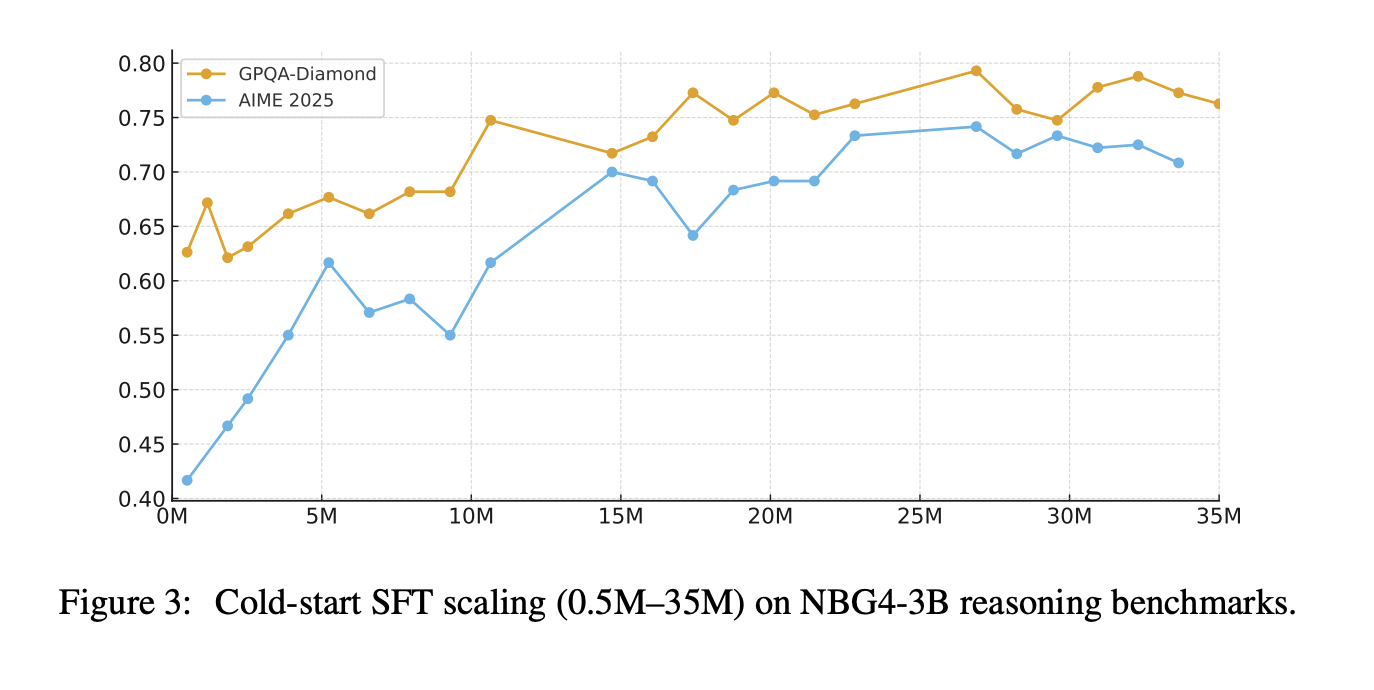

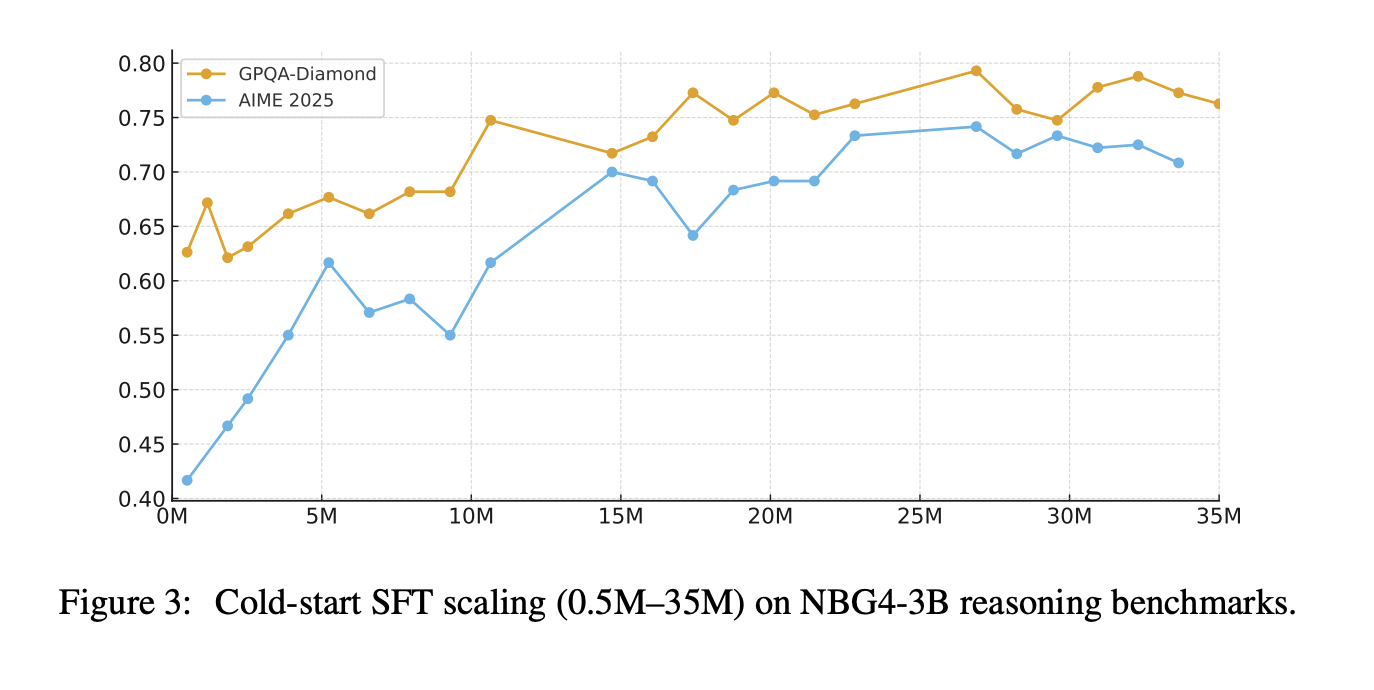

Multi-stage SFT, then repair the supervision traces

Put up coaching begins with chilly begin SFT, then general SFT. The chilly begin stage makes use of about 30M QA samples targeted on math, science, and code, with 32K context size, and a reported mixture of about 50% math reasoning, 30% scientific reasoning, and 20% code duties. The analysis workforce additionally declare that scaling chilly begin SFT directions from 0.5M to 35M retains enhancing AIME 2025 and GPQA-Diamond, with no early saturation of their experiments.

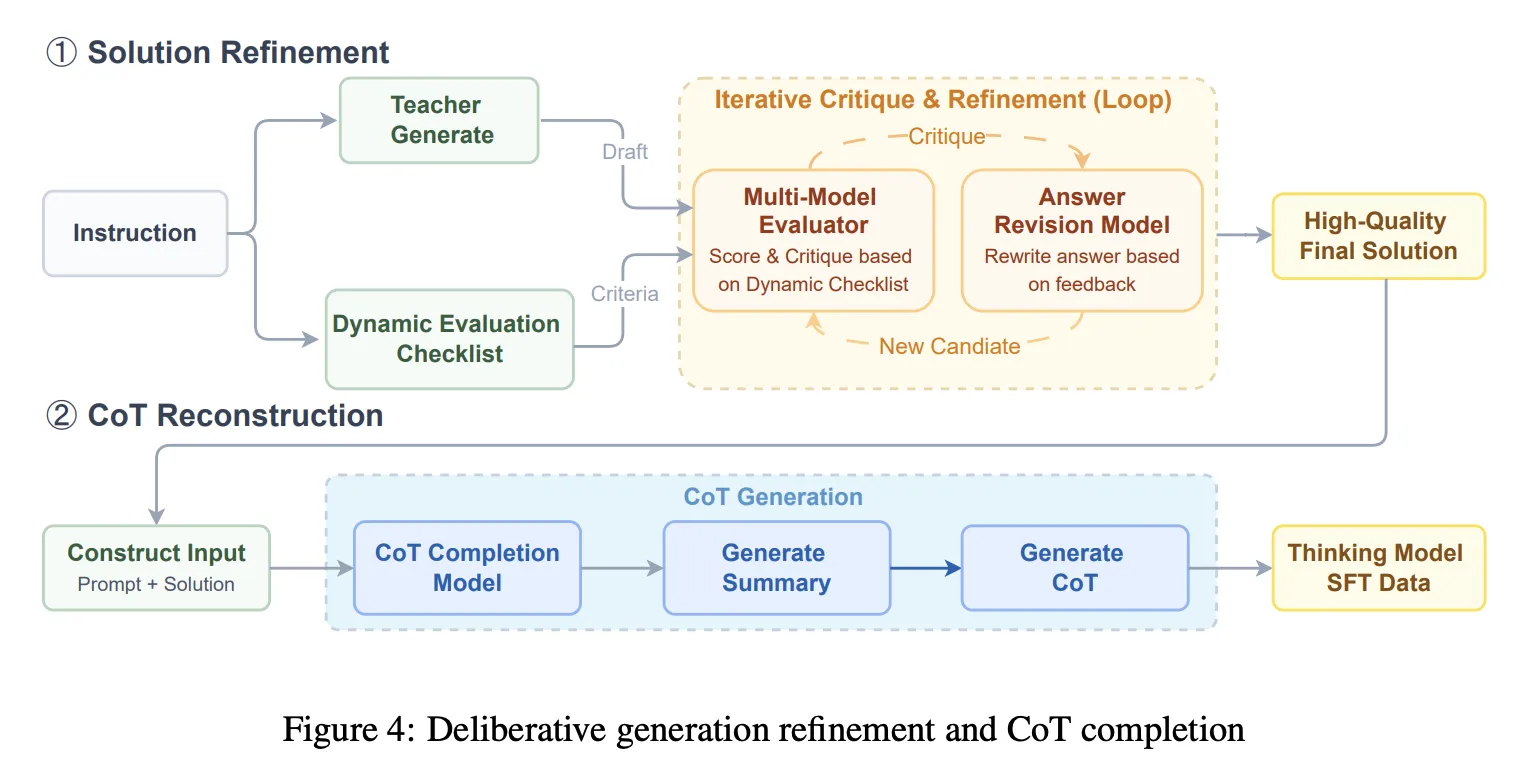

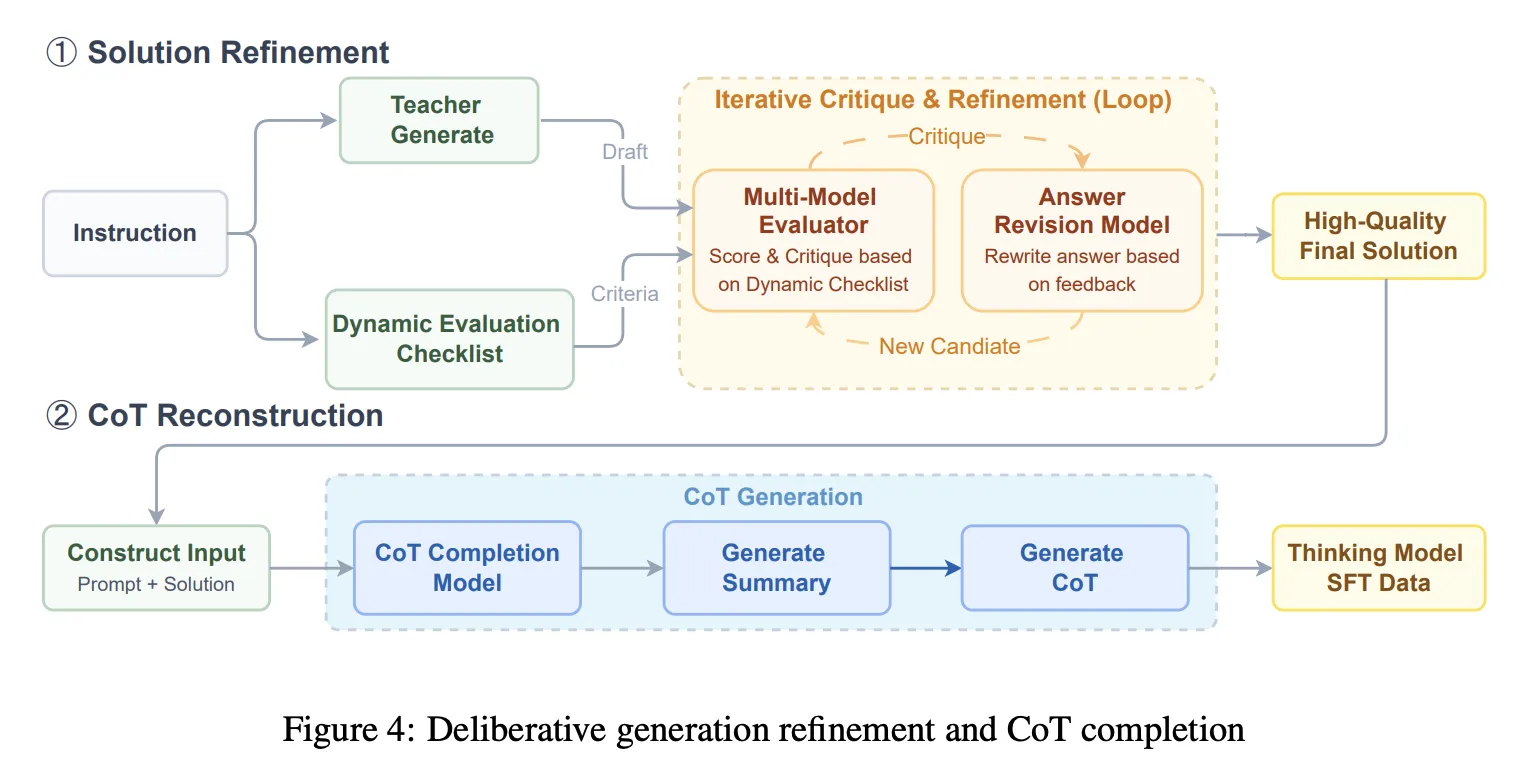

General SFT shifts to a 64K context size combine together with basic dialog and writing, agent type software use and planning, tougher reasoning that targets weaknesses, and coding duties. This stage introduces Answer refinement plus Chain-of-Thought reconstruction. The system runs iterative generate, critique, revise cycles guided by a dynamic guidelines, then makes use of a series completion mannequin to reconstruct a coherent CoT that’s per the ultimate refined resolution. That is meant to keep away from coaching on damaged reasoning traces after heavy modifying.

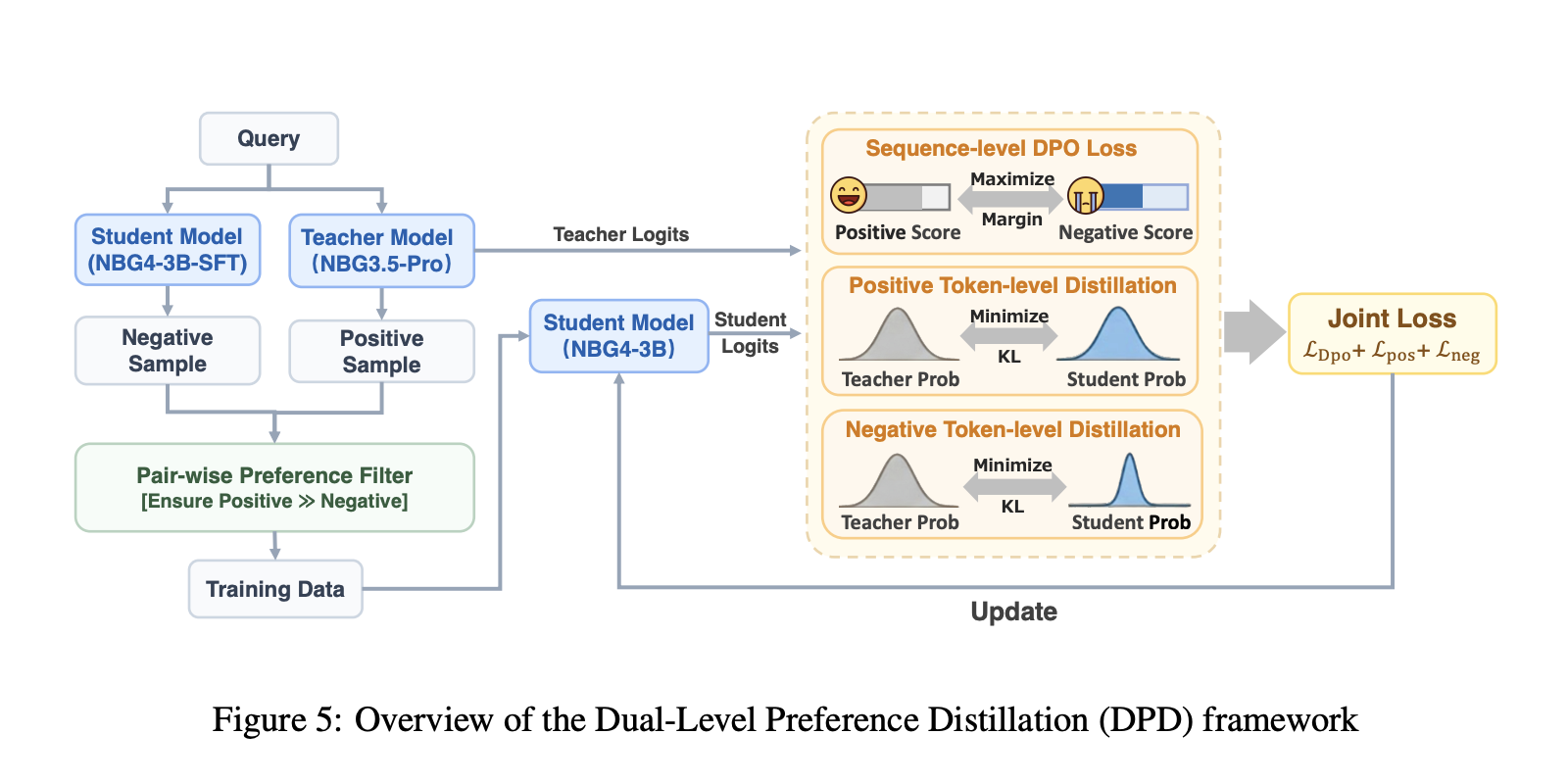

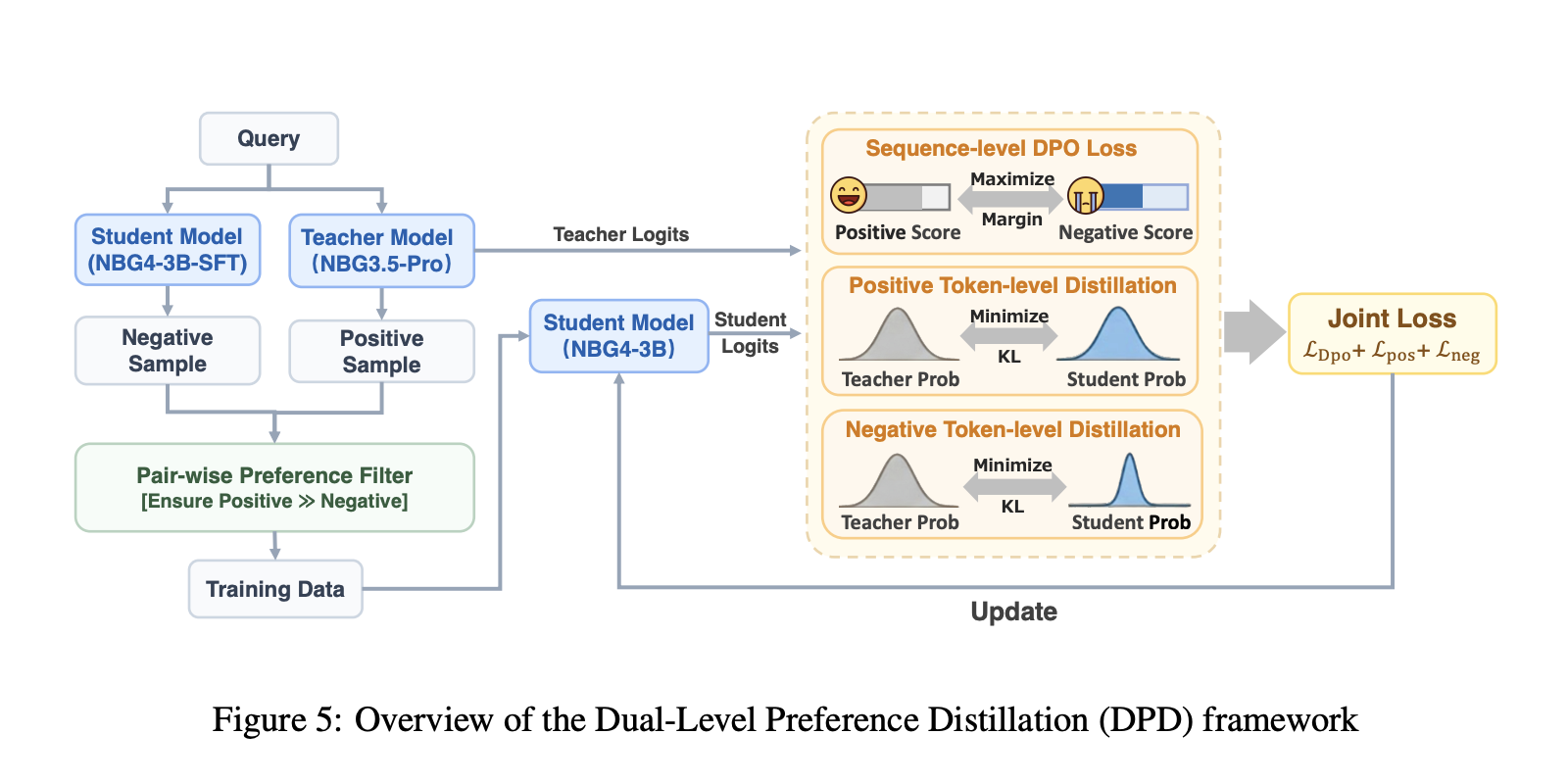

DPD distillation, then multi stage RL with verifiers

Distillation makes use of Twin-Stage Desire Distillation, DPD. The scholar learns token degree distributions from the trainer mannequin, whereas a sequence degree DPO goal maximizes the margin between optimistic and unfavorable responses. Positives come from sampling the trainer Nanbeige3.5-Professional, negatives are sampled from the 3B pupil, and distillation is utilized on each pattern varieties to scale back assured errors and enhance options.

Reinforcement studying is staged by area, and every stage makes use of on coverage GRPO. The analysis workforce describes on coverage knowledge filtering utilizing avg@16 go price and retaining samples strictly between 10% and 90% to keep away from trivial or inconceivable objects. STEM RL makes use of an agentic verifier that calls a Python interpreter to examine equivalence past string matching. Coding RL makes use of artificial check features, validated through sandbox execution, and makes use of go fail rewards from these exams. Human desire alignment RL makes use of a pairwise reward mannequin designed to provide preferences in a number of tokens and cut back reward hacking threat in comparison with basic language mannequin rewarders.

Comparability Desk

| Benchmark, metric | Qwen3-14B-2504 | Qwen3-32B-2504 | Nanbeige4-3B-2511 |

|---|---|---|---|

| AIME2024, avg@8 | 79.3 | 81.4 | 90.4 |

| AIME2025, avg@8 | 70.4 | 72.9 | 85.6 |

| GPQA-Diamond, avg@3 | 64.0 | 68.7 | 82.2 |

| SuperGPQA, avg@3 | 46.8 | 54.1 | 53.2 |

| BFCL-V4, avg@3 | 45.4 | 47.9 | 53.8 |

| Fullstack Bench, avg@3 | 55.7 | 58.2 | 48.0 |

| ArenaHard-V2, avg@3 | 39.9 | 48.4 | 60.0 |

Key Takeaways

- 3B can lead a lot bigger open fashions on reasoning, underneath the paper’s averaged sampling setup. Nanbeige4-3B-Pondering studies AIME 2024 avg@8 90.4 vs Qwen3-32B 81.4, and GPQA-Diamond avg@3 82.2 vs Qwen3-14B 64.0.

- The analysis workforce is cautious about analysis, these are avg@okay outcomes with particular decoding, not single shot accuracy. AIME is avg@8, most others are avg@3, with temperature 0.6, high p 0.95, and lengthy max technology.

- Pretraining positive aspects are tied to knowledge curriculum, not simply extra tokens. Tremendous-Grained WSD schedules greater high quality mixtures later, and the 1B ablation reveals GSM8K shifting from 27.1 to 34.3 versus vanilla scheduling.

- Put up-training focuses on supervision high quality, then desire conscious distillation. The pipeline makes use of deliberative resolution refinement plus chain-of-thought reconstruction, then Twin Desire Distillation that mixes token distribution matching with sequence degree desire optimization.

Try the Paper and Mannequin Weights. Be happy to take a look at our GitHub Web page for Tutorials, Codes and Notebooks. Additionally, be happy to comply with us on Twitter and don’t neglect to affix our 100k+ ML SubReddit and Subscribe to our E-newsletter. Wait! are you on telegram? now you’ll be able to be a part of us on telegram as properly.

Michal Sutter is a knowledge science skilled with a Grasp of Science in Information Science from the College of Padova. With a strong basis in statistical evaluation, machine studying, and knowledge engineering, Michal excels at remodeling advanced datasets into actionable insights.

Elevate your perspective with NextTech Information, the place innovation meets perception.

Uncover the most recent breakthroughs, get unique updates, and join with a worldwide community of future-focused thinkers.

Unlock tomorrow’s traits at this time: learn extra, subscribe to our publication, and develop into a part of the NextTech neighborhood at NextTech-news.com