Held every year in early January, the CES show in Las Vegas has always been a useful barometer of where technology is headed. This year’s message was unusually clear: AI is the new tech infrastructure, at the forefront of industry and business.

The urgent message for enterprises is that digitalisation is now about AI transformation. The time for AI experiments and pilots is over.

Across the keynote speeches and presentations at CES, which ended last week, it was clear that AI is in everything and is now the new compute platform, not a technical footnote. It now shapes cost structures, vendor dependence, security posture and even organisational skills.

Semiconductors are driving this shift. The battle for AI is no longer about faster chips. It is about who defines how AI is built, deployed and paid for, which marks an inflection point for the industry and the business world.

Leading the way at CES were the world’s two leading semiconductor companies, Nvidia and AMD. On the surface, their new products and solutions suggest that AI compute is fragmenting, not consolidating.

A closer look reveals that they are addressing different workloads, which demand different architectures. Training giant models, running inference at the edge, powering robots and optimising enterprise workflows each come with distinct requirements.

Their distinct visions of the AI era will push enterprises to think harder about the trade-offs they need to make. What is clear is that AI strategy and silicon strategy are now inseparable.

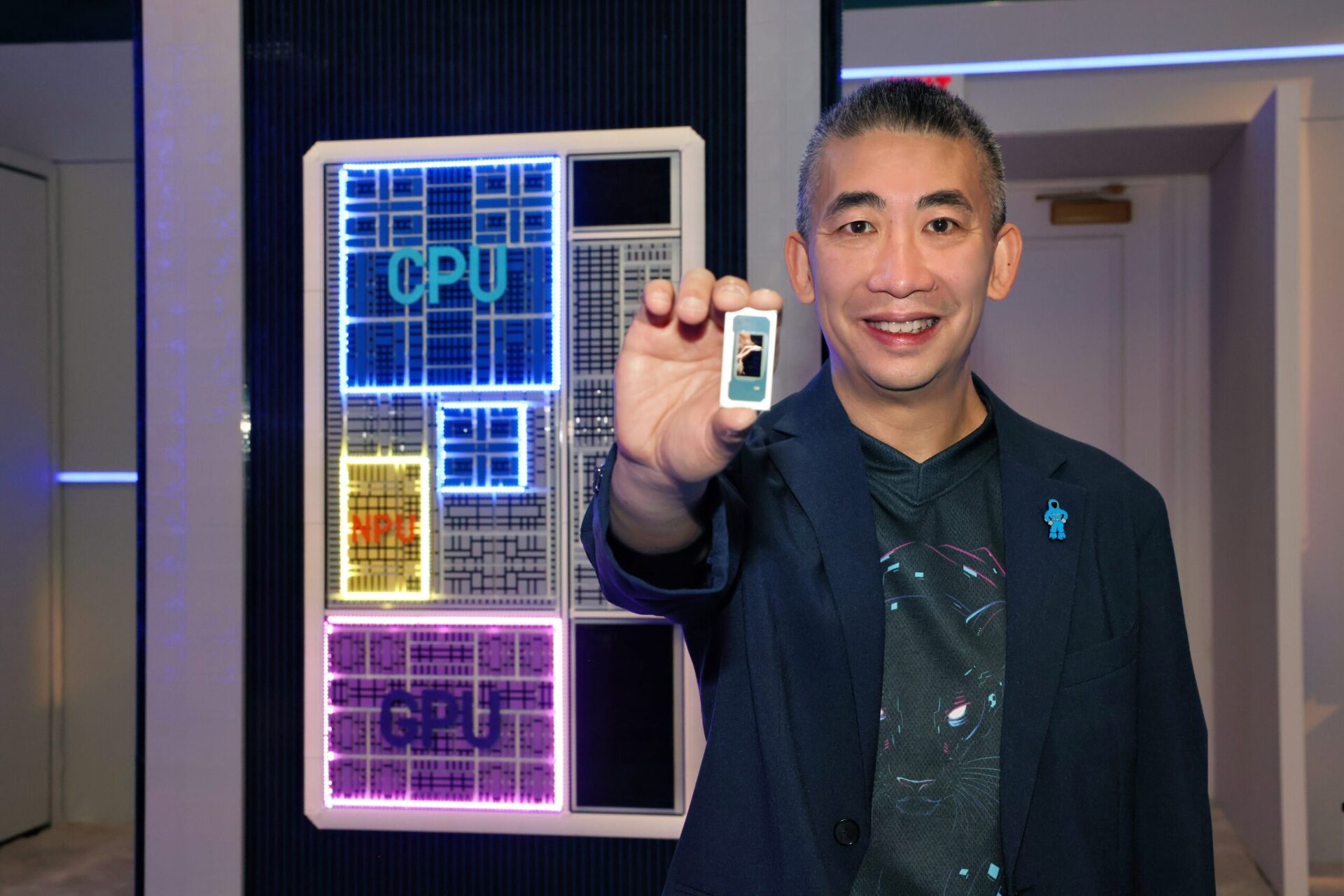

AMD: AI everywhere in a heterogeneous environment

AMD chair and chief executive Lisa Su delivered CES’ first official keynote, outlining the company’s vision for an AI-powered future that reaches far beyond data centres and research labs.

Calling AI the most important technology of the last 50 years, she said: “It’s already touching every major industry, whether you’re going to talk about health care or science or manufacturing or commerce.”

“We’re just scratching the surface. AI is going to be everywhere over the next few years,” she added. “And most importantly, AI is for everyone.”

AMD’s biggest pitch is integration, that is, tightly combining CPUs, GPUs, networking and software to scale AI infrastructure efficiently, Su said.

AMD’s latest Helios rack platform is designed to bring together high-performance computing, advanced accelerators and networking in a single, scalable platform capable of supporting the next generation of AI models.

It is an open, modular, double-wide rack design that weighs nearly 3,120kg, more than the weight of two cars.

Su also unveiled the Ryzen AI Embedded chips, a new platform for AI-driven applications at the edge, in areas such as automotive digital cockpits, smart healthcare and humanoid robotics.

She was joined on stage by Gene.01, a sleek-looking humanoid robot from Italian firm Generative Bionics. The robot is equipped with touch sensors, including sensor-embedded shoes that provide tactile feedback.

Su called it “super cool”, highlighting how the same sensor technology can also be adapted for human patients in medical and rehabilitation settings.

The AMD CEO also unveiled a series of chips for the PC, desktop and gaming sectors. The new Ryzen AI 400 Series is aimed at premium thin-and-light notebooks and small form-factor desktops, while the Pro 400 Series is aimed at AI acceleration and modern security.

These chips broaden AMD’s client computing portfolio, bringing expanded AI capabilities, premium gaming performance and commercial-ready features to more systems.

Raising the bar for gaming is the new Ryzen 9850X3D, AMD’s newest and fastest gaming processor. According to AMD, it delivers up to 27 per cent better gaming performance than the Intel Core Ultra 9 285K. The new chip has eight high-performance cores and 16 threads, delivering ultra-low latency.

These new products highlight an important message: AMD is betting that many organisations will not want to put all their AI eggs in one basket. As AI costs rise and workloads diversify, enterprises want room to optimise by workload, by budget and by use case.

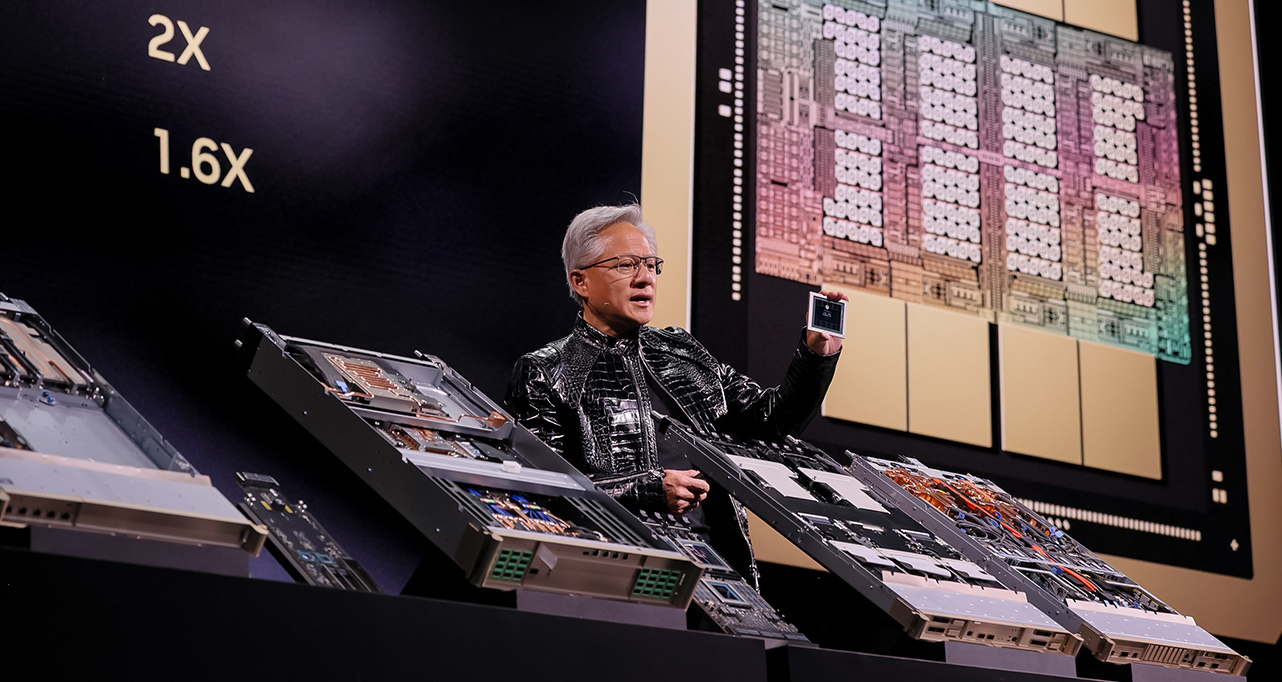

Nvidia’s new Rubin platform

At CES 2026, rival Nvidia’s chief executive Jensen Huang declared that AI is scaling into every domain and every device.

“Computing has been fundamentally reshaped as a result of accelerated computing, as a result of artificial intelligence,” he said. “What that means is some US$10 trillion or so of the last decade of computing is now being modernised to this new way of doing computing.”

Two important products he unveiled were the Rubin architecture, which will replace the Blackwell architecture later this year, and Alpamayo, an open family of reasoning models for autonomous vehicle development.

The six-chip Rubin platform addresses growing bottlenecks in model training, storage and interconnect. It also includes a new Vera CPU designed for agentic reasoning.

Now in production, the Rubin architecture is a significant advance in speed and power efficiency. According to Nvidia, it will run three and a half times faster than the previous Blackwell architecture on model-training tasks and five times faster on inference tasks. The new platform will also deliver eight times more inference compute per watt.

Rubin’s promise is fewer integration headaches, faster deployment and predictable performance at scale. For companies racing to train large models or run AI-heavy workloads, that is a compelling proposition.

The new products will enable these companies to train trillion-parameter models efficiently at a time when compute power is in high demand. They also extend the technology stack Nvidia has been building.

Intel: AI at the edge

Last but not least, Intel, the storied semiconductor company, unveiled Core Ultra Series 3, its new AI chip for laptops. Codenamed Panther Lake, it uses Intel’s latest, cutting-edge 18A manufacturing process for improved performance, density and power delivery. Intel is ramping up production, and orders are open.

With this new chip, Intel aims to build AI acceleration into everyday computing. The new chips will power everything from business PCs and edge servers to industrial systems and telecom infrastructure.

Intel said the chip would deliver 60 per cent better performance than the prior generation. Analysts said Intel wants the new chip to bolster its core PC business by improving the non-AI qualities buyers look for, such as battery life, and by boosting performance for AI tasks such as coding.
