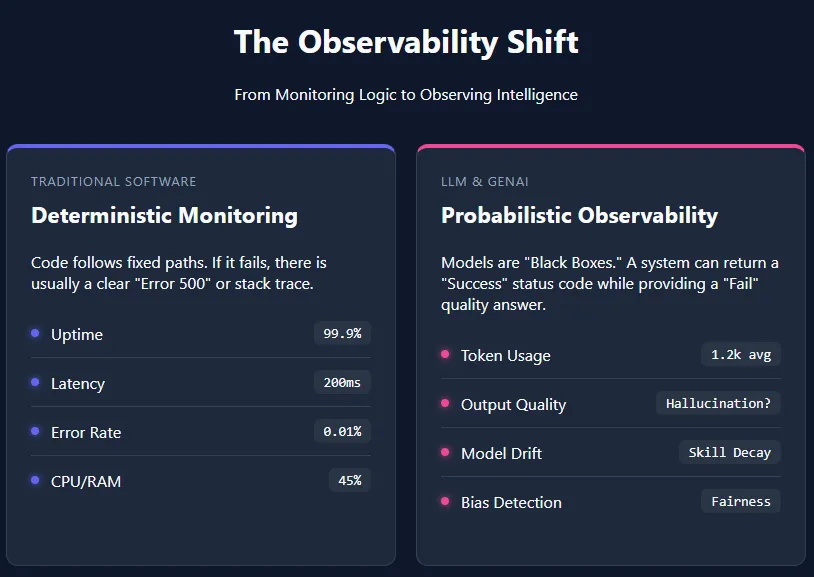

Artificial intelligence (AI) observability refers to the ability to understand, monitor, and evaluate AI systems by tracking their unique metrics, such as token usage, response quality, latency, and model drift. Unlike traditional software, large language models (LLMs) and other generative AI applications are probabilistic in nature. They don’t follow fixed, transparent execution paths, which makes their decision-making difficult to trace and reason about. This “black box” behavior creates challenges for trust, especially in high-stakes or production-critical environments.

AI systems are no longer experimental demos; they’re production software. And like any production system, they need observability. Traditional software engineering has long relied on logging, metrics, and distributed tracing to understand system behavior at scale. As LLM-powered applications move into real user workflows, the same discipline is becoming essential. To operate these systems reliably, teams need visibility into what happens at each step of the AI pipeline, from inputs and model responses to downstream actions and failures.

Let us now understand the different layers of AI observability with the help of an example.

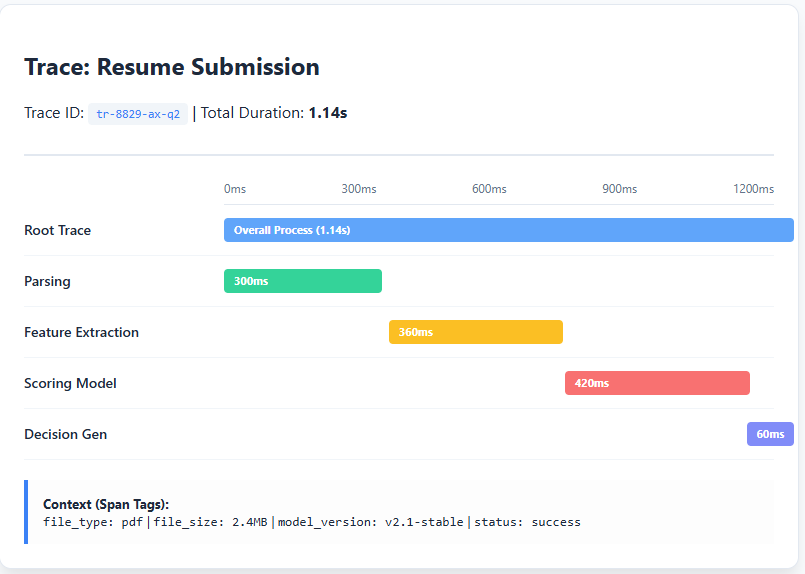

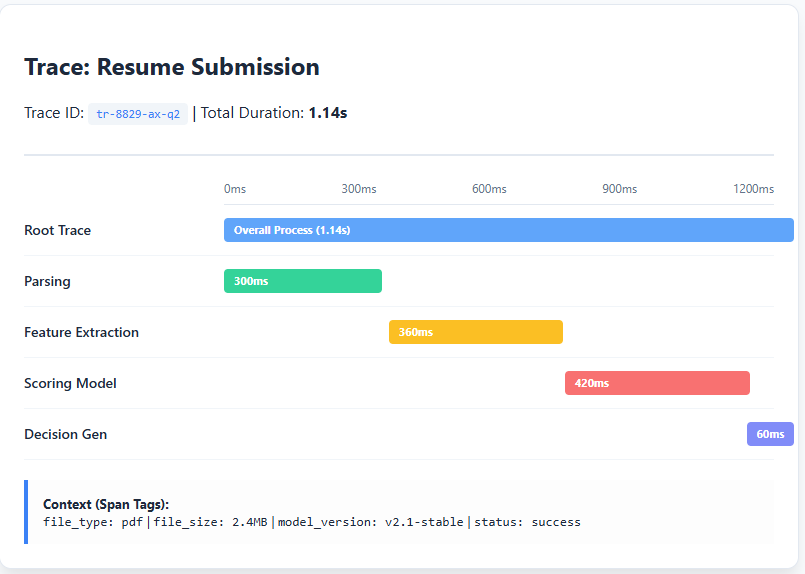

Think of an AI resume screening system as a sequence of steps rather than a single black box. A recruiter uploads a resume, the system processes it through multiple components, and finally returns a shortlist score or recommendation. Each step takes time, has a cost associated with it, and can also fail independently. Looking only at the final recommendation might not reveal the whole picture, because the finer details are easy to miss.

This is why traces and spans are important.

Traces

A trace represents the complete lifecycle of a single resume submission, from the moment the file is uploaded to the moment the final score is returned. You can think of it as one continuous timeline that captures everything that happens for that request. Every trace has a unique Trace ID, which ties all related operations together.

Spans

Each major operation inside the pipeline is captured as a span. These spans are nested within the trace and represent specific pieces of work.

Here’s what these spans look like in this system:

Upload Span

The resume is uploaded by the recruiter. This span records the timestamp, file size, format, and basic metadata. This is where the trace begins.

Parsing Span

The document is converted into structured text. This span captures parsing time and errors. If resumes fail to parse correctly or formatting breaks, the issue shows up here.

Feature Extraction Span

The parsed text is analyzed to extract skills, experience, and keywords. This span tracks latency and intermediate outputs. Poor extraction quality becomes visible at this stage.

Scoring Span

The extracted features are passed into a scoring model. This span logs model latency, confidence scores, and any fallback logic. This is often the most compute-intensive step.

Decision Span

The system generates a final recommendation (shortlist, reject, or review). This span records the output decision and response time.
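To make the trace and span structure concrete, here is a minimal sketch in plain Python. It is not the API of any particular observability tool: `Trace`, `Span`, `screen_resume`, the toy skill vocabulary, and the scoring rule are all hypothetical names and assumptions introduced for illustration. Each pipeline stage runs inside its own span, every span carries the shared Trace ID, and a failing stage marks only its own span as an error.

```python
import time
import uuid
from contextlib import contextmanager
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Span:
    name: str
    trace_id: str
    start: float
    end: Optional[float] = None
    status: str = "ok"
    attributes: dict = field(default_factory=dict)

    @property
    def duration_ms(self) -> float:
        return 0.0 if self.end is None else (self.end - self.start) * 1000


class Trace:
    """Collects every span recorded for one resume submission."""

    def __init__(self) -> None:
        self.trace_id = uuid.uuid4().hex  # shared by all spans in this trace
        self.spans = []

    @contextmanager
    def span(self, name, **attributes):
        s = Span(name, self.trace_id, time.perf_counter(), attributes=dict(attributes))
        self.spans.append(s)
        try:
            yield s
        except Exception as exc:
            # Record the failure on this span only, then let it propagate.
            s.status = "error"
            s.attributes["error"] = repr(exc)
            raise
        finally:
            s.end = time.perf_counter()


def screen_resume(raw: bytes, trace: Trace) -> str:
    """Toy pipeline: one span per stage described above (logic is illustrative)."""
    with trace.span("upload", file_size=len(raw), format="txt"):
        pass
    with trace.span("parsing") as s:
        text = raw.decode("utf-8")
        s.attributes["chars"] = len(text)
    with trace.span("feature_extraction") as s:
        known = {"python", "sql", "docker"}  # hypothetical skill vocabulary
        skills = sorted(w for w in set(text.lower().split()) if w in known)
        s.attributes["skills"] = skills
    with trace.span("scoring") as s:
        score = len(skills) / len(known)  # stand-in for a real scoring model
        s.attributes["score"] = score
    with trace.span("decision") as s:
        decision = "shortlist" if score >= 0.5 else "review"
        s.attributes["decision"] = decision
    return decision


if __name__ == "__main__":
    trace = Trace()
    screen_resume(b"Python SQL experience", trace)
    for s in trace.spans:
        print(trace.trace_id[:8], s.name, s.status, f"{s.duration_ms:.3f} ms", s.attributes)
```

Passing the `Trace` in from the caller is a deliberate choice in this sketch: if parsing raises, the caller still holds the trace and can see which span failed.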

Why Span-Level Observability Matters

Without span-level tracing, all you know is that the final recommendation was wrong; you have no visibility into whether the resume failed to parse correctly, key skills were missed during extraction, or the scoring model behaved unexpectedly. Span-level observability makes each of these failure modes explicit and debuggable.

It also reveals where time and money are actually being spent, such as whether parsing latency is increasing or scoring is dominating compute costs. Over time, as resume formats evolve, new skills emerge, and job requirements change, AI systems can quietly degrade. Monitoring spans independently allows teams to detect this drift early and fix specific components without retraining or redesigning the entire system.
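One simple way to watch spans independently is to compare each span's recent latency against a baseline. The sketch below is an assumption-laden illustration, not a method from any specific tool: the function name `latency_drift`, the 1.5x threshold, and the sample numbers are all hypothetical, and latency is only one of several signals that could indicate degradation.

```python
from statistics import mean


def latency_drift(baseline_ms, recent_ms, threshold=1.5):
    """Return {span_name: ratio} for spans whose recent mean latency
    exceeds `threshold` times their baseline mean latency."""
    flagged = {}
    for name, base in baseline_ms.items():
        recent = recent_ms.get(name)
        if not base or not recent:
            continue  # no data for this span in one of the windows
        base_mean = mean(base)
        if base_mean == 0:
            continue  # avoid dividing by zero on a degenerate baseline
        ratio = mean(recent) / base_mean
        if ratio > threshold:
            flagged[name] = round(ratio, 2)
    return flagged


# Hypothetical per-span latencies in milliseconds.
baseline = {"parsing": [40, 42, 38], "scoring": [300, 310, 290]}
recent = {"parsing": [90, 95, 85], "scoring": [305, 295, 300]}

print(latency_drift(baseline, recent))  # only "parsing" is flagged
```

Because each span is checked on its own, a regression in parsing is visible even when end-to-end latency is still dominated by scoring.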

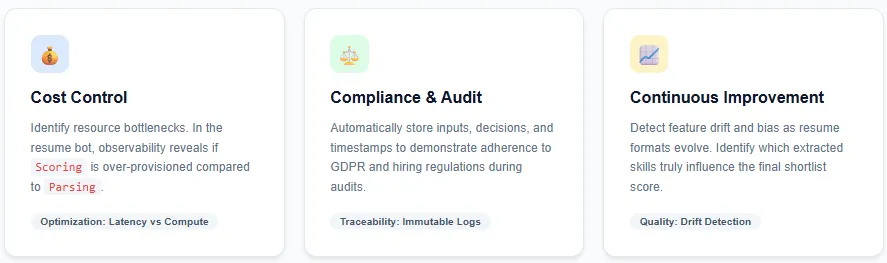

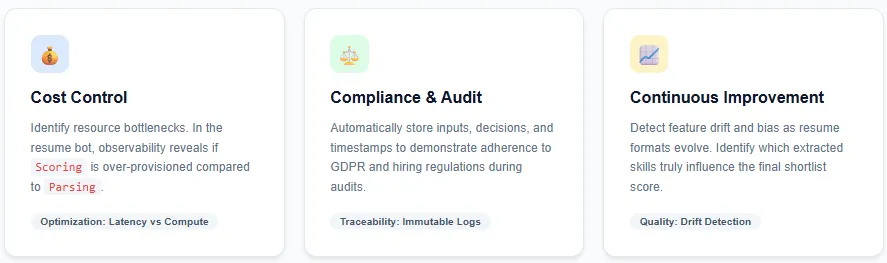

AI observability provides three core benefits: cost control, compliance, and continuous model improvement. By gaining visibility into how AI components interact with the broader system, teams can quickly spot wasted resources. For example, in the resume screening bot, observability might reveal that document parsing is lightweight while candidate scoring consumes most of the compute, allowing teams to optimize or scale resources accordingly.
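The cost breakdown described above can be sketched as a small aggregation over span records. Everything here is illustrative: the `cost_share` helper, the `(span_name, cost)` tuple shape, and the dollar figures are assumptions, not measurements from a real system.

```python
from collections import defaultdict


def cost_share(span_costs):
    """Given (span_name, cost) pairs, return each span's fraction of total cost."""
    totals = defaultdict(float)
    for name, cost in span_costs:
        totals[name] += cost
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {}
    return {name: total / grand_total for name, total in totals.items()}


# Hypothetical per-request costs in dollars, collected from two traces.
records = [
    ("parsing", 0.001), ("scoring", 0.009),
    ("parsing", 0.001), ("scoring", 0.009),
]

for name, share in sorted(cost_share(records).items(), key=lambda kv: -kv[1]):
    print(f"{name}: {share:.0%}")
```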

Observability tools also simplify compliance by automatically collecting and storing telemetry such as inputs, decisions, and timestamps; in the resume bot, this makes it easier to audit how candidate data was processed and demonstrate adherence to data protection and hiring regulations.
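As a rough sketch of what such audit telemetry could look like, the example below builds one record per decision and appends it as a JSON line. The field names, the `audit_record` and `write_audit_line` helpers, and the choice to store a SHA-256 hash of the resume instead of its raw contents are all assumptions made for illustration; real retention and privacy requirements depend on the applicable regulations.

```python
import hashlib
import io
import json
from datetime import datetime, timezone


def audit_record(trace_id, resume_bytes, decision, model_version):
    """Build one audit entry tying an input fingerprint to a decision and a time."""
    return {
        "trace_id": trace_id,
        "timestamp": datetime.now(timezone.utc).isoformat(),
        # Assumption: keep a fingerprint of the input, not the raw document.
        "input_sha256": hashlib.sha256(resume_bytes).hexdigest(),
        "decision": decision,
        "model_version": model_version,
    }


def write_audit_line(stream, record):
    """Append the record as a single JSON line to any text stream."""
    stream.write(json.dumps(record, sort_keys=True) + "\n")


# Usage with an in-memory stream; a real system would use durable storage.
log = io.StringIO()
write_audit_line(log, audit_record("trace-123", b"resume contents", "review", "v1"))
print(log.getvalue(), end="")
```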

Finally, the rich telemetry captured at each step helps model developers maintain integrity over time by detecting drift as resume formats and skills evolve, identifying which features actually influence decisions, and surfacing potential bias or fairness issues before they become systemic problems.

Langfuse is a popular open-source LLMOps and observability tool that has grown rapidly since its launch in June 2023. It’s model- and framework-agnostic, supports self-hosting, and integrates easily with tools like OpenTelemetry, LangChain, and the OpenAI SDK.

At a high level, Langfuse gives teams end-to-end visibility into their AI systems. It offers tracing of LLM calls, tools to evaluate model outputs using human or AI feedback, centralized prompt management, and dashboards for performance and cost monitoring. Because it works across different models and frameworks, it can be added to existing AI workflows with minimal friction.

Arize is an ML and LLM observability platform that helps teams monitor, evaluate, and analyze models in production. It supports both traditional ML models and LLM-based systems, and integrates well with tools like LangChain, LlamaIndex, and OpenAI-based agents, making it suitable for modern AI pipelines.

Phoenix, Arize’s open-source offering (licensed under ELv2), focuses on LLM observability. It includes built-in hallucination detection, detailed tracing using OpenTelemetry standards, and tools to inspect and debug model behavior. Phoenix is designed for teams that want transparent, self-hosted observability for LLM applications without relying on managed services.

TruLens is an observability tool that focuses primarily on the qualitative evaluation of LLM responses. Instead of emphasizing infrastructure-level metrics, TruLens attaches feedback functions to each LLM call and evaluates the generated response after it’s produced. These feedback functions behave like models themselves, scoring or assessing aspects such as relevance, coherence, or alignment with expectations.
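The general idea of a feedback function can be sketched in plain Python. This is not the TruLens API: `keyword_relevance`, `evaluate_call`, and `fake_llm` are hypothetical names, and the keyword-overlap heuristic is a deliberately crude stand-in for the model-based scoring that real feedback functions often use.

```python
def keyword_relevance(prompt: str, response: str) -> float:
    """Fraction of distinct prompt words that also appear in the response (0.0 to 1.0)."""
    prompt_words = set(prompt.lower().split())
    if not prompt_words:
        return 0.0
    response_words = set(response.lower().split())
    return len(prompt_words & response_words) / len(prompt_words)


def evaluate_call(llm, prompt, feedback_fns):
    """Call the model, then score the finished response with each feedback function."""
    response = llm(prompt)
    scores = {fn.__name__: fn(prompt, response) for fn in feedback_fns}
    return response, scores


def fake_llm(prompt: str) -> str:
    """Stand-in for a real model call so the example runs offline."""
    return "python developer with sql"


response, scores = evaluate_call(fake_llm, "python sql skills", [keyword_relevance])
print(response, scores)
```

Scoring happens after the response is produced, so feedback functions can be added or swapped without touching the call to the model itself.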

TruLens is Python-only and is available as free and open-source software under the MIT License, making it easy to adopt for teams that want lightweight, response-level evaluation without a full LLMOps platform.

I’m a Civil Engineering Graduate (2022) from Jamia Millia Islamia, New Delhi, and I have a keen interest in Data Science, especially Neural Networks and their application in various areas.
