In industrial suggestion methods, the shift towards Generative Retrieval (GR) is changing conventional embedding-based nearest neighbor search with Massive Language Fashions (LLMs). These fashions characterize gadgets as Semantic IDs (SIDs)—discrete token sequences—and deal with retrieval as an autoregressive decoding activity. Nonetheless, industrial purposes typically require strict adherence to enterprise logic, akin to imposing content material freshness or stock availability. Commonplace autoregressive decoding can’t natively implement these constraints, typically main the mannequin to “hallucinate” invalid or out-of-stock merchandise identifiers.

The Accelerator Bottleneck: Tries vs. TPUs/GPUs

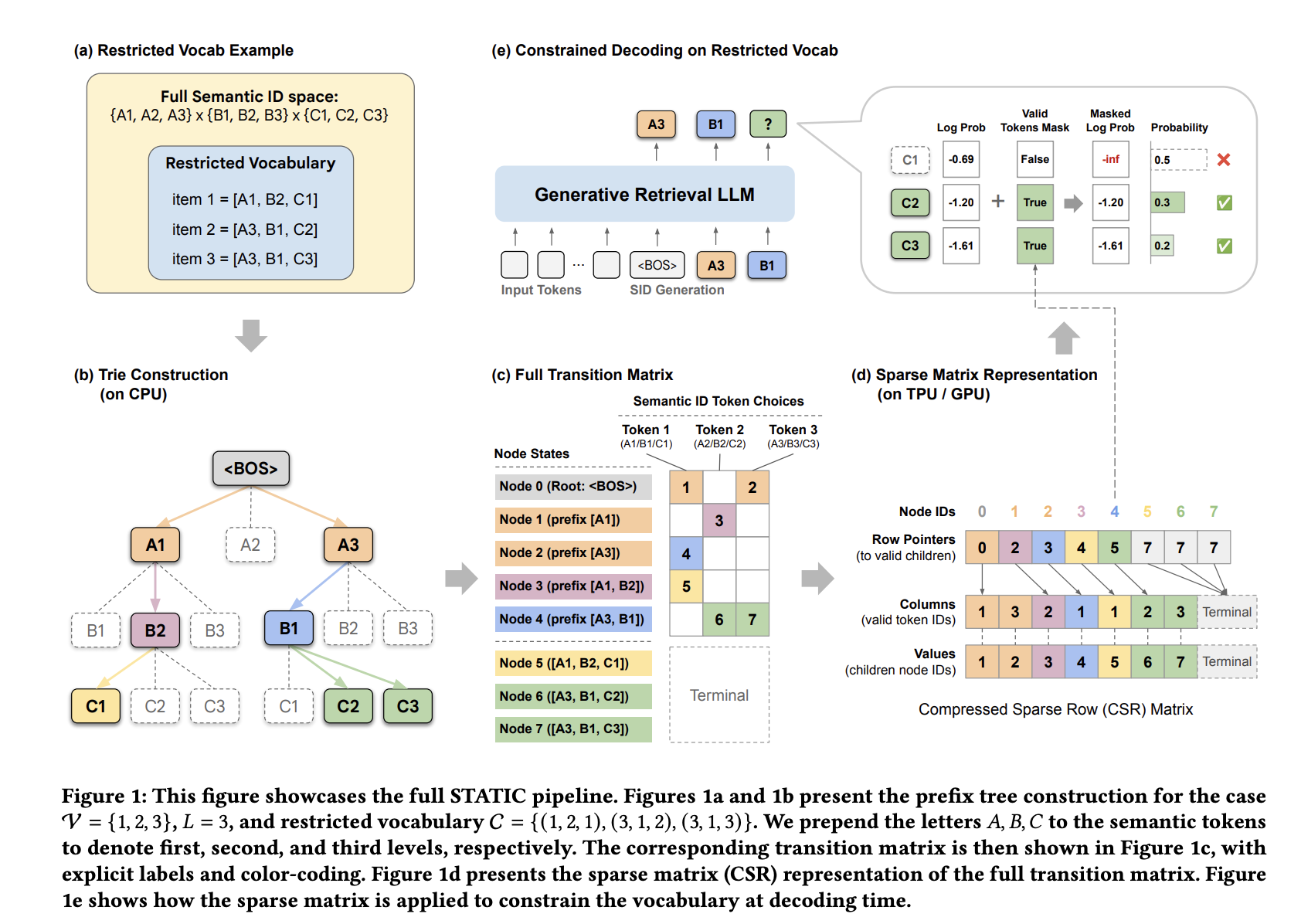

To make sure legitimate output, builders sometimes use a prefix tree (trie) to masks invalid tokens throughout every decoding step. Whereas conceptually simple, conventional trie implementations are basically inefficient on {hardware} accelerators like TPUs and GPUs.

The effectivity hole stems from two major points:

- Reminiscence Latency: Pointer-chasing buildings lead to non-contiguous, random reminiscence entry patterns. This prevents reminiscence coalescing and fails to make the most of the Excessive-Bandwidth Reminiscence (HBM) burst capabilities of contemporary accelerators.

- Compilation Incompatibility: Accelerators depend on static computation graphs for machine studying compilation (e.g., Google’s XLA). Commonplace tries use data-dependent management circulation and recursive branching, that are incompatible with this paradigm and sometimes power pricey host-device round-trips.

STATIC: Sparse Transition Matrix-Accelerated Trie Index

Google DeepMind and Youtube Researchers have launched STATIC (Sparse Transition Matrix-Accelerated Trie Index for Constrained Decoding) to resolve these bottlenecks. As an alternative of treating the trie as a graph to be traversed, STATIC flattens it right into a static Compressed Sparse Row (CSR) matrix. This transformation permits irregular tree traversals to be executed as totally vectorized sparse matrix operations.

The Hybrid Decoding Structure

STATIC employs a two-phase lookup technique to steadiness reminiscence utilization and pace:

- Dense Masking (t-1 < d): For the primary d=2 layers, the place the branching issue is highest, STATIC makes use of a bit-packed dense boolean tensor. This enables for O(1) lookups throughout probably the most computationally costly preliminary steps.

- Vectorized Node Transition Kernel (VNTK): For deeper layers (l ≥ 3), STATIC makes use of a branch-free kernel. This kernel performs a ‘speculative slice’ of a set variety of entries (Bt), equivalent to the utmost department issue at that degree. By utilizing a fixed-size slice whatever the precise youngster depend, your complete decoding course of stays a single, static computation graph.

This method achieves an I/O complexity of O(1) relative to the constraint set measurement, whereas earlier hardware-accelerated binary-search strategies scaled logarithmically (O(log|C|)).

Efficiency and Scalability

Evaluated on Google TPU v6e accelerators utilizing a 3-billion parameter mannequin with a batch measurement of two and a beam measurement (M) of 70, STATIC demonstrated vital efficiency positive factors over present strategies.

| Technique | Latency Overhead per Step (ms) | % of Complete Inference Time |

| STATIC (Ours) | +0.033 | 0.25% |

| PPV Approximate | +1.56 | 11.9% |

| Hash Bitmap | +12.3 | 94.0% |

| CPU Trie | +31.3 | 239% |

| PPV Actual | +34.1 | 260% |

STATIC achieved a 948x speedup over CPU-offloaded tries and outperformed the precise binary-search baseline (PPV) by 1033x. Its latency stays practically fixed even because the Semantic ID vocabulary measurement (|V|) will increase.

For a vocabulary of 20 million gadgets, STATIC’s higher certain for HBM utilization is roughly 1.5 GB. In observe, because of the non-uniform distribution and clustering of Semantic IDs, precise utilization is often ≤75% of this certain. The rule of thumb for capability planning is roughly 90 MB of HBM per 1 million constraints.

Deployment Outcomes

STATIC was deployed on YouTube to implement a ‘final 7 days’ freshness constraint for video suggestions. The system served a vocabulary of 20 million recent gadgets with 100% compliance.

On-line A/B testing confirmed:

- A +5.1% improve in 7-day recent video views.

- A +2.9% improve in 3-day recent video views.

- A +0.15% improve in click-through charge (CTR).

Chilly-Begin Efficiency

The framework additionally addresses the ‘cold-start’ limitation of generative retrieval—recommending gadgets not seen throughout coaching. By constraining the mannequin to a cold-start merchandise set on Amazon Critiques datasets, STATIC considerably improved efficiency over unconstrained baselines, which recorded 0.00% Recall@1. For these assessments, a 1-billion parameter Gemma structure was used with L = 4 tokens and a vocabulary measurement of |V|=256.

Key Takeaways

- Vectorized Effectivity: STATIC recasts constrained decoding from a graph traversal drawback into hardware-friendly, vectorized sparse matrix operations by flattening prefix bushes into static Compressed Sparse Row (CSR) matrices.

- Large Speedups: The system achieves a 0.033ms per-step latency, representing a 948x speedup over CPU-offloaded tries and a 47–1033x speedup over hardware-accelerated binary-search baselines.+1

- Scalable O(1) Complexity: By attaining O(1) I/O complexity relative to constraint set measurement, STATIC maintains excessive efficiency with a low reminiscence footprint of roughly 90 MB per 1 million gadgets.

- Manufacturing-Confirmed Outcomes: Deployment on YouTube confirmed 100% compliance with enterprise logic constraints, driving a 5.1% improve in recent video views and a 0.15% increase in click-through charges.

- Chilly-Begin Answer: The framework allows generative retrieval fashions to efficiently advocate cold-start gadgets, boosting Recall@1 efficiency from 0.00% to non-trivial ranges on Amazon Critiques benchmarks.

Try the Paper and Codes. Additionally, be at liberty to observe us on Twitter and don’t neglect to hitch our 120k+ ML SubReddit and Subscribe to our E-newsletter. Wait! are you on telegram? now you may be part of us on telegram as effectively.

Elevate your perspective with NextTech Information, the place innovation meets perception.

Uncover the most recent breakthroughs, get unique updates, and join with a world community of future-focused thinkers.

Unlock tomorrow’s tendencies right now: learn extra, subscribe to our e-newsletter, and change into a part of the NextTech group at NextTech-news.com