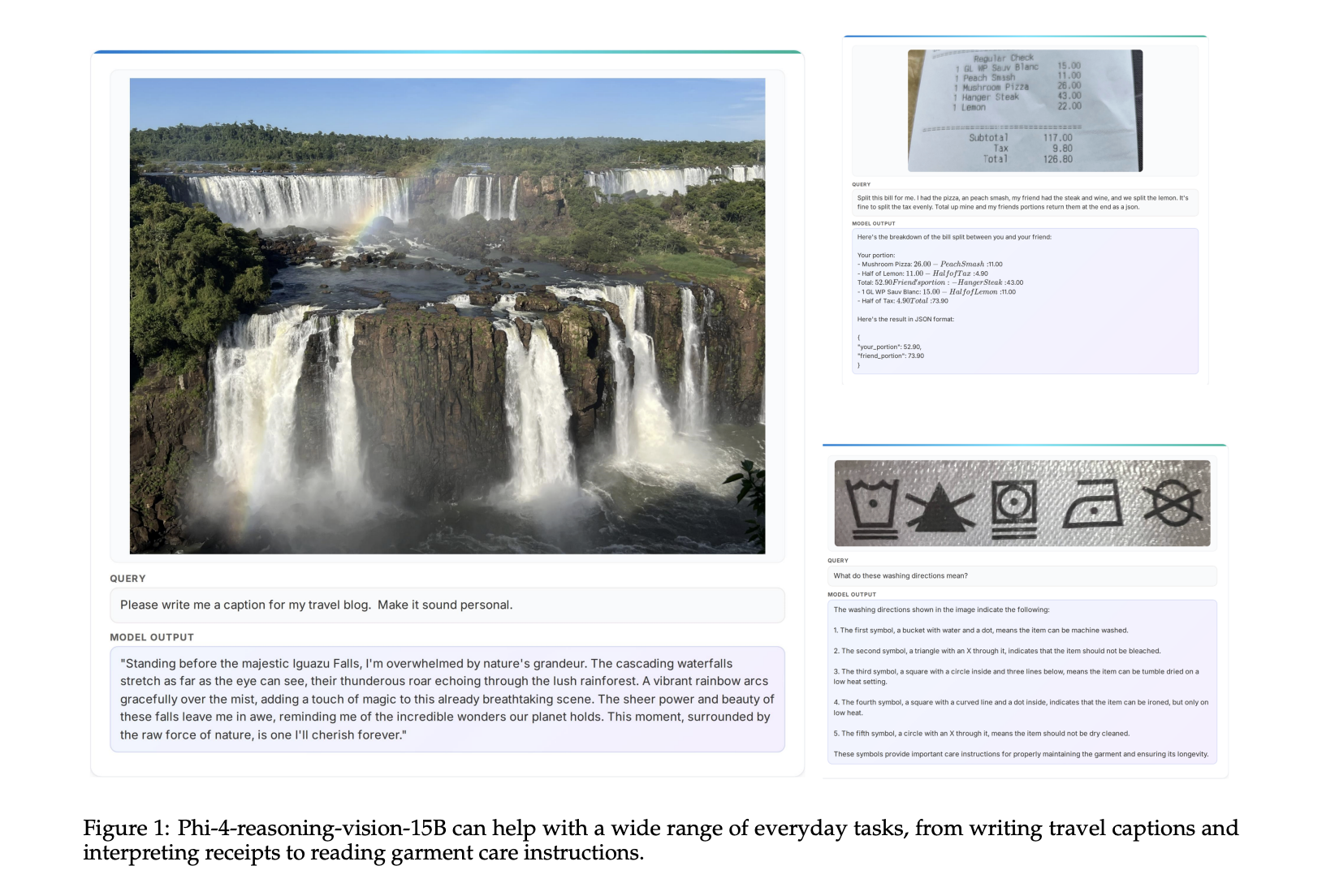

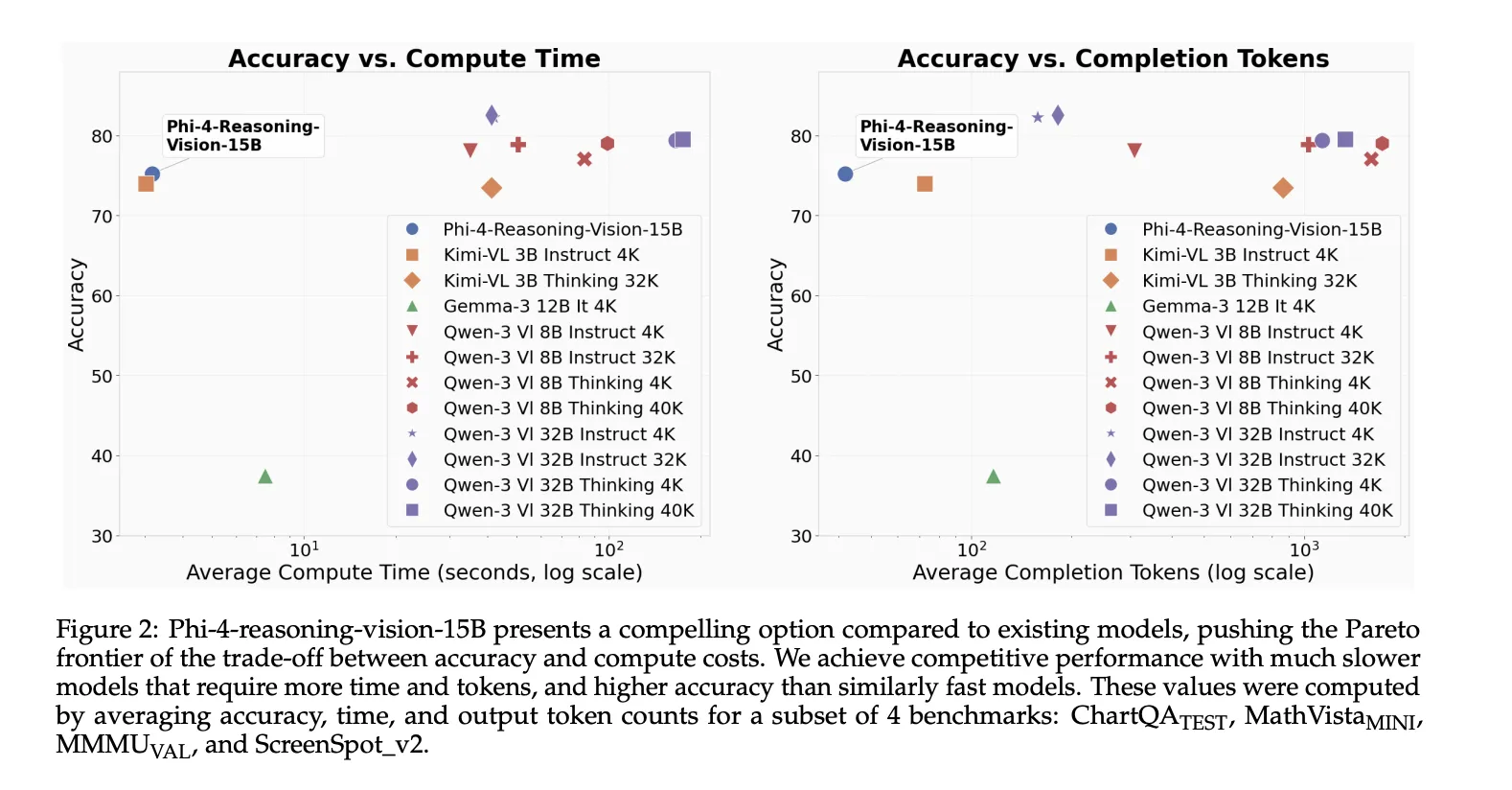

Microsoft has released Phi-4-reasoning-vision-15B, a 15-billion-parameter open-weight multimodal reasoning model designed for image and text tasks that require both perception and selective reasoning. It is a compact model built to balance reasoning quality, compute efficiency, and training-data requirements, with particular strength in scientific and mathematical reasoning and in understanding user interfaces.

What is the model built on?

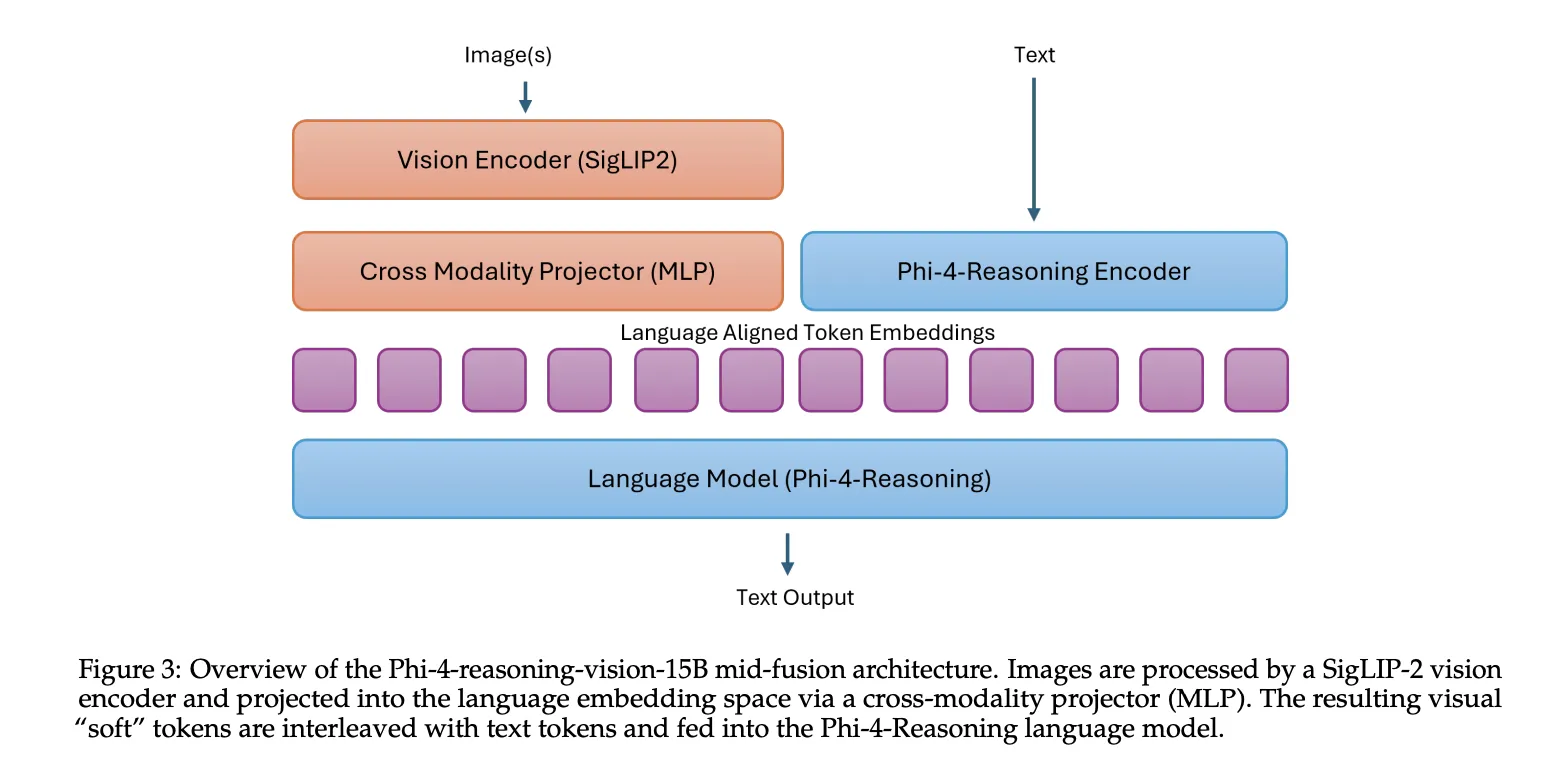

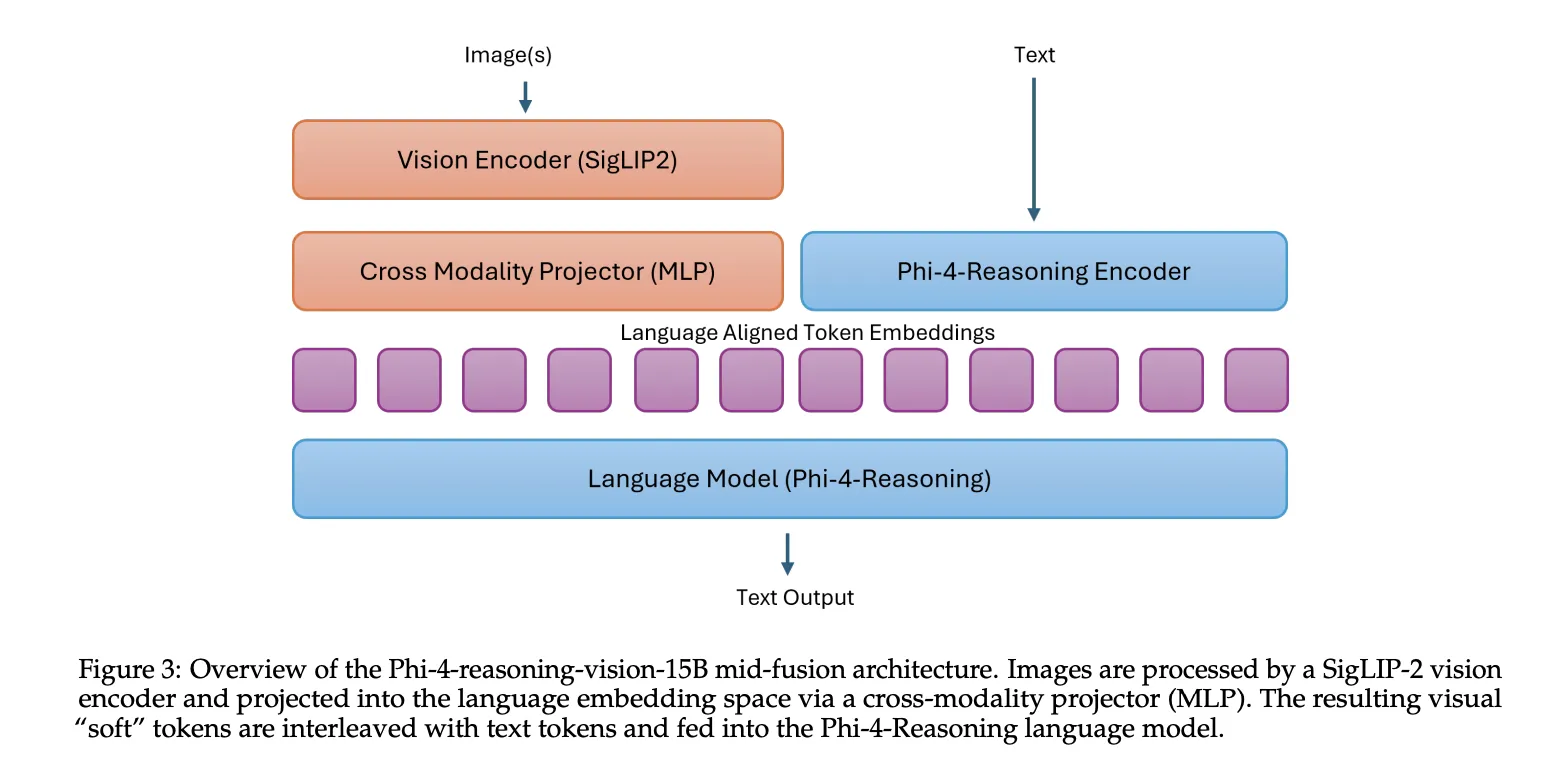

Phi-4-reasoning-vision-15B combines the Phi-4-Reasoning language backbone with the SigLIP-2 vision encoder using a mid-fusion architecture. In this setup, the vision encoder first converts images into visual tokens; these tokens are then projected into the language model’s embedding space and processed by the pretrained language model. This design is a practical trade-off: it preserves strong cross-modal reasoning while keeping training and inference costs manageable compared with heavier early-fusion designs.
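To make the data flow concrete, here is a minimal toy sketch of the mid-fusion idea: visual tokens are linearly projected to the language model's embedding width and then joined with text embeddings in one sequence. Everything in it is an assumption for illustration: the toy dimensions, the single linear projection, the random values, the function names `project` and `build_input_sequence`, and the choice to place visual tokens before text. It is not Microsoft's implementation.

```python
import random

def project(visual_tokens, weight):
    """Map each visual token (width D_VISION) to the LM embedding width (D_LM).

    `weight` has shape (D_VISION, D_LM); this is a plain matrix multiply.
    """
    return [
        [sum(v * w for v, w in zip(token, column)) for column in zip(*weight)]
        for token in visual_tokens
    ]

def build_input_sequence(visual_tokens, text_embeddings, weight):
    """Join projected visual tokens and text embeddings into one sequence.

    Putting visual tokens first is an assumption made for this sketch.
    """
    return project(visual_tokens, weight) + text_embeddings

random.seed(0)
D_VISION, D_LM = 4, 6  # toy sizes, not the real model dimensions

# Stand-ins for the vision encoder output and the embedded text prompt.
visual_tokens = [[random.random() for _ in range(D_VISION)] for _ in range(3)]
text_embeddings = [[random.random() for _ in range(D_LM)] for _ in range(5)]
weight = [[random.random() for _ in range(D_LM)] for _ in range(D_VISION)]

sequence = build_input_sequence(visual_tokens, text_embeddings, weight)
print(len(sequence), len(sequence[0]))  # prints: 8 6
```

The point of the sketch is that the language model receives a single sequence in which image and text positions share one embedding width, which is why the pretrained language model can process both without architectural changes to its core.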

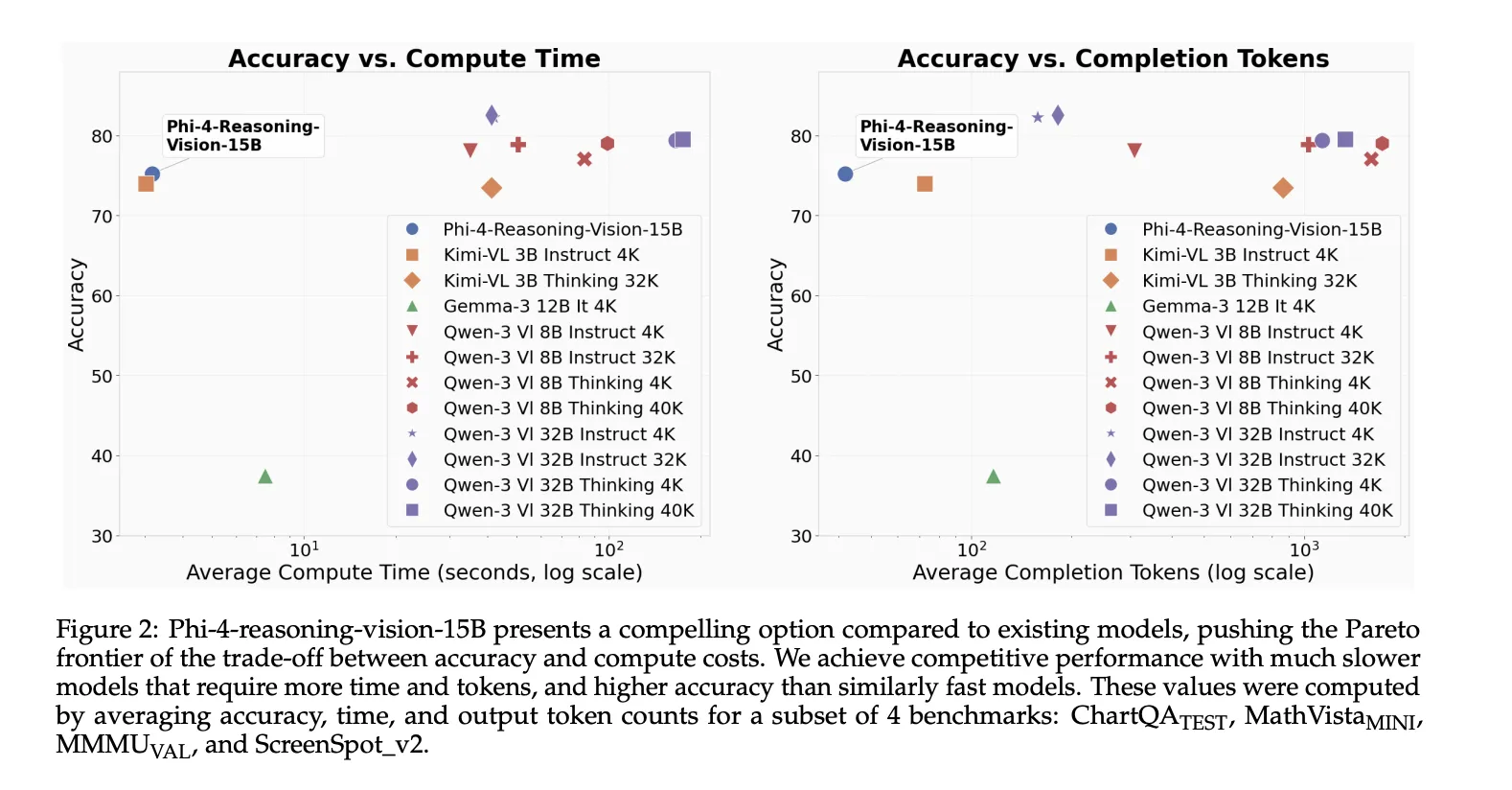

Why Microsoft took the smaller-model route

Many recent vision-language models have grown in parameter count and token usage, which raises both latency and deployment cost. Phi-4-reasoning-vision-15B was built as a smaller alternative that still handles common multimodal workloads without relying on extremely large training datasets or excessive inference-time token generation. The model was trained on 200 billion multimodal tokens, building on Phi-4-Reasoning, which was trained on 16 billion tokens, and ultimately on the Phi-4 base model, which was trained on 400 billion unique tokens. Microsoft contrasts that with the more than 1 trillion tokens used to train several recent multimodal models such as Qwen 2.5 VL, Qwen 3 VL, Kimi-VL, and Gemma 3.
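For a rough sense of scale, the figures quoted above can be compared directly. The snippet below is only back-of-the-envelope arithmetic: summing the three stages into one cumulative number is an assumption made here for comparison, and the 1 trillion figure is treated as a lower bound because the article says "more than".

```python
# Token counts as stated in the article.
MULTIMODAL_TOKENS = 200 * 10**9   # Phi-4-reasoning-vision-15B multimodal training
REASONING_TOKENS = 16 * 10**9     # Phi-4-Reasoning
BASE_TOKENS = 400 * 10**9         # Phi-4 base model (unique tokens)
COMPARISON_FLOOR = 10**12         # "more than 1 trillion", used as a lower bound

# Assumption: add the three stages to get a rough cumulative figure.
cumulative = MULTIMODAL_TOKENS + REASONING_TOKENS + BASE_TOKENS

# How many times larger the comparison floor is than the multimodal stage alone.
ratio_multimodal = COMPARISON_FLOOR / MULTIMODAL_TOKENS

print(cumulative // 10**9)  # prints: 616
print(ratio_multimodal)     # prints: 5.0
```

Under these assumptions, the multimodal training stage used at most one fifth of the tokens of the comparison models, and even the cumulative figure across all three stages stays below the 1 trillion mark.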

High-resolution perception was a core design choice

One of the more useful technical lessons in Microsoft’s technical report is that multimodal reasoning often fails because perception fails first. Models can miss the answer not because they lack reasoning ability, but because they fail to extract the relevant visual details from dense images such as screenshots, documents, or interfaces with small interactive elements.

Phi-4-reasoning-vision-15B uses a dynamic-resolution vision encoder with up to 3,600 visual tokens, which is intended to support high-resolution understanding for tasks such as GUI grounding and fine-grained document analysis. The Microsoft team states that high-resolution, dynamic-resolution encoders yield consistent improvements, and explicitly notes that accurate perception is a prerequisite for high-quality reasoning.
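One way to picture a dynamic-resolution token budget is as a patch grid that follows the image's aspect ratio and is shrunk only when it would exceed the cap. The sketch below is a simplified illustration, not the encoder's actual algorithm: the 16-pixel patch size, the one-token-per-patch mapping, and the function name `patch_grid` are all hypothetical. Only the 3,600-token cap comes from the article.

```python
import math

MAX_VISUAL_TOKENS = 3600  # budget stated in the article
PATCH_SIZE = 16           # hypothetical patch size in pixels

def patch_grid(width, height, patch=PATCH_SIZE, budget=MAX_VISUAL_TOKENS):
    """Return (cols, rows) of patches, assuming one visual token per patch.

    Small images keep their native grid. Larger images are scaled down
    uniformly so that cols * rows never exceeds the budget.
    """
    cols = math.ceil(width / patch)
    rows = math.ceil(height / patch)
    if cols * rows > budget:
        # Shrink both sides by the same factor to preserve aspect ratio.
        scale = math.sqrt(budget / (cols * rows))
        cols = max(1, min(budget, math.floor(cols * scale)))
        rows = max(1, min(budget // cols, math.floor(rows * scale)))
    return cols, rows

print(patch_grid(640, 480))    # (40, 30): 1,200 tokens, under budget
print(patch_grid(1920, 1080))  # (79, 45): 3,555 tokens after downscaling
```

Under these assumptions, a small image is encoded at its native grid, while a full-HD screenshot is reduced just enough to fit, which keeps as much fine detail as the budget allows.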

Mixed reasoning instead of forcing reasoning everywhere

A second important design decision is the model’s mixed reasoning and non-reasoning training strategy. Rather than forcing chain-of-thought-style reasoning for all tasks, the Microsoft team trained the model to switch between two modes. Reasoning samples include

The goal of this hybrid setup is to let the model answer directly on tasks where longer reasoning adds latency without improving accuracy, while still invoking structured reasoning on tasks such as math and science. The Microsoft team also notes an important limitation: the boundary between these modes is learned implicitly, so switching is not always optimal. Users can override the default behavior via explicit prompting with

Which areas are strongest?

The Microsoft team highlights two main application areas. The first is scientific and mathematical reasoning over visual inputs, including handwritten equations, diagrams, charts, tables, and quantitative documents. The second is computer-use agent tasks, where the model interprets screen content, localizes GUI elements, and supports interaction with desktop, web, or mobile interfaces.

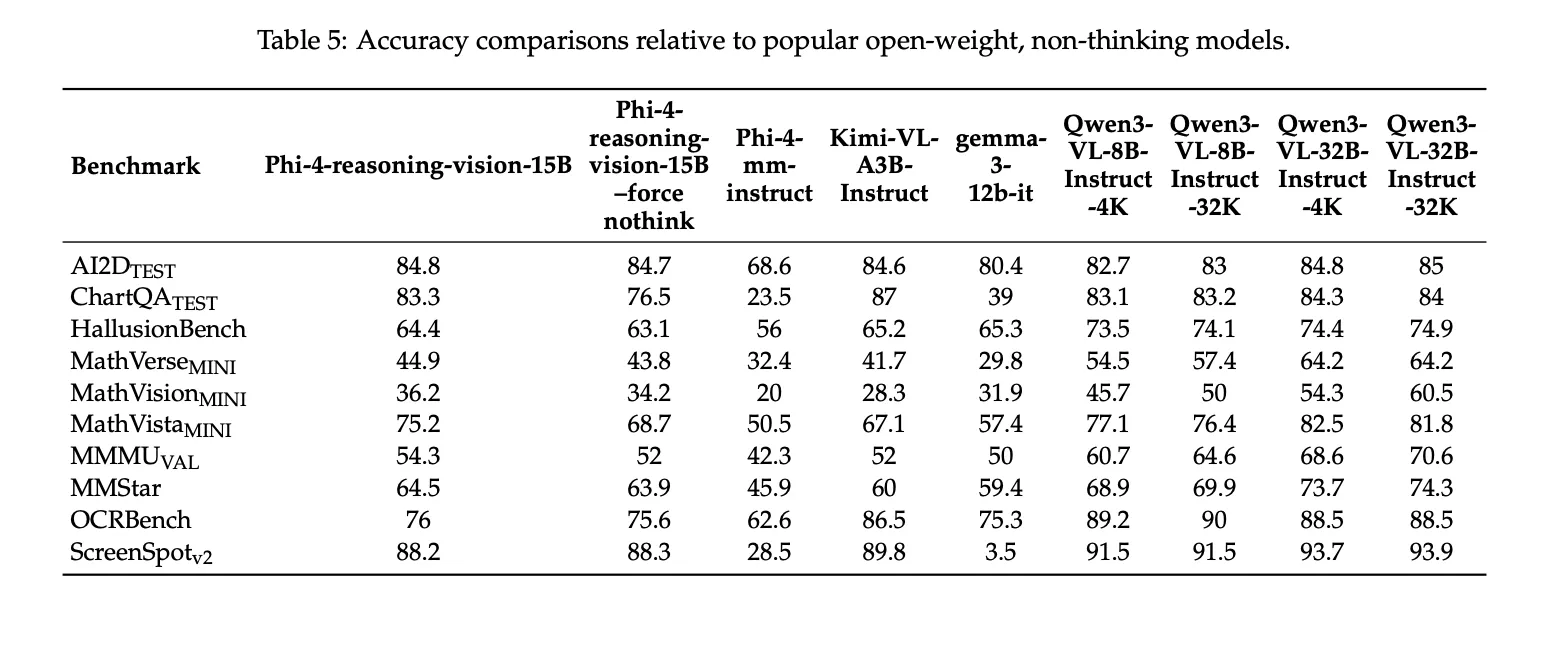

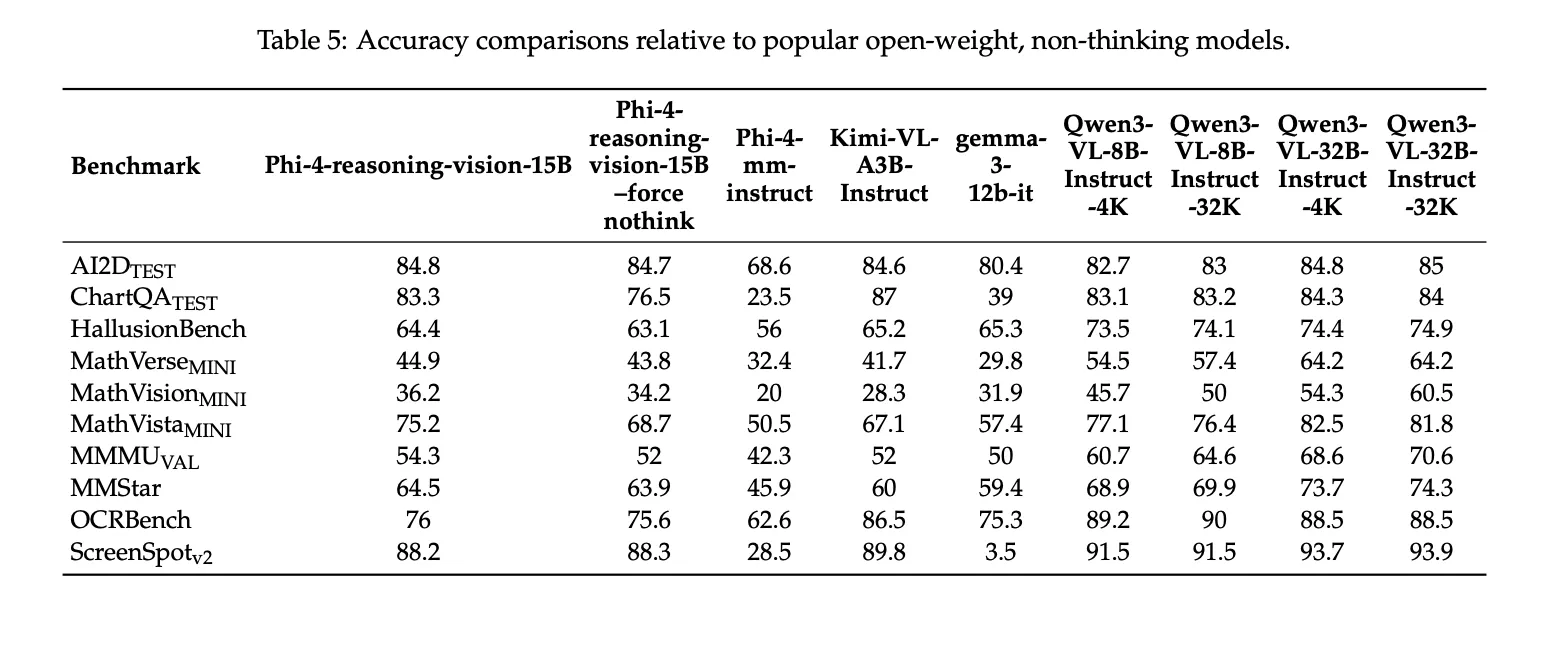

Benchmark results

The Microsoft team reports the following benchmark scores for Phi-4-reasoning-vision-15B: 84.8 on AI2D_TEST, 83.3 on ChartQA_TEST, 44.9 on MathVerse_MINI, 36.2 on MathVision_MINI, 75.2 on MathVista_MINI, 54.3 on MMMU_VAL, 64.5 on MMStar, 76.0 on OCRBench, and 88.2 on ScreenSpot_v2. The technical report also notes that these results were generated using Eureka ML Insights and VLMEvalKit with fixed evaluation settings, and that the Microsoft team presents them as comparison results rather than leaderboard claims.

Key Takeaways

- Phi-4-reasoning-vision-15B is a 15B open-weight multimodal model built by combining Phi-4-Reasoning with the SigLIP-2 vision encoder in a mid-fusion architecture.

- The Microsoft team designed the model for compact multimodal reasoning, with a focus on math, science, document understanding, and GUI grounding, rather than scaling to a much larger parameter count.

- High-resolution visual perception is a core part of the system, with support for dynamic-resolution encoding and up to 3,600 visual tokens, which helps on dense screenshots, documents, and interface-heavy tasks.

- The model uses mixed reasoning and non-reasoning training, allowing it to switch between

- Microsoft’s reported benchmarks show strong performance for its size, including results on AI2D_TEST, ChartQA_TEST, MathVista_MINI, OCRBench, and ScreenSpot_v2, which supports its positioning as a compact but capable vision-language reasoning model.
