In the development of autonomous agents, the technical bottleneck is shifting from model reasoning to the execution environment. While Large Language Models (LLMs) can generate code and multi-step plans, providing a functional and isolated environment for that code to run remains a significant infrastructure challenge.

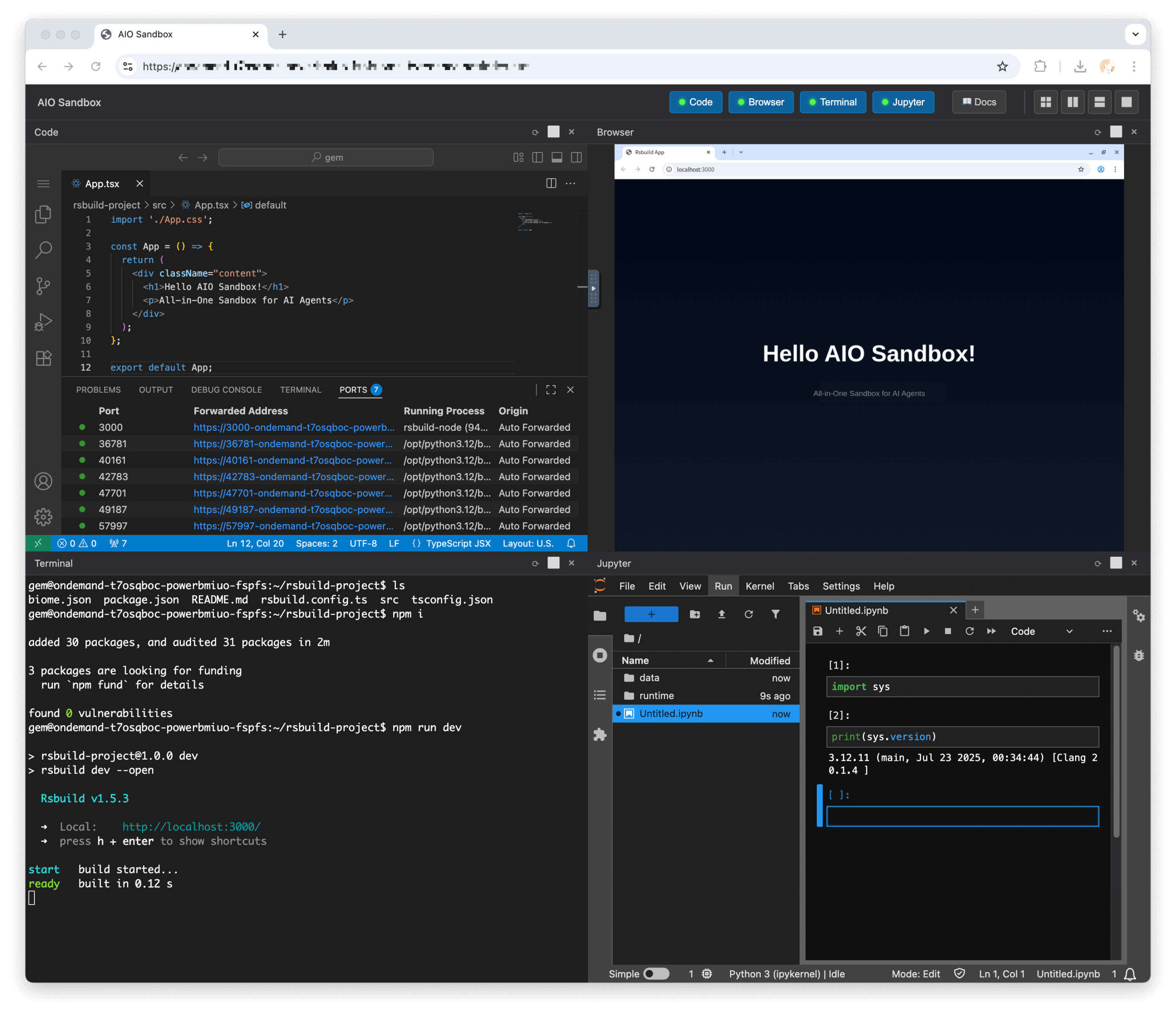

Agent-Infra’s Sandbox, an open-source project, addresses this by providing an ‘All-in-One’ (AIO) execution layer. Unlike standard containerization, which often requires manual configuration for tool-chaining, the AIO Sandbox integrates a browser, a shell, and a file system into a single environment designed for AI agents.

The All-in-One Architecture

The primary architectural hurdle in agent development is tool fragmentation. Typically, an agent might need a browser to fetch data, a Python interpreter to analyze it, and a filesystem to store the results. Managing these as separate services introduces latency and synchronization complexity.

Agent-Infra consolidates these requirements into a single containerized environment. The sandbox includes:

- Computer Interaction: A Chromium browser controllable via the Chrome DevTools Protocol (CDP), with documented support for Playwright.

- Code Execution: Pre-configured runtimes for Python and Node.js.

- Standard Tooling: A bash terminal and a file system accessible across modules.

- Development Interfaces: Integrated VSCode Server and Jupyter Notebook instances for monitoring and debugging.
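To make the browser component concrete, here is a minimal sketch of attaching Playwright to an already-running Chromium over CDP. It assumes the sandbox exposes Chromium's standard DevTools HTTP endpoint (`/json/version`); the host, port, and sample payload are placeholders rather than values documented by the project, and the `playwright` package must be installed separately for the second function to work.

```python
import json


def websocket_url_from_version(payload: str) -> str:
    """Extract the CDP WebSocket URL from a Chromium /json/version response."""
    info = json.loads(payload)
    try:
        return info["webSocketDebuggerUrl"]
    except KeyError as exc:
        raise ValueError("response has no webSocketDebuggerUrl field") from exc


def fetch_page_title(cdp_url: str, target: str) -> str:
    """Attach to a running Chromium over CDP and return the title of `target`.

    Requires the optional `playwright` package; it is imported lazily so the
    parsing helper above can be used without it.
    """
    from playwright.sync_api import sync_playwright

    with sync_playwright() as p:
        browser = p.chromium.connect_over_cdp(cdp_url)
        # Reuse the browser's existing context when there is one.
        context = browser.contexts[0] if browser.contexts else browser.new_context()
        page = context.new_page()
        page.goto(target)
        title = page.title()
        page.close()
        return title


# Illustrative payload shaped like Chromium's /json/version output.
# The values are made up for this example.
SAMPLE_VERSION = json.dumps({
    "Browser": "Chrome/0.0.0.0",
    "webSocketDebuggerUrl": "ws://localhost:9222/devtools/browser/example-id",
})

ws_url = websocket_url_from_version(SAMPLE_VERSION)
print(ws_url)
```

Against a live sandbox, the URL returned by the first helper would be passed to `fetch_page_title`, which is not called here because it needs a reachable browser.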

The Unified File System

A core technical feature of the Sandbox is its Unified File System. In a standard agentic workflow, an agent might download a file using a browser-based tool. In a fragmented setup, that file must be programmatically moved to a separate environment for processing.

The AIO Sandbox uses a shared storage layer. This means a file downloaded via the Chromium browser is immediately visible to the Python interpreter and the Bash shell. This shared state allows for transitions between tasks, such as an agent downloading a CSV from a web portal and immediately running a data cleaning script in Python, without external data handling.
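The shared-workspace pattern can be illustrated locally without the sandbox itself. The sketch below is a simulation only: a temporary directory stands in for the shared storage layer, and three plain functions stand in for the browser, Python, and shell components. None of the function names come from the Agent-Infra SDK.

```python
import csv
import subprocess
import sys
import tempfile
from pathlib import Path


def browser_download(workspace: Path) -> Path:
    """Stand-in for a browser download: writes a messy CSV into the workspace."""
    target = workspace / "report.csv"
    target.write_text("name,score\n alice ,90\n\nbob, 85 \n", encoding="utf-8")
    return target


def clean_csv(source: Path) -> Path:
    """Stand-in for the Python step: strip whitespace and drop empty rows."""
    cleaned = source.with_name("report.clean.csv")
    with source.open(newline="", encoding="utf-8") as src, \
            cleaned.open("w", newline="", encoding="utf-8") as dst:
        writer = csv.writer(dst)
        for row in csv.reader(src):
            if row:  # blank lines are parsed as empty lists
                writer.writerow([cell.strip() for cell in row])
    return cleaned


def shell_line_count(path: Path) -> int:
    """Stand-in for the shell step: a separate process reads the same file."""
    script = "import sys; print(sum(1 for _ in open(sys.argv[1])))"
    result = subprocess.run(
        [sys.executable, "-c", script, str(path)],
        capture_output=True, text=True, check=True,
    )
    return int(result.stdout.strip())


with tempfile.TemporaryDirectory() as tmp:
    workspace = Path(tmp)
    raw = browser_download(workspace)
    cleaned = clean_csv(raw)
    with cleaned.open(newline="", encoding="utf-8") as fh:
        cleaned_rows = list(csv.reader(fh))
    line_count = shell_line_count(cleaned)

print(cleaned_rows)
print(line_count)
```

The point of the simulation is that no step copies or uploads the file: each one receives a path inside the same directory, which is the property the Unified File System provides across the browser, interpreter, and shell.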

Model Context Protocol (MCP) Integration

The Sandbox includes native support for the Model Context Protocol (MCP), an open standard that facilitates communication between AI models and tools. By providing pre-configured MCP servers, Agent-Infra allows developers to expose sandbox capabilities to LLMs via a standardized protocol.

The available MCP servers include:

- Browser: For web navigation and data extraction.

- File: For operations on the unified filesystem.

- Shell: For executing system commands.

- Markitdown: For converting document formats into Markdown to optimize them for LLM consumption.
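MCP messages are JSON-RPC 2.0, and tools are discovered and invoked with the `tools/list` and `tools/call` methods. The sketch below only builds the request payloads a client would send. The tool name `shell_exec` and its `command` argument are hypothetical; the real names are whatever the sandbox's MCP servers advertise in their `tools/list` response.

```python
import itertools
import json
from typing import Any, Dict, Optional

# Monotonic request ids, as JSON-RPC requires each request to carry an id.
_request_ids = itertools.count(1)


def jsonrpc_request(method: str, params: Optional[Dict[str, Any]] = None) -> Dict[str, Any]:
    """Build a JSON-RPC 2.0 request envelope."""
    request: Dict[str, Any] = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": method,
    }
    if params is not None:
        request["params"] = params
    return request


def list_tools_request() -> Dict[str, Any]:
    """Ask an MCP server which tools it exposes."""
    return jsonrpc_request("tools/list")


def call_tool_request(name: str, arguments: Dict[str, Any]) -> Dict[str, Any]:
    """Invoke a named tool with JSON arguments."""
    return jsonrpc_request("tools/call", {"name": name, "arguments": arguments})


list_msg = list_tools_request()
# "shell_exec" and "command" are hypothetical placeholders.
call_msg = call_tool_request("shell_exec", {"command": "ls -la"})

print(json.dumps(list_msg))
print(json.dumps(call_msg, indent=2))
```

A real client would first complete the MCP `initialize` handshake and then send these messages over the server's transport; an MCP client library normally handles both steps.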

Isolation and Deployment

The Sandbox is designed for ‘enterprise-grade Docker deployment,’ focusing on isolation and scalability. While it provides a persistent environment for complex tasks, such as maintaining a terminal session over multiple turns, it is built to be lightweight enough for high-density deployment.

Deployment and Control:

- Infrastructure: The project includes Kubernetes (K8s) deployment examples, allowing teams to leverage K8s-native features like resource limits (CPU and memory) to manage the sandbox’s footprint.

- Container Isolation: By running agent actions inside a dedicated container, the sandbox provides a layer of separation between the agent’s generated code and the host system.

- Access: The environment is managed through an API and SDK, allowing developers to programmatically trigger commands, execute code, and manage the environment state.
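As an illustration of the resource-limit point, the following sketch builds a Kubernetes Pod manifest as a Python dictionary and serializes it to JSON, which `kubectl apply -f` accepts alongside YAML. The image reference, pod name, port, and limit values are placeholders chosen for the example and are not taken from the project's own K8s manifests.

```python
import json
from typing import Any, Dict


def sandbox_pod_manifest(
    name: str,
    image: str,
    cpu_limit: str = "1",
    memory_limit: str = "2Gi",
    port: int = 8080,  # placeholder port, not confirmed by the source
) -> Dict[str, Any]:
    """Return a Pod manifest that caps the sandbox container's CPU and memory."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name, "labels": {"app": "aio-sandbox"}},
        "spec": {
            "restartPolicy": "Never",
            "containers": [
                {
                    "name": "sandbox",
                    "image": image,
                    "ports": [{"containerPort": port}],
                    # Only limits are set; Kubernetes then uses the same
                    # values as the container's requests.
                    "resources": {
                        "limits": {"cpu": cpu_limit, "memory": memory_limit},
                    },
                }
            ],
        },
    }


# The image reference is a placeholder, not the project's published image.
manifest = sandbox_pod_manifest(
    name="agent-sandbox-1",
    image="registry.example.com/aio-sandbox:latest",
)
manifest_json = json.dumps(manifest, indent=2)
print(manifest_json)
```

Generating one manifest per agent session, each with its own limits, is one way to keep a high-density deployment from letting a single runaway task starve its neighbors.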

Technical Comparison: Traditional Docker vs. AIO Sandbox

| Feature | Traditional Docker Approach | AIO Sandbox Approach (Agent-Infra) |
| --- | --- | --- |
| Architecture | Typically multi-container (one for browser, one for code, one for shell). | Unified Container: Browser, Shell, Python, and IDEs (VSCode/Jupyter) in a single runtime. |
| Data Handling | Requires volume mounts or manual API “plumbing” to move data between containers. | Unified File System: Files are natively shared. Browser downloads are immediately visible to the shell/Python. |
| Agent Integration | Requires custom “glue code” to map LLM actions to container commands. | Native MCP Support: Pre-configured Model Context Protocol servers for standard agent discovery. |
| User Interface | CLI-based; Web UIs like VSCode or VNC require significant manual setup. | Built-in Visuals: Integrated VNC (for Chromium), VSCode Server, and Jupyter ready out-of-the-box. |
| Resource Control | Managed via standard Docker/K8s cgroups and resource limits. | Relies on underlying orchestrator (K8s/Docker) for resource throttling and limits. |
| Connectivity | Standard Docker bridge/host networking; manual proxy setup needed. | CDP-based Browser Control: Specialized browser interaction via Chrome DevTools Protocol. |
| Persistence | Containers are typically long-lived or reset manually; state management is custom. | Stateful Session Support: Supports persistent terminals and workspace state during the task lifecycle. |

Scaling the Agent Stack

While the core Sandbox is open-source (Apache-2.0), the platform is positioned as a scalable solution for teams building complex agentic workflows. By reducing the ‘Agent Ops’ overhead (the work required to maintain execution environments and handle dependency conflicts), the sandbox allows developers to focus on the agent’s logic rather than the underlying runtime.

As AI agents transition from simple chatbots to operators capable of interacting with the web and local files, the execution environment becomes a critical component of the stack. The Agent-Infra team is positioning the AIO Sandbox as a standardized, lightweight runtime for this transition.


Michal Sutter is a data science professional with a Master of Science in Data Science from the University of Padova. With a solid foundation in statistical analysis, machine learning, and data engineering, Michal excels at transforming complex datasets into actionable insights.
