Transformers use attention and Mixture-of-Experts to scale computation, but they still lack a native way to perform knowledge lookup. They re-compute the same local patterns again and again, which wastes depth and FLOPs. DeepSeek's new Engram module targets exactly this gap by adding a conditional memory axis that works alongside MoE rather than replacing it.

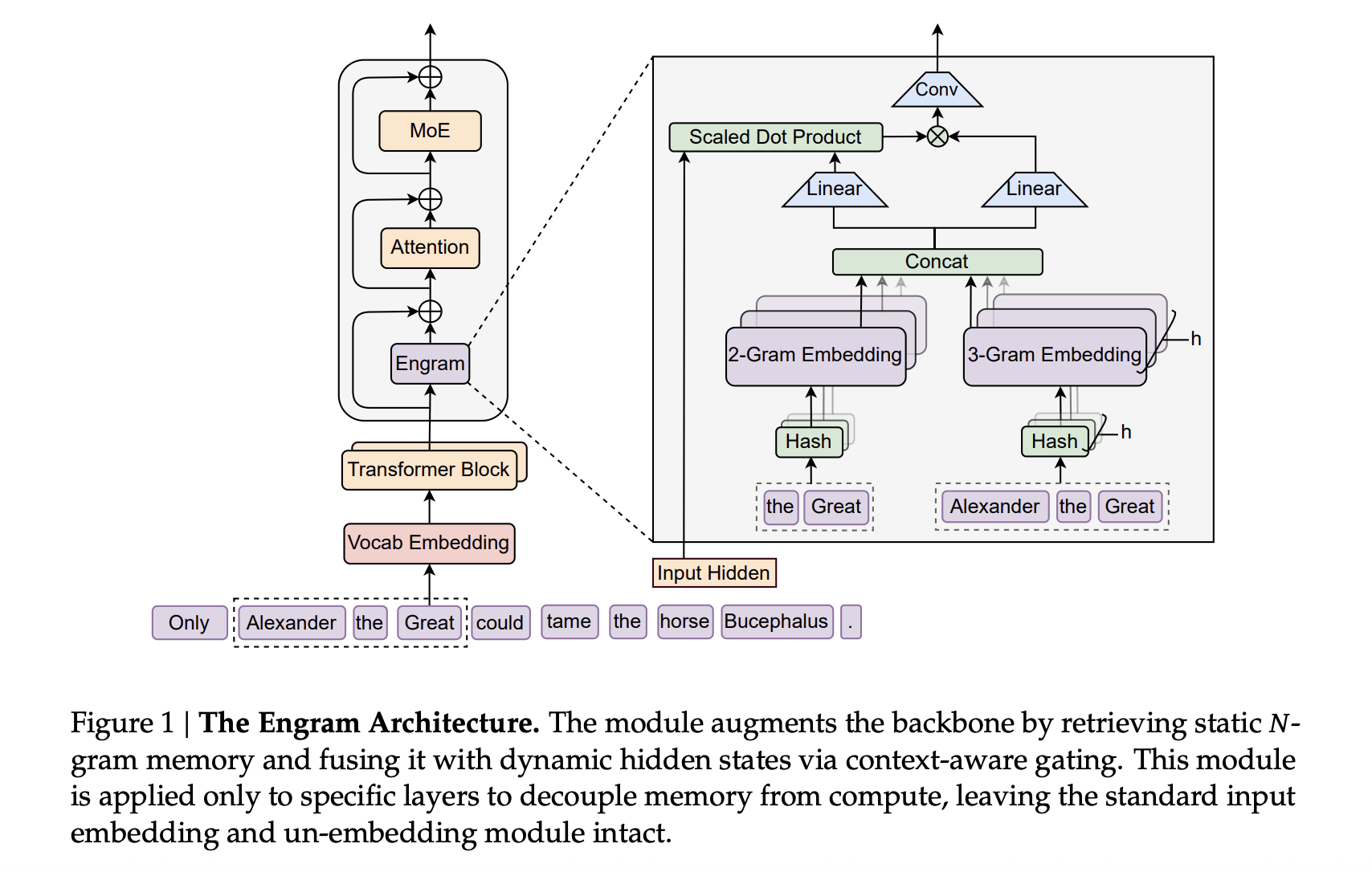

At a high level, Engram modernizes classic N-gram embeddings and turns them into a scalable, O(1) lookup memory that plugs directly into the Transformer backbone. The result is a parametric memory that stores static patterns such as common phrases and entities, while the backbone focuses on harder reasoning and long-range interactions.

How Engram Fits Into A DeepSeek Transformer

The proposed approach uses the DeepSeek V3 tokenizer with a 128k vocabulary and pre-trains on 262B tokens. The backbone is a 30-block Transformer with hidden size 2560. Each block uses Multi-head Latent Attention with 32 heads and connects to feed-forward networks through Manifold-Constrained Hyper-Connections with expansion rate 4. Optimization uses the Muon optimizer.

Engram attaches to this backbone as a sparse embedding module. It is built from hashed N-gram tables, with multi-head hashing into prime-sized buckets, a small depthwise convolution over the N-gram context, and a context-aware gating scalar in the range 0 to 1 that controls how much of the retrieved embedding is injected into each branch.
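The lookup path described above can be illustrated with a minimal sketch. It is not DeepSeek's implementation: the table sizes, hash multipliers, embedding width and the dot-product gate are all assumptions chosen for readability, and the depthwise convolution is left out entirely.

```python
import math
import random

# Hypothetical sizes for illustration only; not the values used in the paper.
BUCKETS_PER_HEAD = [1009, 1013]           # one prime-sized table per hash head
HASH_MULTIPLIERS = [1000003, 998244353]   # arbitrary multipliers, one per head
HEAD_DIM = 4                              # embedding width contributed per head

rng = random.Random(0)
TABLES = [
    [[rng.gauss(0.0, 0.02) for _ in range(HEAD_DIM)] for _ in range(size)]
    for size in BUCKETS_PER_HEAD
]


def ngram_bucket(ngram, head):
    """Map a tuple of token ids to a bucket index for one hash head."""
    h = 0
    for token_id in ngram:
        h = (h * HASH_MULTIPLIERS[head] + token_id + 1) % BUCKETS_PER_HEAD[head]
    return h


def lookup(tokens, position, n):
    """Concatenate the per-head embeddings of the n-gram ending at `position`."""
    if position + 1 < n:
        return [0.0] * (HEAD_DIM * len(TABLES))  # not enough left context yet
    ngram = tuple(tokens[position - n + 1 : position + 1])
    out = []
    for head, table in enumerate(TABLES):
        out.extend(table[ngram_bucket(ngram, head)])
    return out


def gate(hidden, retrieved):
    """Scalar in (0, 1): sigmoid of a scaled dot product (an assumed gate form)."""
    score = sum(h * r for h, r in zip(hidden, retrieved)) / math.sqrt(len(hidden))
    return 1.0 / (1.0 + math.exp(-score))


def inject(hidden, retrieved):
    """Add the gated memory vector to the hidden state."""
    g = gate(hidden, retrieved)
    return [h + g * r for h, r in zip(hidden, retrieved)]


tokens = [17, 42, 42, 7, 17, 42]
first = lookup(tokens, 1, 2)    # bigram (17, 42)
later = lookup(tokens, 5, 2)    # the same bigram later in the sequence
assert first == later           # identical n-grams hit identical slots
```

Each lookup costs a fixed number of hash evaluations and table reads regardless of table size, which is the O(1) property. Using several heads with different prime bucket counts means that two N-grams colliding in one table are unlikely to collide in all of them.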

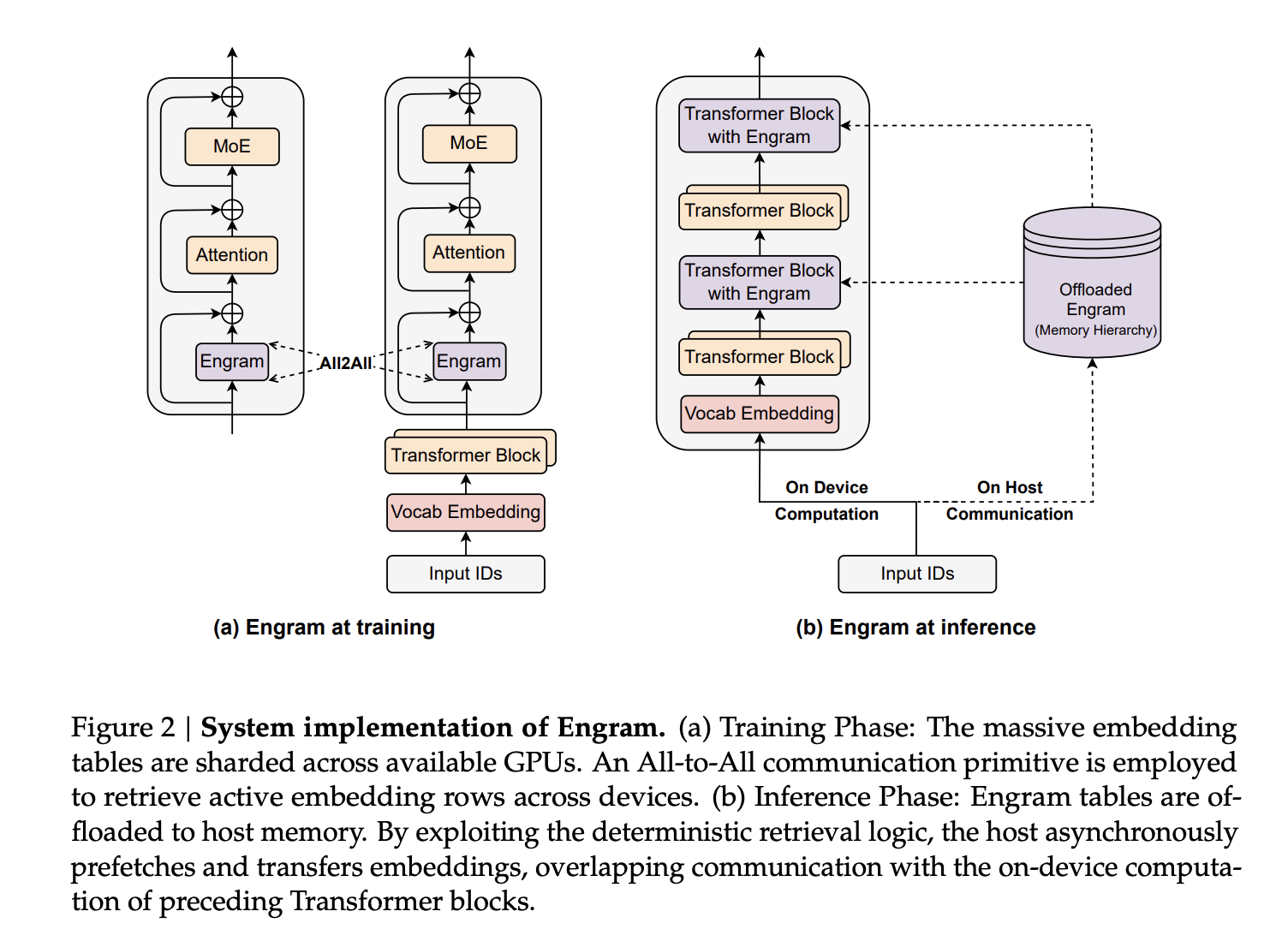

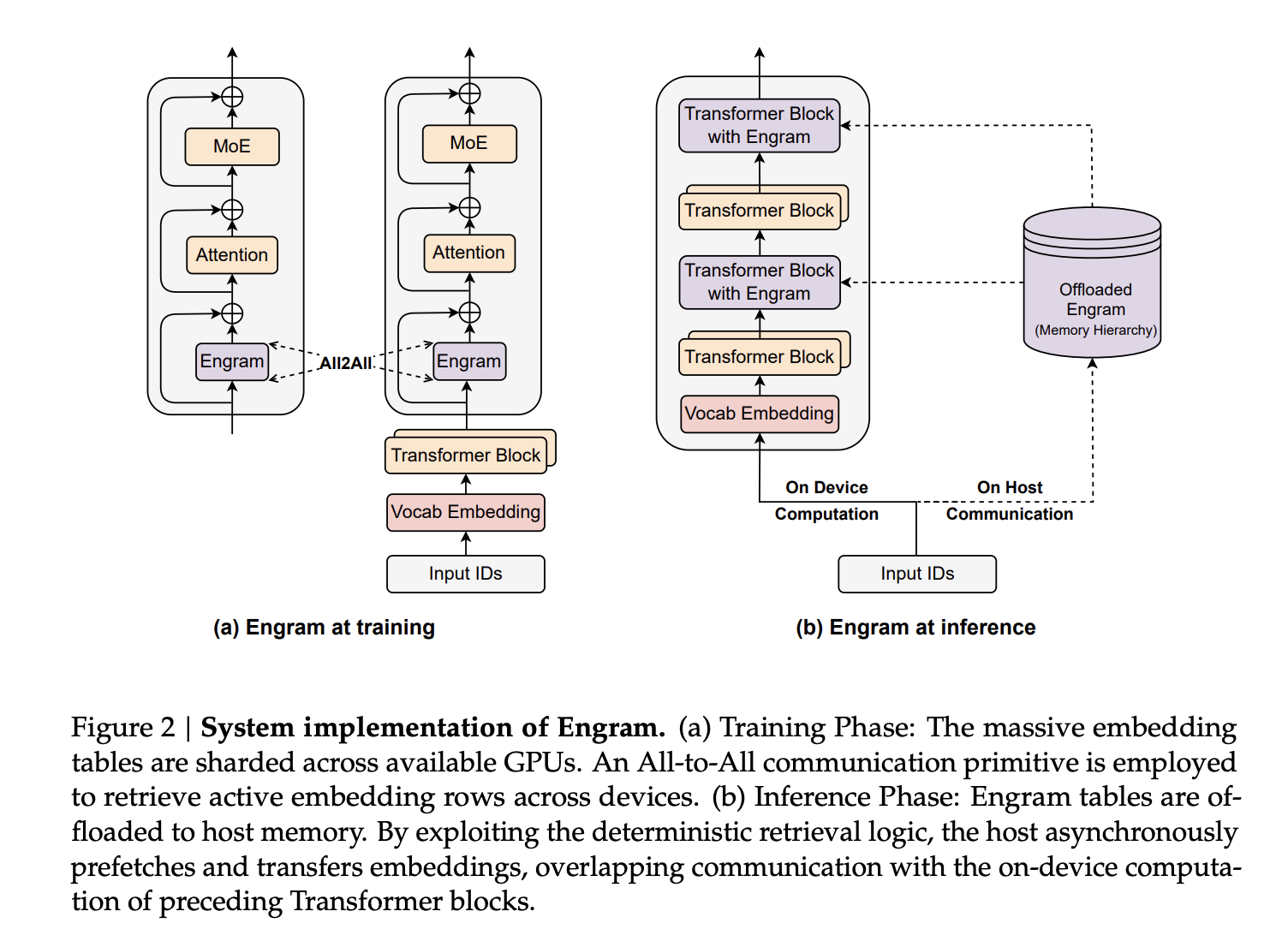

In the large-scale models, Engram-27B and Engram-40B share the same Transformer backbone as MoE-27B. MoE-27B replaces the dense feed-forward network with DeepSeekMoE, using 72 routed experts and 2 shared experts. Engram-27B reduces routed experts from 72 to 55 and reallocates those parameters into a 5.7B Engram memory while keeping total parameters at 26.7B. The Engram module uses N equal to {2,3}, 8 Engram heads, dimension 1280, and is inserted at layers 2 and 15. Engram-40B increases the Engram memory to 18.5B parameters while keeping activated parameters fixed.
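To make the trade concrete, the two 27B configurations can be written down side by side. This is a sketch: the field names are hypothetical, the values are the ones quoted in the article, and the shared-expert count for Engram-27B is assumed to be unchanged from MoE-27B.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SparseModelConfig:
    # Field names are hypothetical; values are those quoted in the article.
    name: str
    total_params_b: float       # total parameters, in billions
    routed_experts: int
    shared_experts: int         # assumed unchanged for Engram-27B
    engram_params_b: float      # Engram memory size in billions (0 for pure MoE)
    engram_layers: tuple = ()


MOE_27B = SparseModelConfig("MoE-27B", 26.7, 72, 2, 0.0)
ENGRAM_27B = SparseModelConfig("Engram-27B", 26.7, 55, 2, 5.7, (2, 15))

removed = MOE_27B.routed_experts - ENGRAM_27B.routed_experts
removed_fraction = removed / MOE_27B.routed_experts
print(removed, round(removed_fraction, 3))  # 17 0.236
```

Roughly a quarter of the routed experts are given up in exchange for the Engram table, with the total parameter count held constant.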

Sparsity Allocation, A Second Scaling Knob Beside MoE

The core design question is how to split the sparse parameter budget between routed experts and conditional memory. The research team formalizes this as the Sparsity Allocation problem, with the allocation ratio ρ defined as the fraction of inactive parameters assigned to MoE experts. A pure MoE model has ρ equal to 1. Reducing ρ reallocates parameters from experts into Engram slots.

On mid-scale 5.7B and 9.9B models, sweeping ρ gives a clear U-shaped curve of validation loss versus allocation ratio. Engram models match the pure MoE baseline even when ρ drops to about 0.25, which corresponds to roughly half as many routed experts. The optimum appears when around 20 to 25 percent of the sparse budget is given to Engram. This optimum is stable across both compute regimes, which suggests a robust split between conditional computation and conditional memory under fixed sparsity.
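As a rough worked example, ρ can be estimated for Engram-27B from the figures the article reports (26.7B total, 3.8B activated, 5.7B Engram memory). The sketch assumes that inactive parameters equal total minus activated parameters and that the whole Engram table counts as inactive; the paper's own accounting may differ.

```python
def allocation_ratio(total_b, activated_b, engram_b):
    """Return rho: the share of inactive parameters held by MoE experts.

    Assumes inactive = total - activated, and that the whole Engram table
    counts as inactive. Both are simplifications made for this sketch.
    """
    inactive_b = total_b - activated_b
    if inactive_b <= 0:
        raise ValueError("model has no inactive parameters")
    return (inactive_b - engram_b) / inactive_b


rho_moe = allocation_ratio(26.7, 3.8, 0.0)      # pure MoE baseline
rho_engram = allocation_ratio(26.7, 3.8, 5.7)   # Engram-27B figures
print(rho_moe, round(rho_engram, 3))  # 1.0 0.751
```

Under these assumptions about 25 percent of the inactive budget sits in Engram memory, which falls at the upper end of the 20 to 25 percent range where the sweep finds its optimum.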

The research team also studied an infinite memory regime on a fixed 3B MoE backbone trained for 100B tokens. They scale the Engram table from roughly 2.58e5 to 1e7 slots. Validation loss follows an almost perfect power law in log space, meaning that more conditional memory keeps paying off without additional compute. Engram also outperforms OverEncoding, another N-gram embedding method that averages into the vocabulary embedding, under the same memory budget.
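A power law in the number of slots appears as a straight line when both axes are logarithmic, so the exponent can be recovered with an ordinary least-squares fit. The sketch below uses synthetic points generated from a made-up law; they are not measurements from the paper, and only the slot range matches the article.

```python
import math


def fit_power_law(slots, losses):
    """Least-squares fit of log(loss) = intercept + slope * log(slots)."""
    xs = [math.log(s) for s in slots]
    ys = [math.log(v) for v in losses]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Synthetic points generated from loss = 3.0 * slots ** -0.05.
# These are NOT results from the paper; they only exercise the fit.
slots = [2.58e5, 1e6, 3e6, 1e7]
losses = [3.0 * s ** -0.05 for s in slots]

slope, intercept = fit_power_law(slots, losses)
print(round(slope, 4), round(math.exp(intercept), 4))  # -0.05 3.0
```

A negative slope with small residuals across the whole range is what "keeps paying off" means in practice: each multiplicative increase in slots buys a constant multiplicative reduction in loss.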

Large Scale Pre-Training Results

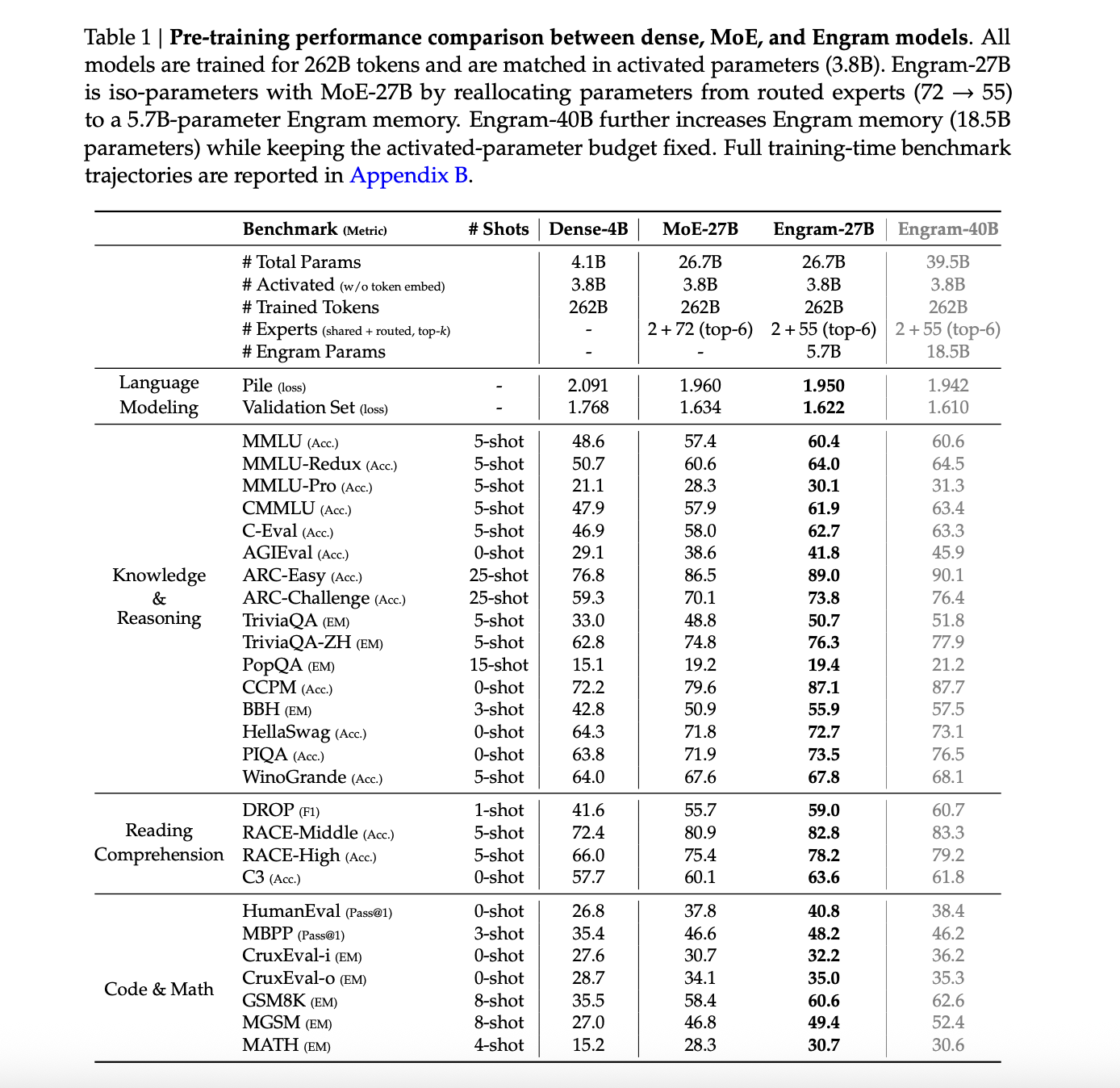

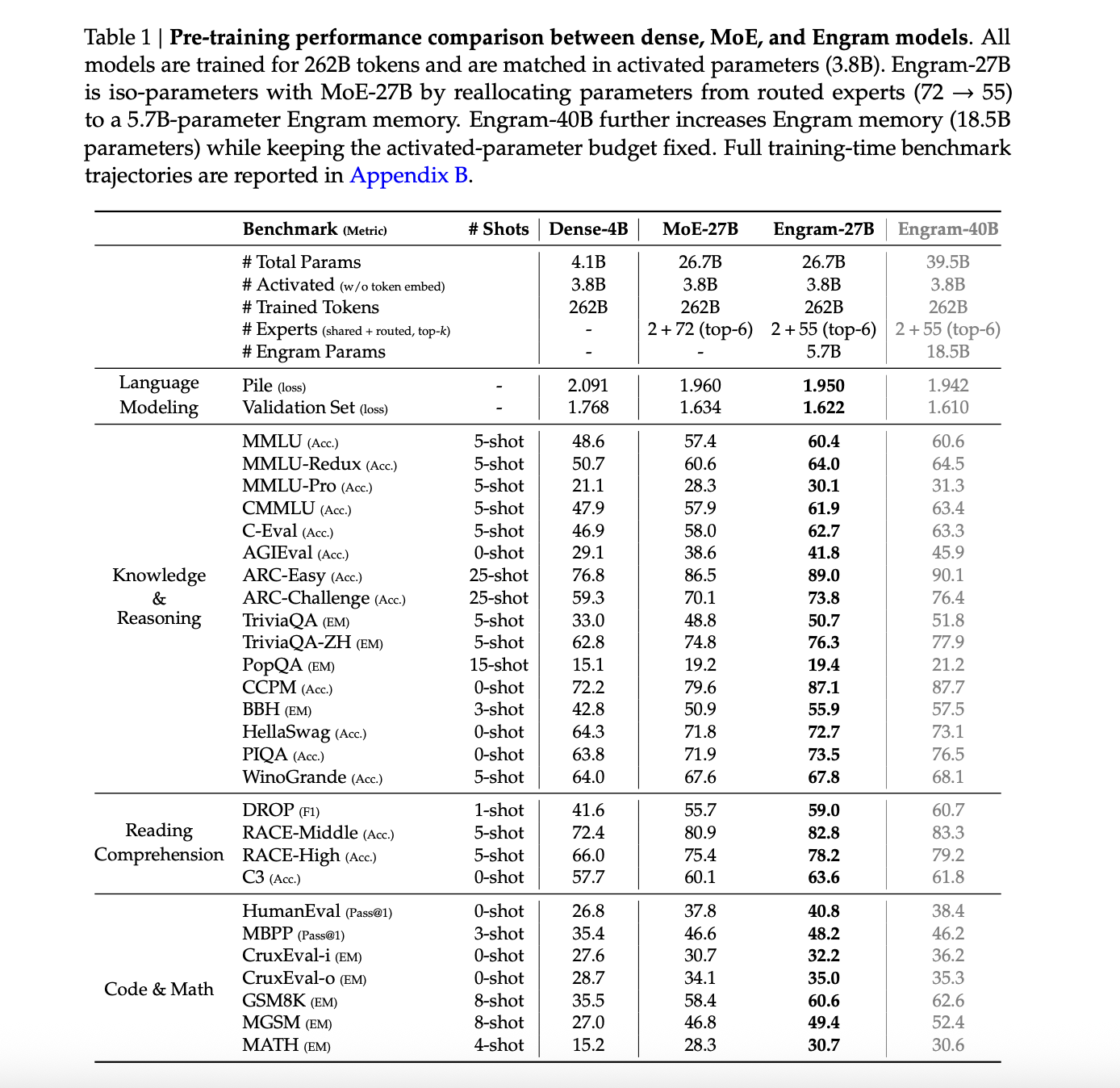

The main comparison involves four models trained on the same 262B token curriculum, with 3.8B activated parameters in all cases. These are Dense-4B with 4.1B total parameters, MoE-27B and Engram-27B at 26.7B total parameters, and Engram-40B at 39.5B total parameters.

On The Pile test set, language modeling loss is 2.091 for MoE-27B, 1.960 for Engram-27B, 1.950 for the Engram-27B variant and 1.942 for Engram-40B. The Dense-4B Pile loss is not reported. Validation loss on the internal held-out set drops from 1.768 for MoE-27B to 1.634 for Engram-27B and to 1.622 and 1.610 for the Engram variants.

Across knowledge and reasoning benchmarks, Engram-27B consistently improves over MoE-27B. MMLU increases from 57.4 to 60.4, CMMLU from 57.9 to 61.9 and C-Eval from 58.0 to 62.7. ARC-Challenge rises from 70.1 to 73.8, BBH from 50.9 to 55.9 and DROP F1 from 55.7 to 59.0. Code and math tasks also improve, for example HumanEval from 37.8 to 40.8 and GSM8K from 58.4 to 60.6.
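The size of each improvement is easier to compare once the pairs are turned into absolute gains. The short script below only does arithmetic on the scores quoted in the paragraph above.

```python
# (MoE-27B score, Engram-27B score) pairs quoted in the article.
SCORES = {
    "MMLU": (57.4, 60.4),
    "CMMLU": (57.9, 61.9),
    "C-Eval": (58.0, 62.7),
    "ARC-Challenge": (70.1, 73.8),
    "BBH": (50.9, 55.9),
    "DROP F1": (55.7, 59.0),
    "HumanEval": (37.8, 40.8),
    "GSM8K": (58.4, 60.6),
}

# Round to one decimal to hide floating-point noise in the subtraction.
gains = {name: round(engram - moe, 1) for name, (moe, engram) in SCORES.items()}
largest = max(gains, key=gains.get)

for name, gain in sorted(gains.items(), key=lambda kv: -kv[1]):
    print(f"{name:<14}+{gain}")
print("largest gain:", largest)
```

On these numbers the gains range from 2.2 points on GSM8K to 5.0 points on BBH, so the improvement is not confined to knowledge-heavy benchmarks.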

Engram-40B generally pushes these numbers further, although the authors note that it is likely under-trained at 262B tokens because its training loss continues to diverge from the baselines near the end of pre-training.

Long Context Behavior And Mechanistic Effects

After pre-training, the research team extends the context window to 32768 tokens using YaRN for 5000 steps, using 30B high-quality long-context tokens. They compare MoE-27B and Engram-27B at checkpoints corresponding to 41k, 46k and 50k pre-training steps.

On LongPPL and RULER at 32k context, Engram-27B matches or exceeds MoE-27B under three conditions. With about 82 percent of the pre-training FLOPs, Engram-27B at 41k steps matches LongPPL while improving RULER accuracy, for example Multi-Query NIAH 99.6 versus 73.0 and QA 44.0 versus 34.5. Under iso-loss at 46k and iso-FLOPs at 50k, Engram-27B improves both perplexity and all RULER categories, including VT and QA.
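The 82 percent figure is consistent with a simple step ratio. The sketch below assumes that the reference is the 50k-step checkpoint and that compute per step is constant and equal for both models, which is plausible given the matched 3.8B activated parameters but is not stated explicitly in the article.

```python
def compute_fraction(steps, baseline_steps=50_000):
    """Share of baseline pre-training compute, assuming constant cost per step."""
    return steps / baseline_steps


for steps in (41_000, 46_000, 50_000):
    print(f"{steps} steps -> {compute_fraction(steps):.0%} of baseline compute")
```

Under that assumption the three checkpoints correspond to 82, 92 and 100 percent of the baseline's pre-training compute.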

Mechanistic analysis uses LogitLens and Centered Kernel Alignment (CKA). Engram variants show lower layer-wise KL divergence between intermediate logits and the final prediction, especially in early blocks, which means representations become prediction-ready sooner. CKA similarity maps show that shallow Engram layers align best with much deeper MoE layers. For example, layer 5 in Engram-27B aligns with around layer 12 in the MoE baseline. Taken together, this supports the view that Engram effectively increases model depth by offloading static reconstruction to memory.
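Both diagnostics reduce to short formulas. The sketch below shows a per-token KL divergence of the kind a LogitLens analysis compares across layers, and linear CKA between two activation matrices whose rows are the same inputs. The direction of the KL (final prediction against intermediate layer) and the choice of the linear kernel are assumptions; the article does not specify either.

```python
import math


def log_softmax(logits):
    m = max(logits)
    log_z = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - log_z for x in logits]


def kl_divergence(final_logits, layer_logits):
    """KL(p_final || p_layer) for one token position (assumed direction)."""
    log_p = log_softmax(final_logits)
    log_q = log_softmax(layer_logits)
    return sum(math.exp(lp) * (lp - lq) for lp, lq in zip(log_p, log_q))


def center_columns(rows):
    n = len(rows)
    means = [sum(col) / n for col in zip(*rows)]
    return [[v - m for v, m in zip(row, means)] for row in rows]


def cross_gram_norm_sq(a, b):
    """Squared Frobenius norm of a^T b for row-major matrices a and b."""
    total = 0.0
    for col_a in zip(*a):
        for col_b in zip(*b):
            total += sum(x * y for x, y in zip(col_a, col_b)) ** 2
    return total


def linear_cka(x, y):
    """Linear CKA between two activation matrices with matching rows."""
    xc, yc = center_columns(x), center_columns(y)
    return cross_gram_norm_sq(xc, yc) / math.sqrt(
        cross_gram_norm_sq(xc, xc) * cross_gram_norm_sq(yc, yc)
    )


x = [[1.0, 2.0], [3.0, 1.0], [0.0, 4.0], [2.0, 2.0]]
y = [[2.0 * v for v in row] for row in x]
print(round(linear_cka(x, y), 6))                          # 1.0
print(kl_divergence([2.0, 0.5, -1.0], [2.0, 0.5, -1.0]))   # 0.0
```

Linear CKA is unchanged by rescaling either representation, which is what makes it usable for comparing layers from two different models. A lower KL at an early layer means the intermediate distribution is already close to the final prediction.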

Ablation studies on a 12-layer 3B MoE model with 0.56B activated parameters add a 1.6B Engram memory as a reference configuration, using N equal to {2,3} and inserting Engram at layers 2 and 6. Sweeping a single Engram layer across depth shows that early insertion at layer 2 is optimal. The component ablations highlight three key pieces: multi-branch integration, context-aware gating and tokenizer compression.

Sensitivity analysis shows that factual knowledge relies heavily on Engram, with TriviaQA dropping to about 29 percent of its original score when Engram outputs are suppressed at inference, while reading comprehension tasks retain around 81 to 93 percent of performance, for example C3 at 93 percent.

Key Takeaways

- Engram adds a conditional memory axis to sparse LLMs so that frequent N-gram patterns and entities are retrieved via O(1) hashed lookup, while the Transformer backbone and MoE experts handle dynamic reasoning and long-range dependencies.

- Under a fixed parameter and FLOPs budget, reallocating about 20 to 25 percent of the sparse capacity from MoE experts into Engram memory lowers validation loss, showing that conditional memory and conditional computation are complementary rather than competing.

- In large-scale pre-training on 262B tokens, Engram-27B and Engram-40B with the same 3.8B activated parameters outperform a MoE-27B baseline on language modeling, knowledge, reasoning, code and math benchmarks, while keeping the Transformer backbone architecture unchanged.

- Long-context extension to 32768 tokens using YaRN shows that Engram-27B matches or improves LongPPL and clearly improves RULER scores, especially Multi-Query Needle-in-a-Haystack and variable tracking, even when trained with lower or equal compute compared to MoE-27B.


Asif Razzaq is the CEO of Marktechpost Media Inc. As a visionary entrepreneur and engineer, Asif is committed to harnessing the potential of Artificial Intelligence for social good. His most recent endeavor is the launch of an Artificial Intelligence Media Platform, Marktechpost, which stands out for its in-depth coverage of machine learning and deep learning news that is both technically sound and easily understandable by a wide audience. The platform boasts over 2 million monthly views, illustrating its popularity among audiences.
