In the current landscape of generative AI, the ‘scaling laws’ have generally dictated that more parameters equal more intelligence. However, Liquid AI is challenging this convention with the release of LFM2.5-350M. The model is a technical case study in intelligence density, with extended pre-training (from 10T to 28T tokens) and large-scale reinforcement learning.

The significance of LFM2.5-350M lies in its architecture and training efficiency. While most AI companies have focused on frontier models, Liquid AI is targeting the ‘edge’ (devices with limited memory and compute) by showing that a 350-million-parameter model can outperform models more than twice its size on several evaluated benchmarks.

Architecture: The Hybrid LIV Backbone

The core technical differentiator of LFM2.5-350M is its departure from the pure Transformer architecture. It uses a hybrid structure built on Linear Input-Varying Systems (LIVs).

Traditional Transformers rely entirely on self-attention, which scales poorly with sequence length: attention compute grows quadratically with the context window, and the Key-Value (KV) cache grows with every token in every attention layer. Liquid AI addresses this by using a hybrid backbone consisting of:

- 10 Double-Gated LIV Convolution Blocks: These handle the majority of the sequence processing. LIVs function similarly to advanced Recurrent Neural Networks (RNNs) but are designed to be more parallelizable and stable during training. They maintain a constant-size state, reducing I/O overhead.

- 6 Grouped Query Attention (GQA) Blocks: By integrating a small number of attention blocks, the model retains high-precision retrieval and long-range context handling without the full memory overhead of a standard Transformer.
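To make the "constant-size state" idea concrete, here is a toy, single-channel sketch of a short causal convolution wrapped in two input-dependent gates. It is not Liquid AI's implementation: the sigmoid gates, the scalar weights `w_in` and `w_out`, and the three-tap kernel are all assumptions chosen for illustration. The point it shows is that a token-by-token version needs to remember only the last few gated inputs, regardless of how long the sequence is.

```python
import math
from collections import deque


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def gated_conv_batch(xs, kernel, w_in=1.0, w_out=1.0):
    """Process a whole sequence at once (toy, single channel)."""
    # Input gate: scale each token by a function of the token itself.
    gated = [sigmoid(w_in * x) * x for x in xs]
    out = []
    for t, x in enumerate(xs):
        # Short causal convolution over the gated inputs.
        acc = sum(kernel[k] * gated[t - k]
                  for k in range(len(kernel)) if t - k >= 0)
        # Output gate: again a function of the current token.
        out.append(sigmoid(w_out * x) * acc)
    return out


class GatedConvStream:
    """Same computation, one token at a time, with a fixed-size state."""

    def __init__(self, kernel, w_in=1.0, w_out=1.0):
        self.kernel = kernel
        self.w_in = w_in
        self.w_out = w_out
        # The state holds only the last len(kernel) gated inputs.
        self.state = deque([0.0] * len(kernel), maxlen=len(kernel))

    def step(self, x):
        self.state.appendleft(sigmoid(self.w_in * x) * x)
        acc = sum(k * s for k, s in zip(self.kernel, self.state))
        return sigmoid(self.w_out * x) * acc


# Hypothetical inputs, for illustration only.
xs = [0.5, -1.0, 2.0, 0.0, 1.5]
kernel = [0.5, 0.3, 0.2]

batch_out = gated_conv_batch(xs, kernel)
stream = GatedConvStream(kernel)
stream_out = [stream.step(x) for x in xs]
```

Both paths produce the same outputs, but the streaming version never stores more than `len(kernel)` values, which is the property that contrasts with a KV cache that grows with every token.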

This hybrid approach allows LFM2.5-350M to support a 32k context window (32,768 tokens) while maintaining an extremely lean memory footprint.
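A rough back-of-the-envelope sketch shows why using only 6 attention blocks matters at a 32k context. The layer counts (6 attention blocks out of 16 total) and the context length come from the article; the number of KV heads, the head dimension, and 16-bit cache precision are hypothetical values picked for illustration, and the all-attention baseline is an imagined model of the same depth, not a real one.

```python
def kv_cache_bytes(attn_layers, kv_heads, head_dim, seq_len, bytes_per_value=2):
    """Size of the KV cache for one sequence.

    The leading factor of 2 accounts for storing both keys and values.
    """
    return 2 * attn_layers * kv_heads * head_dim * seq_len * bytes_per_value


SEQ_LEN = 32_768   # context window from the article
KV_HEADS = 8       # assumption, not from the article
HEAD_DIM = 64      # assumption, not from the article

# Imagined baseline: all 16 blocks are attention blocks.
full_attention = kv_cache_bytes(16, KV_HEADS, HEAD_DIM, SEQ_LEN)
# Hybrid: only the 6 GQA blocks keep a KV cache.
hybrid = kv_cache_bytes(6, KV_HEADS, HEAD_DIM, SEQ_LEN)

MIB = 1024 * 1024
full_mib = full_attention / MIB    # 1024.0 under these assumptions
hybrid_mib = hybrid / MIB          # 384.0 under these assumptions
saving = 1 - hybrid / full_attention  # 0.625, independent of the assumed head sizes
```

The absolute figures depend entirely on the assumed head configuration and precision. Only the ratio follows from the layer counts: with 6 of 16 blocks holding a cache, the cache is 37.5% of the all-attention baseline.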

Performance and Intelligence Density

LFM2.5-350M was pre-trained on 28 trillion tokens, an extremely high number of training tokens per parameter. The goal is to use the model’s limited parameter count to its full potential, resulting in high ‘intelligence density.’
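The ratio can be checked with simple arithmetic. The sketch below uses only figures from the article (350M parameters, and pre-training budgets of 10T and 28T tokens); the function name is just for illustration.

```python
def tokens_per_parameter(train_tokens, params):
    """Training tokens seen per model parameter."""
    return train_tokens / params


PARAMS = 350_000_000                # 350M parameters
TOKENS_BEFORE = 10_000_000_000_000  # 10T tokens (earlier budget cited in the article)
TOKENS_NOW = 28_000_000_000_000     # 28T tokens

ratio_before = tokens_per_parameter(TOKENS_BEFORE, PARAMS)  # about 28,571
ratio_now = tokens_per_parameter(TOKENS_NOW, PARAMS)        # 80000.0
```

Going from 10T to 28T tokens raises the ratio from roughly 28,600:1 to 80,000:1.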

Benchmarks and Use Cases

LFM2.5-350M is a specialist model designed for high-speed, agentic tasks rather than general-purpose reasoning.

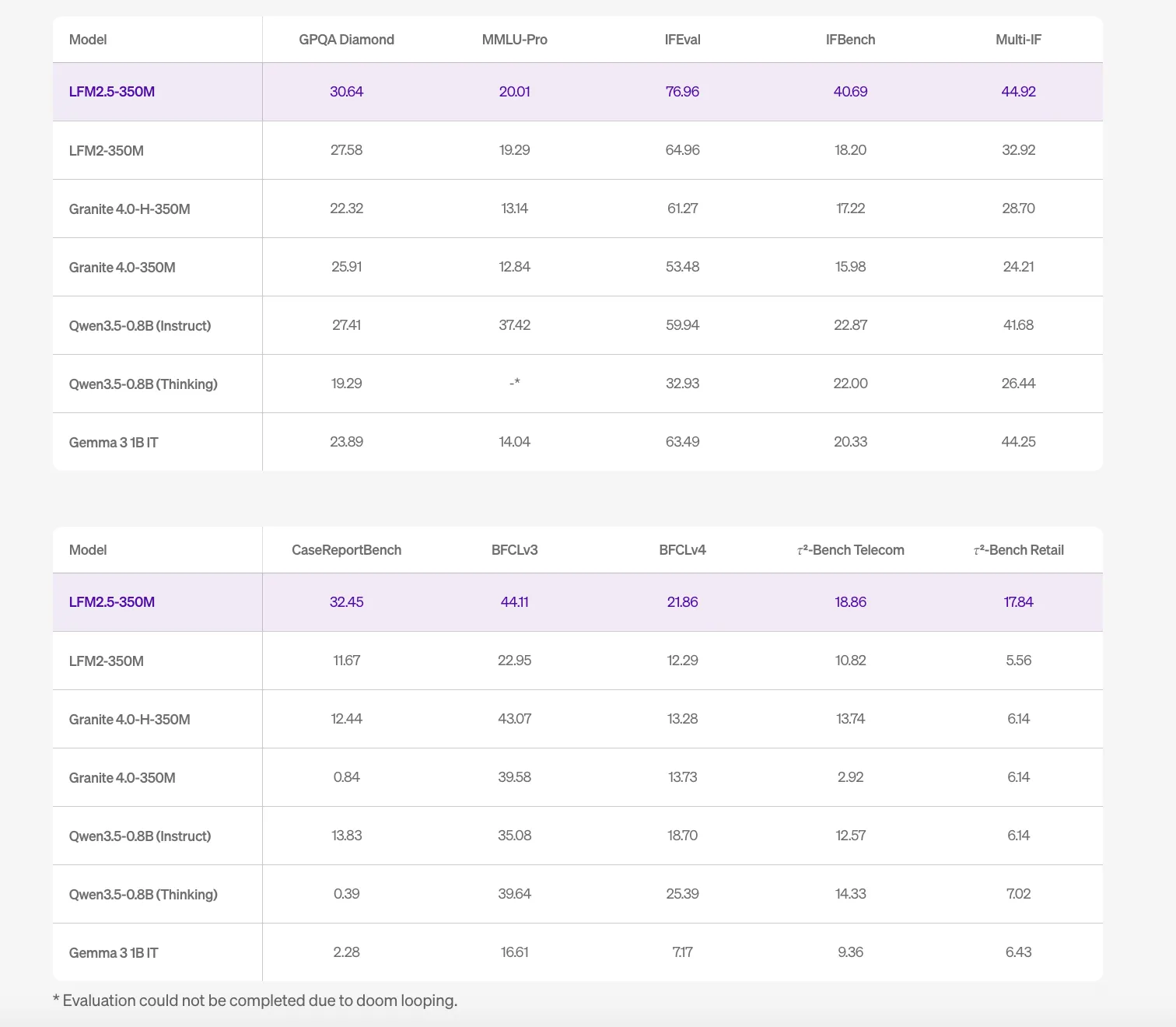

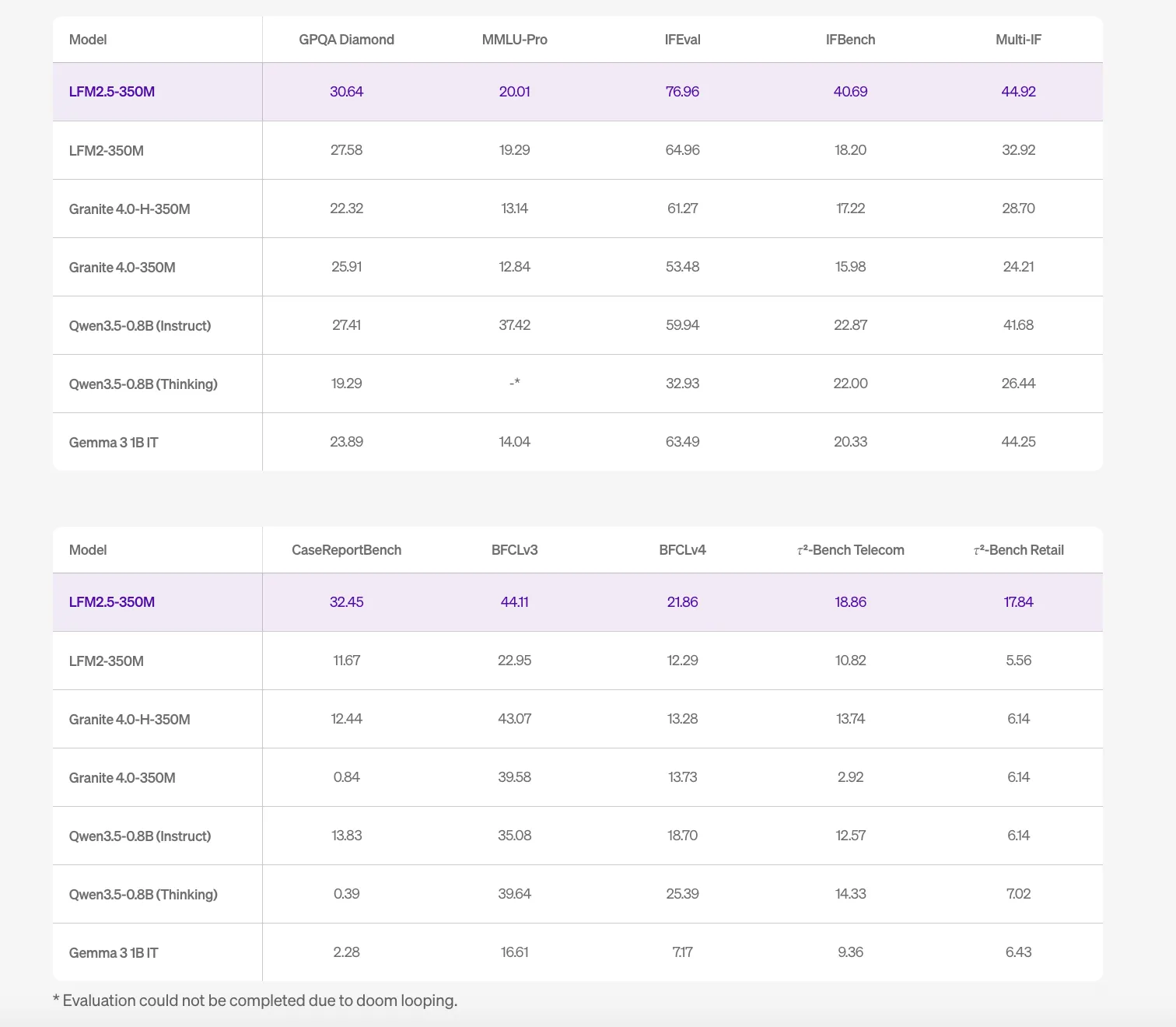

| Benchmark | Score |
| --- | --- |
| IFEval (Instruction Following) | 76.96 |
| GPQA Diamond | 30.64 |
| MMLU-Pro | 20.01 |

The high IFEval score indicates the model is effective at following complex, structured instructions, making it suitable for tool use, function calling, and structured data extraction (e.g., JSON). However, the documentation explicitly states that LFM2.5-350M is not recommended for mathematics, complex coding, or creative writing. For those tasks, the reasoning capabilities of larger models remain necessary.
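In a function-calling setup, the application usually validates whatever the model emits before acting on it. The following is a minimal sketch of that step using only Python's standard `json` module. The tool registry, the `get_weather` tool, and the `{"name": ..., "arguments": ...}` call shape are hypothetical and are not taken from Liquid AI's documentation.

```python
import json

# Hypothetical tool registry: tool name -> expected argument names and types.
TOOLS = {
    "get_weather": {"city": str, "unit": str},
}


def parse_tool_call(raw):
    """Return (name, arguments) if raw is a valid call to a known tool, else None."""
    try:
        call = json.loads(raw)
    except json.JSONDecodeError:
        return None  # the model did not emit valid JSON
    if not isinstance(call, dict):
        return None
    name = call.get("name")
    args = call.get("arguments")
    if not isinstance(name, str) or not isinstance(args, dict):
        return None
    spec = TOOLS.get(name)
    if spec is None:
        return None  # unknown tool
    if set(args) != set(spec):
        return None  # missing or unexpected arguments
    if not all(isinstance(args[key], typ) for key, typ in spec.items()):
        return None  # wrong argument type
    return name, args


# Example strings standing in for model output.
good = parse_tool_call('{"name": "get_weather", "arguments": {"city": "Oslo", "unit": "C"}}')
bad_json = parse_tool_call('{"name": "get_weather", "arguments": ')
bad_args = parse_tool_call('{"name": "get_weather", "arguments": {"city": 42, "unit": "C"}}')
```

Rejecting malformed calls and retrying is a common pattern with small models, since even a strong instruction follower will occasionally produce output that does not parse.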

Hardware Optimization and Inference Efficiency

A major hurdle for AI developers is the ‘memory wall’: the bottleneck created by moving data between the processor and memory. Because LFM2.5-350M uses LIVs and GQA, it drastically reduces KV cache size, boosting throughput. On a single NVIDIA H100 GPU, the model can reach a throughput of 40.4K output tokens per second at high concurrency.
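To put the throughput figure in practical terms, the sketch below estimates how long a batch extraction job might take. Only the 40.4K tokens-per-second figure comes from the article; the workload (one million documents, 50 output tokens each) is a made-up example, and the estimate assumes the job can sustain the reported high-concurrency throughput for its full duration.

```python
REPORTED_TOKENS_PER_SECOND = 40_400  # aggregate output throughput on one H100, per the article


def estimated_seconds(num_documents, output_tokens_per_document,
                      tokens_per_second=REPORTED_TOKENS_PER_SECOND):
    """Idealized wall-clock time to generate all outputs at a fixed aggregate rate."""
    total_tokens = num_documents * output_tokens_per_document
    return total_tokens / tokens_per_second


# Hypothetical workload: 1,000,000 documents, 50 output tokens each.
seconds = estimated_seconds(1_000_000, 50)
minutes = seconds / 60  # a little over 20 minutes
```

This is aggregate throughput across many concurrent requests, not the generation speed a single request would see.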

The Liquid AI team reports device-specific low-memory inference results that make local deployment viable:

- Snapdragon 8 Elite NPU: 169MB peak memory using RunAnywhere Q4.

- Snapdragon GPU: 81MB peak memory using RunAnywhere Q4.

- Raspberry Pi 5: 300MB using Cactus Engine int8.

Key Takeaways

- High Intelligence Density: By training a 350M-parameter model on 28 trillion tokens, the Liquid AI team achieved a very high 80,000:1 token-to-parameter ratio, allowing it to outperform models more than twice its size on several benchmarks.

- Hybrid LIV Architecture: The model departs from pure Transformers by using Linear Input-Varying Systems (LIVs) combined with a small number of Grouped Query Attention (GQA) blocks, significantly reducing the memory overhead of the KV cache.

- Edge-First Efficiency: It is designed for local deployment with a 32k context window and a remarkably low memory footprint, reaching as little as 81MB on mobile GPUs and 169MB on NPUs via specialized inference engines.

- Specialized Agentic Capability: The model is highly optimized for instruction following (IFEval: 76.96) and tool use, though it is explicitly not recommended for complex coding, mathematics, or creative writing.

- Massive Throughput: The architectural efficiency enables high-speed use, processing up to 40.4K output tokens per second on a single H100, making it well suited to high-volume data extraction and real-time classification.
