While the tech world obsesses over the latest Llama checkpoints, a much grittier battle is being fought in the basements of data centers. As AI models scale to trillions of parameters, the clusters required to train them have become some of the most complex, and most fragile, machines on the planet.

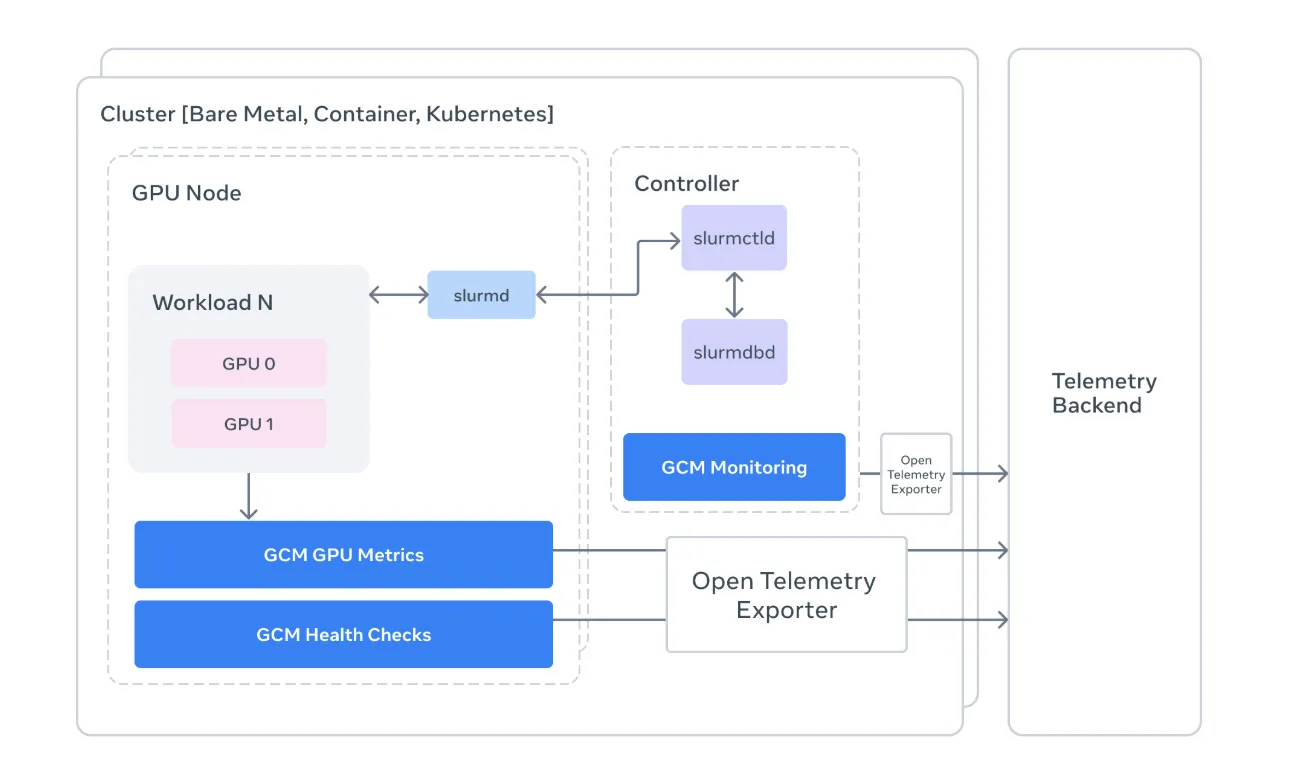

The Meta AI Research team just released GCM (GPU Cluster Monitoring), a specialized toolkit designed to solve the ‘silent killer’ of AI progress: hardware instability at scale. GCM is a blueprint for how to manage the hardware-to-software handshake in High-Performance Computing (HPC).

The Problem: When ‘Standard’ Observability Isn’t Enough

In traditional web development, if a microservice lags, you check your dashboard and scale horizontally. In AI training, the rules are different. A single GPU in a 4,096-card cluster can experience a ‘silent failure’, where it technically stays ‘up’ but its performance degrades, effectively poisoning the gradients for the entire training run.

Standard monitoring tools are often too high-level to catch these nuances. Meta’s GCM acts as a specialized bridge, connecting the raw hardware telemetry of NVIDIA GPUs with the orchestration logic of the cluster.

1. Monitoring the ‘Slurm’ Way

For devs, Slurm is the ubiquitous (if occasionally frustrating) workload manager. GCM integrates directly with Slurm to provide context-aware monitoring.

- Job-Level Attribution: Instead of seeing a generic spike in power consumption, GCM allows you to attribute metrics to specific Job IDs.

- State Monitoring: It pulls data from `sacct`, `sinfo`, and `squeue` to create a real-time map of cluster health. If a node is marked as `DRAIN`, GCM helps you understand why before it ruins a researcher’s weekend.
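To make the state-monitoring idea concrete, here is a minimal sketch of turning `sinfo` output into a map of draining nodes and their reasons. It is not GCM's code: the function names, the pipe-delimited format string, and the sample data are all assumptions chosen for illustration.

```python
import subprocess

# Assumed invocation: one line per node, no header, "name|state|reason".
SINFO_CMD = ["sinfo", "-N", "-h", "-o", "%N|%T|%E"]

DRAIN_STATES = {"drained", "draining"}

# Made-up sample output, so the parser can be tried without a cluster.
SAMPLE = """node001|idle|none
node002|drained*|GPU 3 XID 79
node003|allocated|none
"""


def parse_sinfo(text):
    """Return {node: (state, reason)} from pipe-delimited sinfo output."""
    nodes = {}
    for line in text.splitlines():
        parts = line.strip().split("|", 2)
        if len(parts) != 3:
            continue  # skip blank or unexpected lines
        name, state, reason = parts
        # sinfo may append flag characters such as "*" or "~" to the state.
        state = state.rstrip("*~#!%$@^-+").lower()
        nodes[name] = (state, reason.strip())
    return nodes


def drained_nodes(nodes):
    """Keep only nodes that are draining or drained, with their reasons."""
    return {n: reason for n, (state, reason) in nodes.items() if state in DRAIN_STATES}


def collect():
    """Run sinfo on a real cluster (requires Slurm client tools on PATH)."""
    out = subprocess.run(SINFO_CMD, capture_output=True, text=True, check=True)
    return parse_sinfo(out.stdout)
```

Calling `drained_nodes(parse_sinfo(SAMPLE))` isolates the one drained node together with the reason an administrator or health check recorded for it. On a live cluster, `collect()` would replace the sample text.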

2. The ‘Prolog’ and ‘Epilog’ Strategy

One of the most technically vital parts of the GCM framework is its suite of Health Checks. In an HPC environment, timing is everything. GCM uses two critical windows:

- Prolog: These are scripts run before a job starts. GCM checks if the InfiniBand network is healthy and if the GPUs are actually reachable. If a node fails a pre-check, the job is diverted, saving hours of ‘dead’ compute time.

- Epilog: These run after a job completes. GCM uses this window to run deep diagnostics using NVIDIA’s DCGM (Data Center GPU Manager) to ensure the hardware wasn’t damaged during the heavy lifting.
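The prolog idea can be sketched as a small script that runs a list of checks and exits non-zero when any fail. This is an illustration, not GCM's health-check suite: `EXPECTED_GPUS`, `parse_gpu_list`, and `run_checks` are hypothetical names, and the only real command used is `nvidia-smi -L`, which lists one line per GPU.

```python
import subprocess
import sys

EXPECTED_GPUS = 8  # assumption: GPUs per node; adjust for your hardware


def parse_gpu_list(stdout):
    """Count device lines ("GPU 0: ...") in `nvidia-smi -L` output."""
    return sum(1 for line in stdout.splitlines() if line.startswith("GPU "))


def count_gpus():
    """Ask the driver how many GPUs are visible; 0 if the query fails."""
    try:
        out = subprocess.run(
            ["nvidia-smi", "-L"], capture_output=True, text=True, timeout=30
        )
    except (OSError, subprocess.TimeoutExpired):
        return 0  # driver tools missing or hung: treat as no usable GPUs
    return parse_gpu_list(out.stdout) if out.returncode == 0 else 0


def run_checks(checks):
    """Run (name, callable) checks; return the names of those that failed."""
    failed = []
    for name, check in checks:
        try:
            ok = check()
        except Exception:
            ok = False  # a crashing check counts as a failure
        if not ok:
            failed.append(name)
    return failed


def main():
    """Return 0 when the node looks healthy, 1 otherwise."""
    failed = run_checks([("gpu_count", lambda: count_gpus() == EXPECTED_GPUS)])
    if failed:
        print(f"health check failed: {', '.join(failed)}", file=sys.stderr)
        return 1  # a non-zero exit tells Slurm the prolog failed
    return 0
```

A prolog script built on this would end with `sys.exit(main())`. In a default Slurm configuration, a failing prolog typically drains the node and requeues the job, which is the ‘diverted’ behavior described above. An epilog variant could reuse `run_checks` with longer-running diagnostics.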

3. Telemetry and the OTLP Bridge

For devs and AI researchers who need to justify their compute budgets, GCM’s Telemetry Processor is the star of the show. It converts raw cluster data into the OpenTelemetry Protocol (OTLP) format.

By standardizing telemetry, GCM allows teams to pipe hardware-specific data (like GPU temperature, NVLink errors, and XID events) into modern observability stacks. This means you can finally correlate a dip in training throughput with a specific hardware throttling event, moving from ‘the model is slow’ to ‘GPU 3 on Node 50 is overheating.’
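As a rough picture of what ‘standardizing’ means here, the sketch below wraps one GPU temperature reading in a dictionary shaped like an OTLP/JSON gauge metric. It does not use GCM or the OpenTelemetry SDK. The metric name `gpu.temperature`, the attribute keys `gpu.index` and `slurm.job_id`, and the scope name are assumptions made for illustration.

```python
import time


def _attrs(mapping):
    """Encode a flat dict as an OTLP-style key/value attribute list."""
    return [{"key": k, "value": {"stringValue": str(v)}} for k, v in mapping.items()]


def gpu_temperature_payload(node, gpu_index, job_id, celsius, ts_ns=None):
    """Wrap one GPU temperature reading as an OTLP/JSON-shaped gauge."""
    ts_ns = time.time_ns() if ts_ns is None else ts_ns
    point = {
        "asDouble": float(celsius),
        "timeUnixNano": str(ts_ns),  # 64-bit integers are strings in OTLP/JSON
        # Hypothetical attribute keys tying the reading to a GPU and a job.
        "attributes": _attrs({"gpu.index": gpu_index, "slurm.job_id": job_id}),
    }
    metric = {
        "name": "gpu.temperature",  # hypothetical metric name
        "unit": "Cel",
        "gauge": {"dataPoints": [point]},
    }
    return {
        "resourceMetrics": [{
            "resource": {"attributes": _attrs({"host.name": node})},
            "scopeMetrics": [{"scope": {"name": "example.gpu"}, "metrics": [metric]}],
        }]
    }
```

A call such as `gpu_temperature_payload("node050", 3, 12345, 91)` yields a structure that `json.dumps` can serialize. Because the node, GPU index, and job ID travel with the data point, a backend can answer ‘which GPU on which node, during which job’ rather than only ‘something is slow’.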

Under the Hood: The Tech Stack

Meta’s implementation is a masterclass in pragmatic engineering. The repository is primarily Python (94%), making it highly extensible for AI devs, with performance-critical logic handled in Go.

- Collectors: Modular components that gather telemetry from sources like `nvidia-smi` and the Slurm API.

- Sinks: The ‘output’ layer. GCM supports multiple sinks, including `stdout` for local debugging and OTLP for production-grade monitoring.

- DCGM & NVML: GCM leverages the NVIDIA Management Library (NVML) to talk directly to the hardware, bypassing high-level abstractions that might hide errors.
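The collector-and-sink split described above can be pictured with a few lines of Python. This is a generic sketch of the pattern, not GCM's actual interfaces: every name below (`Sample`, `fake_gpu_collector`, `stdout_sink`, `run_pipeline`) is hypothetical, and the collector returns canned values instead of querying hardware.

```python
import json
import sys
from typing import Callable, Dict, Iterable, List

Sample = Dict[str, object]
Collector = Callable[[], Iterable[Sample]]
Sink = Callable[[Sample], None]


def fake_gpu_collector() -> Iterable[Sample]:
    """Stand-in collector; a real one might wrap nvidia-smi or NVML."""
    yield {"metric": "gpu.temperature", "gpu": 0, "value": 61.0}
    yield {"metric": "gpu.temperature", "gpu": 1, "value": 88.0}


def stdout_sink(sample: Sample) -> None:
    """Print each sample as one JSON line, handy for local debugging."""
    sys.stdout.write(json.dumps(sample) + "\n")


def run_pipeline(collectors: List[Collector], sinks: List[Sink]) -> int:
    """Send every sample from every collector to every sink; return the count."""
    count = 0
    for collect in collectors:
        for sample in collect():
            for sink in sinks:
                sink(sample)
            count += 1
    return count
```

Running `run_pipeline([fake_gpu_collector], [stdout_sink])` prints two JSON lines. The point of the design is that adding a new data source or a new export target means writing one more function and appending it to a list, without touching the others.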

Key Takeaways

- Bridging the ‘Silent Failure’ Gap: GCM solves a critical AI infrastructure problem: identifying ‘zombie’ GPUs that appear online but cause training runs to crash or produce corrupted gradients due to hardware instability.

- Deep Slurm Integration: Unlike general cloud monitoring, GCM is purpose-built for High-Performance Computing (HPC). It anchors hardware metrics to specific Slurm Job IDs, allowing engineers to attribute performance dips or power spikes to specific models and users.

- Automated Health ‘Prolog’ and ‘Epilog’: The framework uses a proactive diagnostic strategy, running specialized health checks via NVIDIA DCGM before a job starts (Prolog) and after it ends (Epilog) to ensure faulty nodes are drained before they waste expensive compute time.

- Standardized Telemetry via OTLP: GCM converts low-level hardware data (temperature, NVLink errors, XID events) into the OpenTelemetry Protocol (OTLP) format. This allows teams to pipe complex cluster data into modern observability stacks like Prometheus or Grafana for real-time visualization.

- Modular, Language-Agnostic Design: While the core logic is written in Python for accessibility, GCM uses Go for performance-critical sections. Its ‘Collector-and-Sink’ architecture allows developers to easily plug in new data sources or export metrics to custom backend systems.

