How do you keep synthetic data fresh and diverse for modern AI models without turning a single orchestration pipeline into the bottleneck? Meta AI researchers introduce Matrix, a decentralized framework where both control flow and data flow are serialized into messages that move through distributed queues. As LLM training increasingly relies on synthetic conversations, tool traces and reasoning chains, most existing systems still depend on a central controller or domain-specific setups, which wastes GPU capacity, adds coordination overhead and limits data diversity. Matrix instead uses peer-to-peer agent scheduling on a Ray cluster and delivers 2 to 15 times higher token throughput on real workloads while maintaining comparable quality.

From Centralized Controllers to Peer-to-Peer Agents

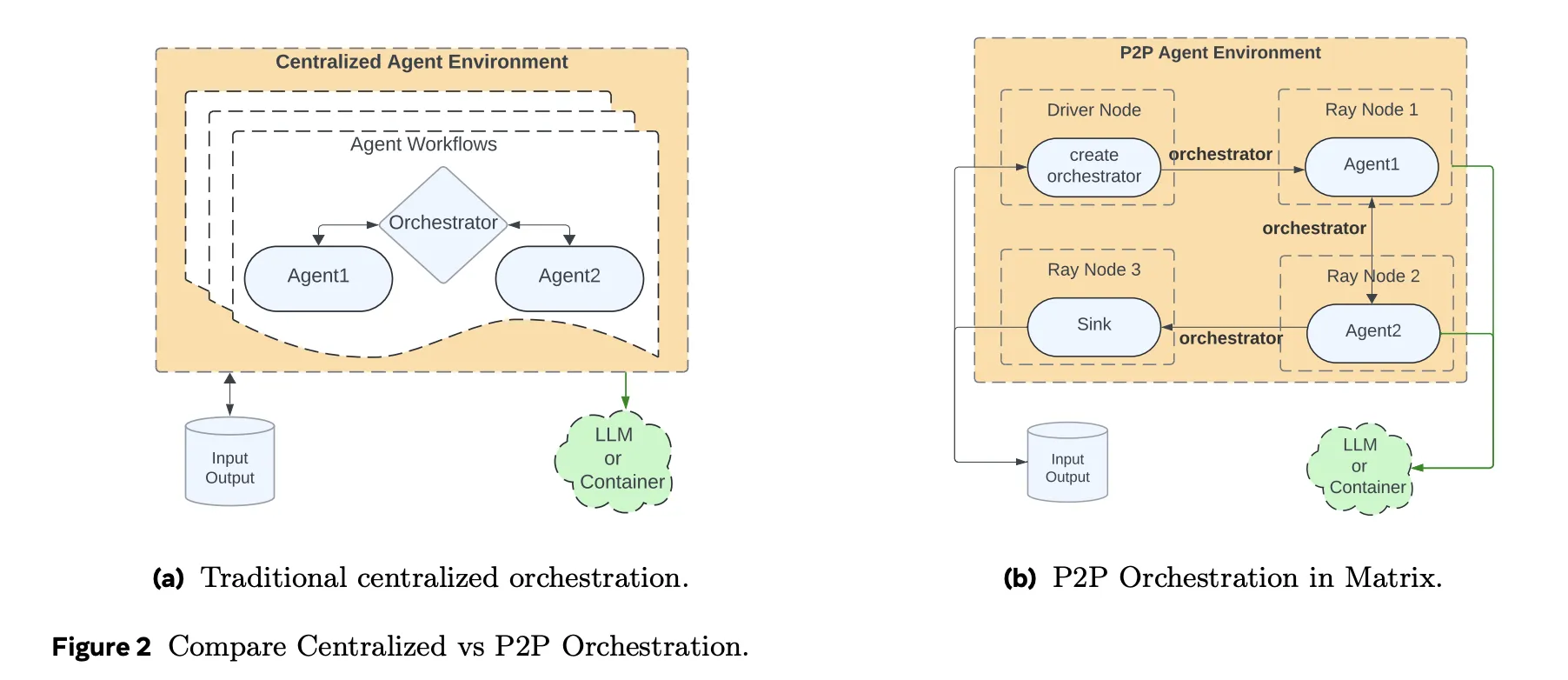

Traditional agent frameworks keep workflow state and control logic inside a central orchestrator. Every agent call, tool call and retry goes through that controller. This model is easy to reason about, but it does not scale well when you need tens of thousands of concurrent synthetic dialogues or tool trajectories.

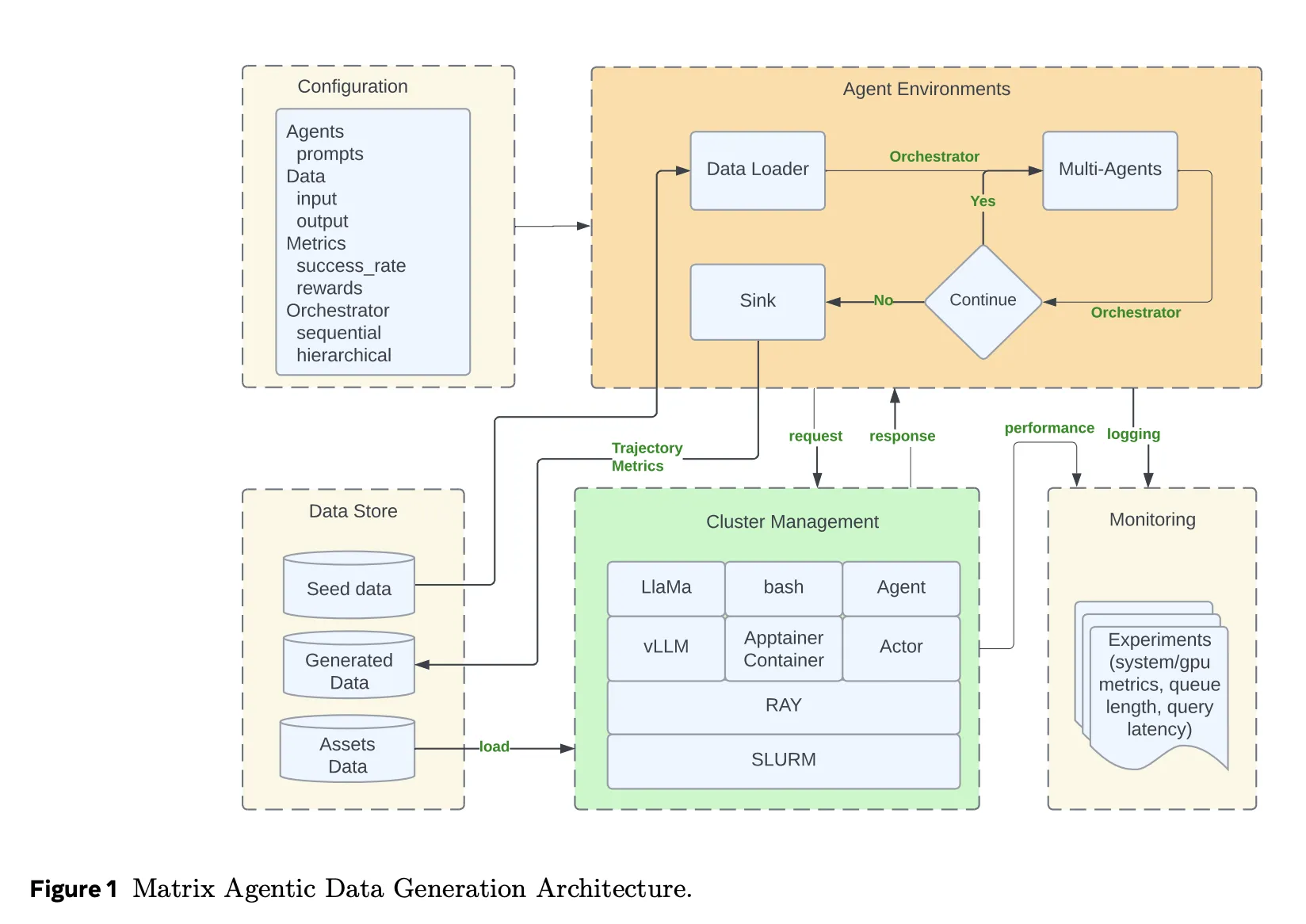

Matrix takes a different approach. It serializes both control flow and data flow into a message object called an orchestrator. The orchestrator holds the task state, including conversation history, intermediate results and routing logic. Stateless agents, implemented as Ray actors, pull an orchestrator from a distributed queue, apply their role-specific logic, update the state and then send it directly to the next agent selected by the orchestrator. There is no central scheduler in the inner loop. Each task advances independently at row level, rather than waiting for batch-level barriers as in Spark or Ray Data.

This design reduces idle time when different trajectories have very different lengths. It also makes fault handling local to a task. If one orchestrator fails, it does not stall a batch.
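The message-driven loop described above can be sketched in plain Python. This is a minimal illustration, not Matrix's actual API: it uses `asyncio` queues in one process instead of Ray actors and distributed queues, and the `Orchestrator` fields, the agent names and the `next_agent` method are all hypothetical.

```python
import asyncio
from dataclasses import dataclass, field


@dataclass
class Orchestrator:
    """Message carrying task state and routing logic (hypothetical layout)."""
    task_id: int
    max_turns: int
    history: list = field(default_factory=list)

    def next_agent(self):
        # Routing lives in the message: alternate speakers, then finish.
        if len(self.history) >= self.max_turns:
            return "sink"
        return "agent_a" if len(self.history) % 2 == 0 else "agent_b"


async def agent(name, queues):
    """Stateless worker: pull a message, update it, forward it to the next peer."""
    while True:
        orch = await queues[name].get()
        orch.history.append(f"{name}: turn {len(orch.history)}")
        await queues[orch.next_agent()].put(orch)


async def run(tasks):
    names = ("agent_a", "agent_b")
    queues = {n: asyncio.Queue() for n in names + ("sink",)}
    workers = [asyncio.create_task(agent(n, queues)) for n in names]
    for orch in tasks:
        await queues[orch.next_agent()].put(orch)
    # Each task reaches the sink on its own schedule; no batch barrier.
    done = [await queues["sink"].get() for _ in tasks]
    for w in workers:
        w.cancel()
    await asyncio.gather(*workers, return_exceptions=True)
    return sorted(done, key=lambda o: o.task_id)


def simulate():
    # Three conversations of different lengths advance independently.
    tasks = [Orchestrator(task_id=i, max_turns=t) for i, t in enumerate([2, 5, 3])]
    return asyncio.run(run(tasks))


if __name__ == "__main__":
    for orch in simulate():
        print(orch.task_id, len(orch.history))
```

No component in this sketch tracks all tasks at once. Each agent only sees the message it just pulled, and the message itself decides where it goes next.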

System Stack and Services

Matrix runs on a Ray cluster that is usually launched on SLURM. Ray provides distributed actors and queues. Ray Serve exposes LLM endpoints behind vLLM and SGLang, and can also route to external APIs such as Azure OpenAI or Gemini through proxy servers.

Tool calls and other complex services run inside Apptainer containers. This isolates the agent runtime from code execution sandboxes, HTTP tools or custom evaluators. Hydra manages configuration for agent roles, orchestrator types, resource allocations and I/O schemas. Grafana integrates with Ray metrics to track queue length, pending tasks, token throughput and GPU utilization in real time.

Matrix also introduces message offloading. When conversation history grows beyond a size threshold, large payloads are stored in Ray’s object store and only object identifiers are kept in the orchestrator. This reduces cluster bandwidth while still allowing agents to reconstruct prompts when needed.
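The offloading idea can be illustrated with a small sketch. It is an assumption-laden stand-in rather than Matrix's implementation: a plain dictionary plays the role of the object store, and the threshold value, the `history_ref` field and both function names are hypothetical. On a real Ray cluster the analogous operations would be `ray.put` and `ray.get`.

```python
import json
import uuid

OBJECT_STORE = {}          # stand-in for a shared object store (assumption)
OFFLOAD_THRESHOLD = 1024   # bytes; hypothetical value


def offload_history(message, threshold=OFFLOAD_THRESHOLD):
    """Replace a large history with a reference; leave small ones inline."""
    payload = json.dumps(message["history"]).encode("utf-8")
    if len(payload) <= threshold:
        return message
    ref = uuid.uuid4().hex
    OBJECT_STORE[ref] = payload
    # Copy every field except the bulky history, then attach the reference.
    slim = {k: v for k, v in message.items() if k != "history"}
    slim["history_ref"] = ref
    return slim


def load_history(message):
    """Return the full history whether it is inline or offloaded."""
    if "history_ref" in message:
        return json.loads(OBJECT_STORE[message["history_ref"]].decode("utf-8"))
    return message["history"]
```

An agent that needs the full prompt calls `load_history`. Agents that only route the message never touch the payload, so only the short reference travels through the queues.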

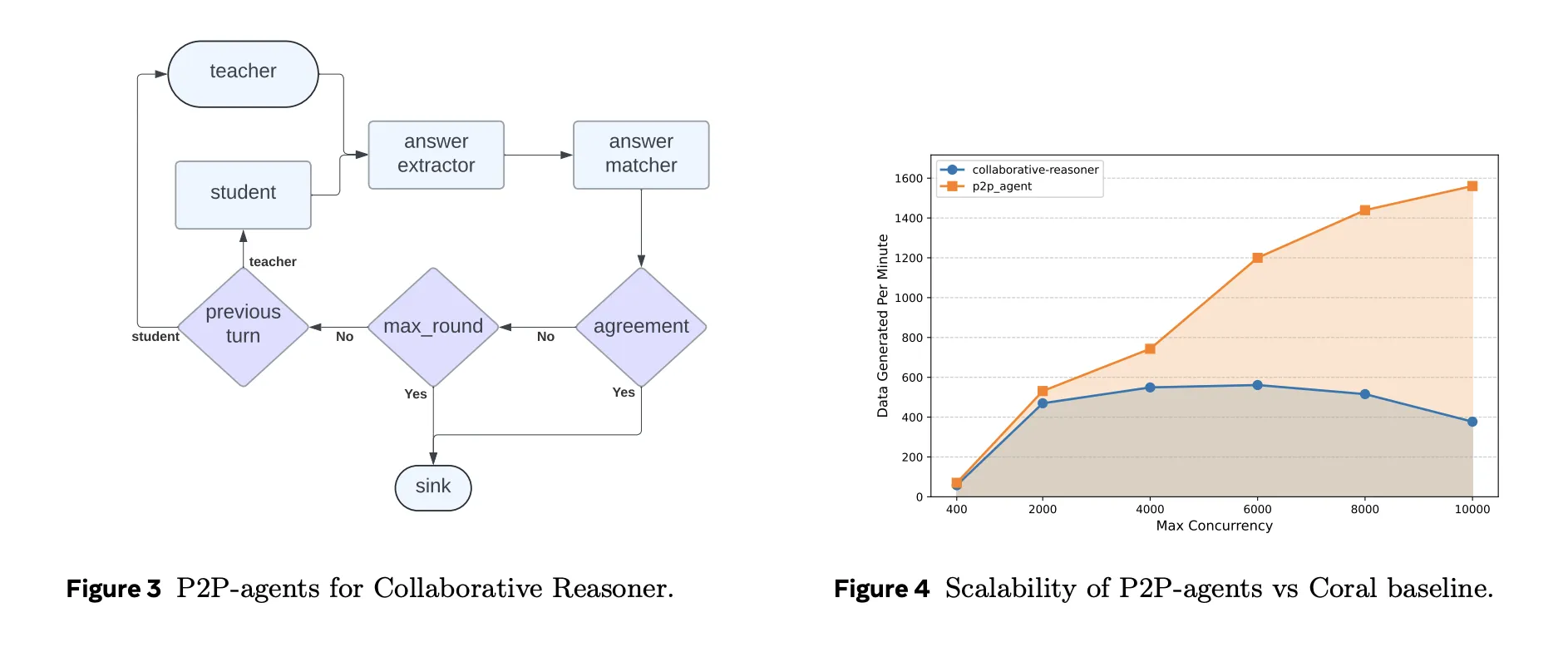

Case Study 1: Collaborative Reasoner

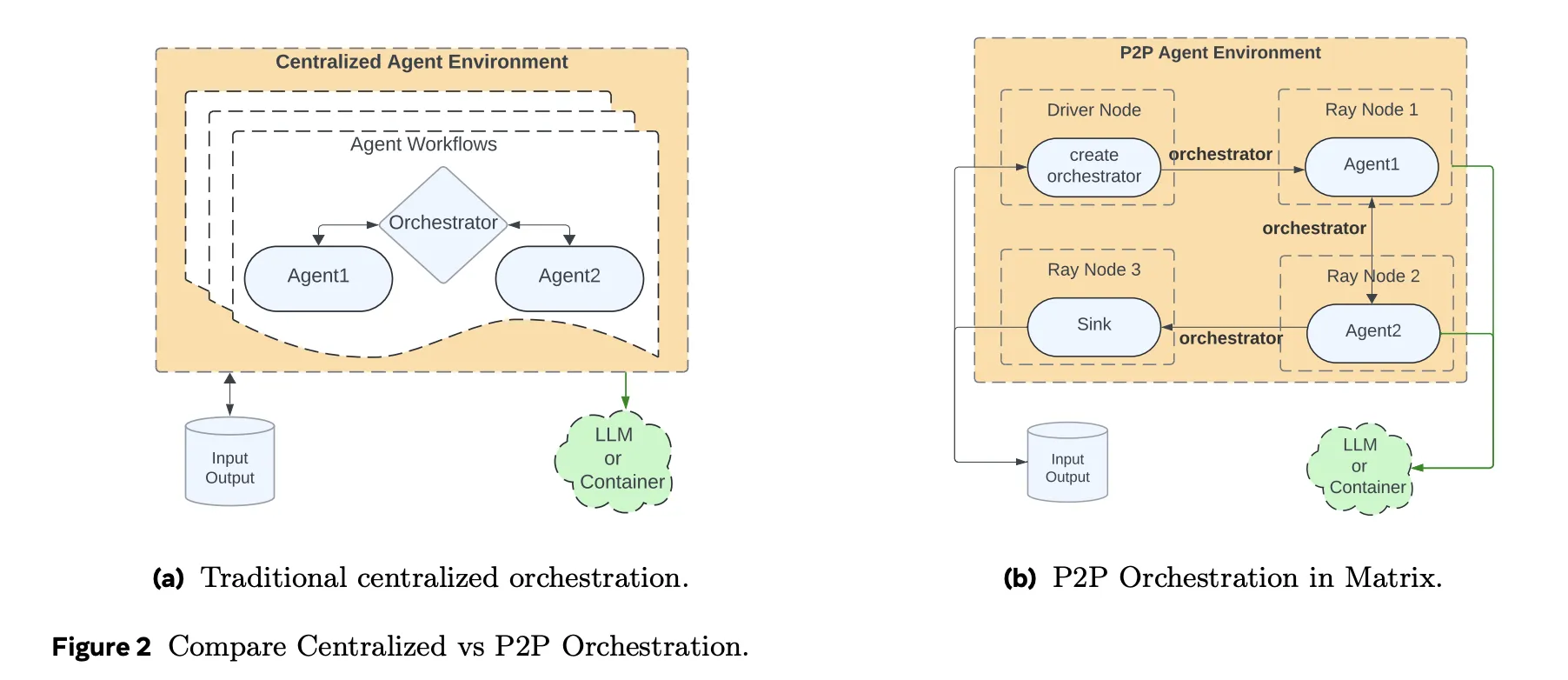

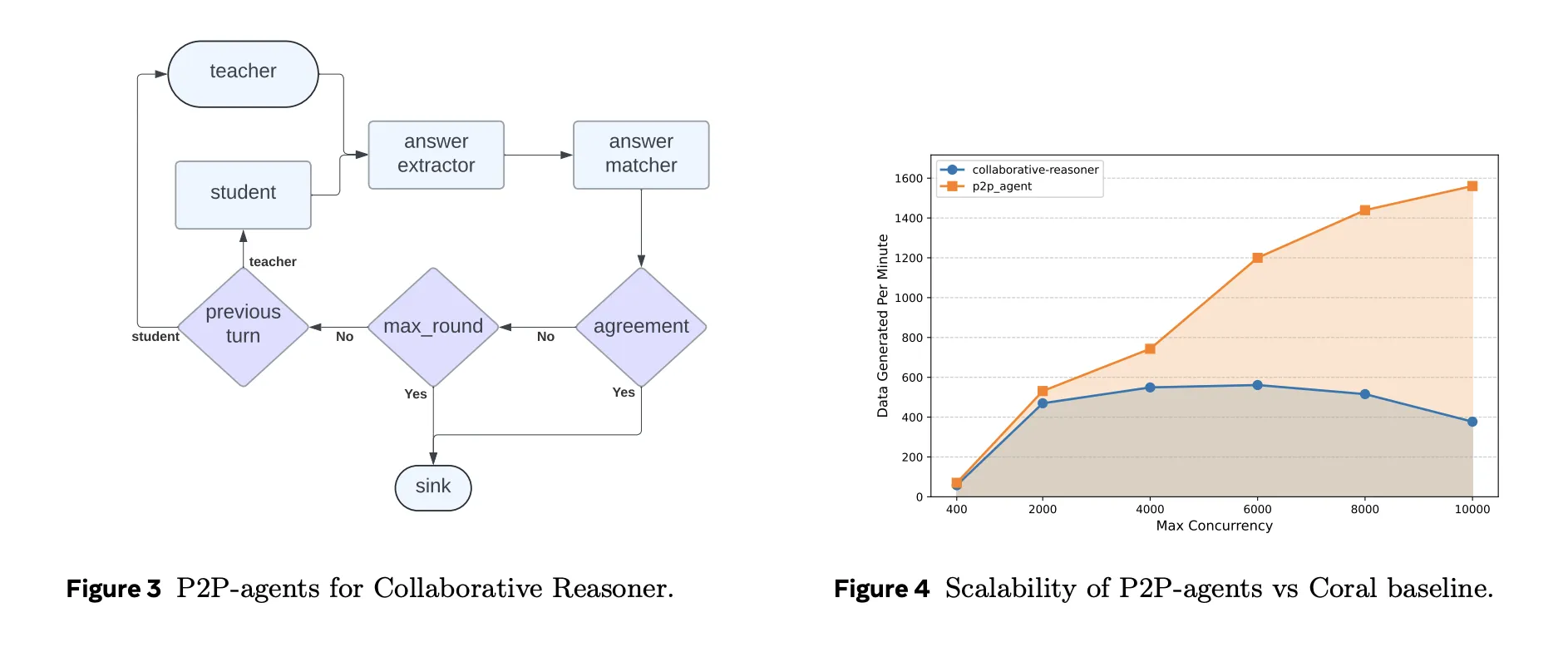

Collaborative Reasoner, also known as Coral, evaluates multi-agent dialogue where two LLM agents discuss a question, disagree when needed and reach a final answer. In the original implementation a central controller manages thousands of self-collaboration trajectories. Matrix reimplements the same protocol using peer-to-peer orchestrators and stateless agents.

On 31 A100 nodes, using LLaMA 3.1 8B Instruct, Matrix configures concurrency as 248 GPUs with 50 queries per GPU, so 12,400 concurrent conversations. The Coral baseline runs at its optimal concurrency of 5,000. On identical hardware, Matrix generates about 2 billion tokens in roughly 4 hours, while Coral produces about 0.62 billion tokens in about 9 hours. That is a 6.8 times increase in token throughput with almost identical agreement correctness around 0.47.
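The concurrency and throughput figures can be recomputed from the quoted numbers. In the sketch below, 8 GPUs per node is inferred from 248 GPUs across 31 nodes. The token totals and durations are the rounded values given above, so the resulting ratio is only approximate: it comes out somewhat above the reported 6.8, likely because the quoted inputs are rounded.

```python
NODES = 31
GPUS_PER_NODE = 8        # inferred: 248 GPUs / 31 nodes
QUERIES_PER_GPU = 50

gpus = NODES * GPUS_PER_NODE
concurrency = gpus * QUERIES_PER_GPU


def tokens_per_second(total_tokens, hours):
    """Average token throughput over a run of the given length."""
    return total_tokens / (hours * 3600)


matrix_tps = tokens_per_second(2.0e9, 4)    # about 2 billion tokens in about 4 hours
coral_tps = tokens_per_second(0.62e9, 9)    # about 0.62 billion tokens in about 9 hours
ratio = matrix_tps / coral_tps

if __name__ == "__main__":
    print(gpus, concurrency)
    print(round(matrix_tps), round(coral_tps), round(ratio, 2))
```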

Case Study 2: NaturalReasoning Web Data Curation

NaturalReasoning constructs a reasoning dataset from large web corpora. Matrix models the pipeline with three agents. A Filter agent uses a smaller classifier model to select English passages that likely contain reasoning. A Score agent uses a larger instruction-tuned model to assign quality scores. A Question agent extracts questions, answers and reasoning chains.

On 25 million DCLM web documents, only about 5.45 percent survive all filters, yielding around 1.19 million question-answer pairs with associated reasoning steps. Matrix then compares different parallelism strategies on a 500 thousand document subset. The best configuration combines data parallelism and task parallelism, with 20 data partitions and 700 concurrent tasks per partition. This achieves about 1.61 times higher throughput than a setting that only scales task concurrency.
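The combination of data partitions and per-partition task concurrency can be sketched as follows. This is a scaled-down, single-process illustration using `asyncio`, not Matrix's scheduler. The function names and the placeholder `fake_handler` are hypothetical, and real partitions would presumably run as separate workers on the cluster. With the reported settings, 20 partitions times 700 tasks gives an upper bound of 14,000 tasks in flight.

```python
import asyncio


def partition(items, n_partitions):
    """Split items round-robin into n_partitions shards (data parallelism)."""
    return [items[i::n_partitions] for i in range(n_partitions)]


async def process_partition(shard, max_concurrent, handler):
    """Process one shard with at most max_concurrent tasks in flight."""
    limit = asyncio.Semaphore(max_concurrent)

    async def guarded(item):
        async with limit:
            return await handler(item)

    return await asyncio.gather(*(guarded(item) for item in shard))


async def run_all(items, n_partitions, max_concurrent, handler):
    shards = partition(items, n_partitions)
    per_shard = await asyncio.gather(
        *(process_partition(s, max_concurrent, handler) for s in shards)
    )
    # Flatten results shard by shard.
    return [r for shard_result in per_shard for r in shard_result]


async def fake_handler(doc_id):
    # Placeholder for the per-document Filter -> Score -> Question pipeline.
    await asyncio.sleep(0)
    return doc_id * 2


def demo():
    # Scaled-down stand-in for 20 partitions x 700 concurrent tasks.
    return asyncio.run(
        run_all(list(range(100)), n_partitions=4, max_concurrent=5, handler=fake_handler)
    )
```

The two knobs are independent. Raising `n_partitions` adds more shards, while `max_concurrent` bounds how many documents each shard processes at once.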

Over the full 25 million document run, Matrix reaches 5,853 tokens per second, compared to 2,778 tokens per second for a Ray Data batch baseline with 14,000 concurrent tasks. That corresponds to a 2.1 times throughput gain that comes purely from peer-to-peer row-level scheduling, not from different models.

Case Study 3: Tau2-Bench Tool Use Trajectories

Tau2-Bench evaluates conversational agents that must use tools and a database in a customer support setting. Matrix represents this environment with four agents, a user simulator, an assistant, a tool executor and a reward calculator, plus a sink that collects metrics. Tool APIs and reward logic are reused from the Tau2 reference implementation and are wrapped in containers.

On a cluster with 13 H100 nodes and dozens of LLM replicas, Matrix generates 22,800 trajectories in about 1.25 hours. That corresponds to roughly 41,000 tokens per second. The baseline Tau2-agent implementation on a single node, configured with 500 concurrent threads, reaches about 2,654 tokens per second and 1,519 trajectories. Average reward stays almost unchanged across both systems, which confirms that the speedup does not come from cutting corners in the environment. Overall, Matrix delivers about 15.4 times higher token throughput on this benchmark.
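The speedups quoted for NaturalReasoning and Tau2-Bench follow directly from the tokens-per-second figures. The short check below uses only numbers stated in the text. The helper name `speedup` is illustrative, and the inputs are approximate, so the results are rounded to one decimal place.

```python
def speedup(candidate_tps, baseline_tps):
    """Ratio of two tokens-per-second measurements."""
    return candidate_tps / baseline_tps


natural_reasoning = speedup(5853, 2778)   # Matrix vs. Ray Data batch baseline
tau2_bench = speedup(41000, 2654)         # Matrix vs. single-node Tau2-agent baseline
trajectories_per_hour = 22800 / 1.25      # Matrix on Tau2-Bench

if __name__ == "__main__":
    print(round(natural_reasoning, 1))
    print(round(tau2_bench, 1))
    print(trajectories_per_hour)
```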

Key Takeaways

- Matrix replaces centralized orchestrators with a peer-to-peer, message-driven agent architecture that treats each task as an independent state machine moving through stateless agents.

- The framework is built entirely on an open source stack, SLURM, Ray, vLLM, SGLang and Apptainer, and scales to tens of thousands of concurrent multi-agent workflows for synthetic data generation, benchmarking and data processing.

- Across three case studies, Collaborative Reasoner, NaturalReasoning and Tau2-Bench, Matrix delivers about 2 to 15.4 times higher token throughput than specialized baselines on identical hardware, while maintaining comparable output quality and rewards.

- Matrix offloads large conversation histories to Ray’s object store and keeps only lightweight references in messages, which reduces peak network bandwidth and supports high-throughput LLM serving with gRPC-based model backends.

Editorial Notes

Matrix is a pragmatic systems contribution that takes multi-agent synthetic data generation from bespoke scripts to an operational runtime. By encoding control flow and data flow into orchestrators, then pushing execution into stateless P2P agents on Ray, it cleanly separates scheduling, LLM inference and tools. The case studies on Collaborative Reasoner, NaturalReasoning and Tau2-Bench show that careful systems design, not new model architectures, is now the main lever for scaling synthetic data pipelines.

Michal Sutter is a data science professional with a Master of Science in Data Science from the University of Padova. With a solid foundation in statistical analysis, machine learning, and data engineering, Michal excels at transforming complex datasets into actionable insights.
