ByteDance Seed recently released research that may change how we build reasoning AI. For years, developers and AI researchers have struggled to ‘cold-start’ Large Language Models (LLMs) into Long Chain-of-Thought (Long CoT) models. Most models lose their way or fail to transfer patterns during multi-step reasoning.

The ByteDance team identified the problem: we’ve been thinking about reasoning the wrong way. Instead of just words or nodes, effective AI reasoning has a stable, molecular-like structure.

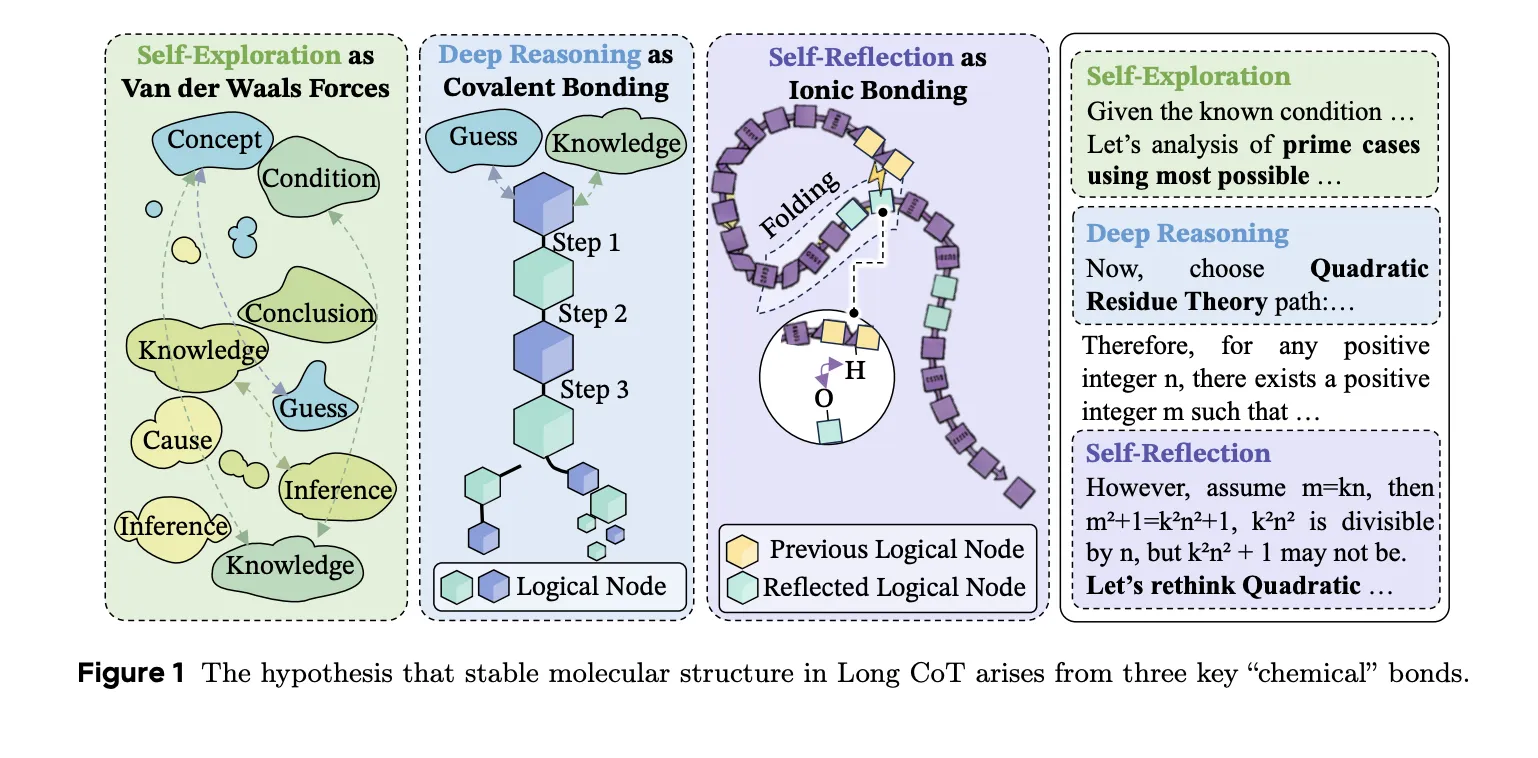

The three ‘Chemical Bonds’ of Thought

The researchers posit that high-quality reasoning trajectories are held together by three interaction types. These mirror the forces found in organic chemistry:

- Deep Reasoning as Covalent Bonds: This forms the primary ‘backbone’ of the thought process. It encodes strong logical dependencies where Step A must justify Step B. Breaking this bond destabilizes the entire answer.

- Self-Reflection as Hydrogen Bonds: This acts as a stabilizer. Just as proteins gain stability when chains fold, reasoning stabilizes when later steps (like Step 100) revise or reinforce earlier premises (like Step 10). In their tests, 81.72% of reflection steps successfully reconnected to previously formed clusters.

- Self-Exploration as Van der Waals Forces: These are weak bridges between distant clusters of logic. They allow the model to probe new possibilities or alternative hypotheses before imposing stronger logical constraints.
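To make the analogy concrete, here is a minimal Python sketch of how a reasoning trace could be stored as typed ‘bonds’ between steps. The `Bond` class, the label names, and the toy trace are all hypothetical illustrations, not the paper’s data format. It only shows the intuition that deep-reasoning links tend to be local while reflection links reach back across many steps.

```python
from collections import Counter
from dataclasses import dataclass

# Hypothetical labels for the three bond types described above.
DEEP, REFLECT, EXPLORE = "deep_reasoning", "self_reflection", "self_exploration"


@dataclass(frozen=True)
class Bond:
    src: int   # index of the earlier step
    dst: int   # index of the later step that builds on or revisits it
    kind: str  # one of DEEP, REFLECT, EXPLORE


def bond_distribution(bonds):
    """Return the share of each bond type in a trace."""
    counts = Counter(b.kind for b in bonds)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {kind: counts[kind] / total for kind in (DEEP, REFLECT, EXPLORE)}


def mean_span(bonds, kind):
    """Average distance, in steps, covered by bonds of one type."""
    spans = [b.dst - b.src for b in bonds if b.kind == kind]
    return sum(spans) / len(spans) if spans else 0.0


# Toy trace (made up): a local backbone, one exploratory jump, one late reflection.
trace = [
    Bond(0, 1, DEEP), Bond(1, 2, DEEP), Bond(2, 3, DEEP),
    Bond(3, 7, EXPLORE),
    Bond(1, 9, REFLECT),
]

print(bond_distribution(trace))
print(mean_span(trace, DEEP), mean_span(trace, REFLECT))
```

In this toy trace the backbone bonds each span a single step, while the reflection bond reaches back eight steps, which is the ‘folding’ behavior the hydrogen-bond analogy describes.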

Why ‘Wait, Let Me Think’ Isn’t Enough

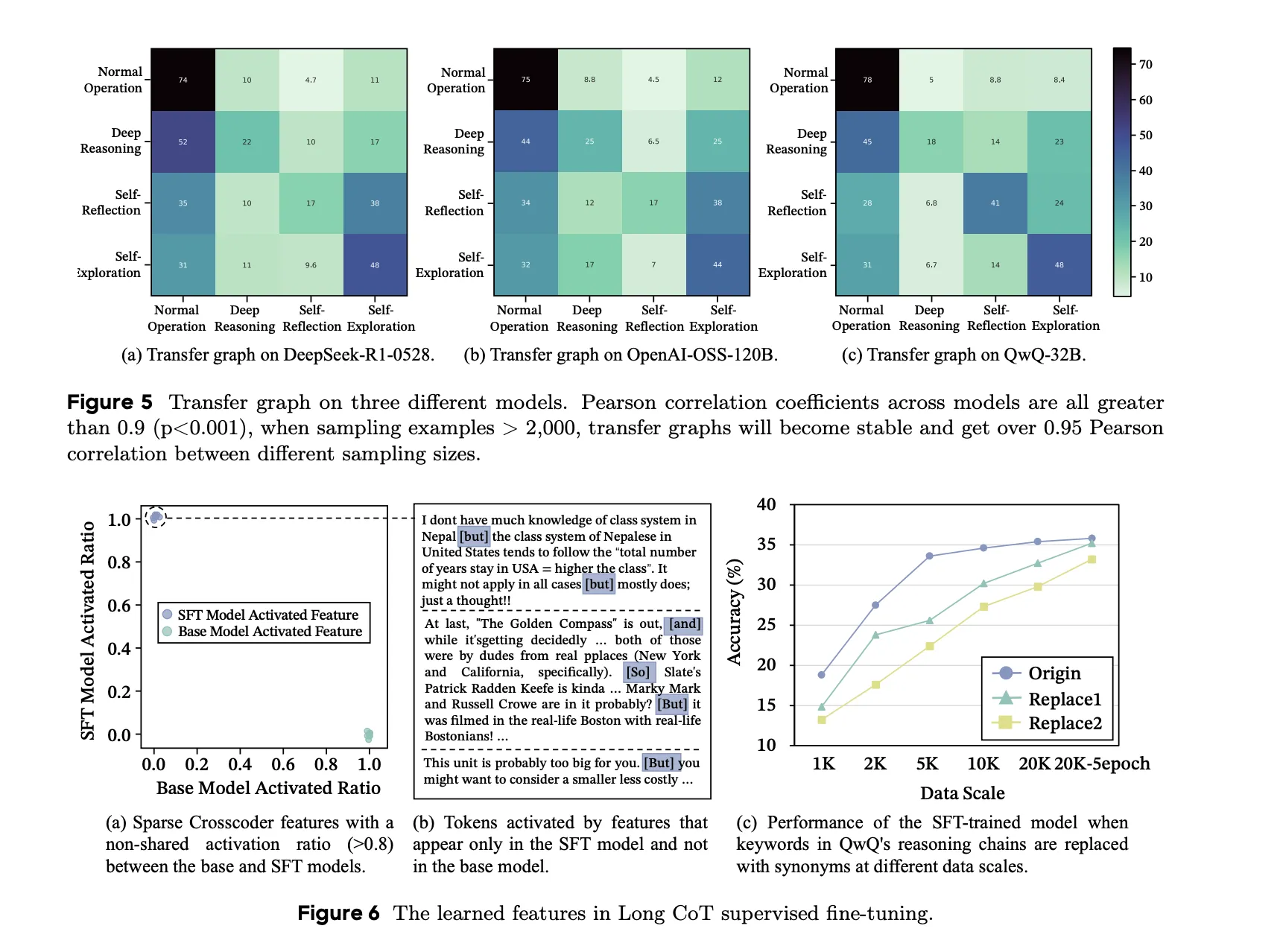

Most AI developers and researchers try to fix reasoning by training models to imitate keywords like ‘wait’ or ‘maybe’. The ByteDance team showed that models actually learn the underlying reasoning behavior, not the surface words.

The research team identifies a phenomenon called Semantic Isomers. These are reasoning chains that solve the same task and use the same concepts but differ in how their logical ‘bonds’ are distributed.
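One rough way to picture two ‘isomers’ is as chains with the same number of steps but a different mix of behaviors. The sketch below compares two such mixes with total variation distance. The behavior labels, the toy chains, and the choice of distance are assumptions made for illustration; the article does not say which metric the paper uses.

```python
from collections import Counter


def behavior_distribution(labels):
    """Share of each behavior label in a chain (empty chain -> empty dict)."""
    if not labels:
        return {}
    counts = Counter(labels)
    return {label: n / len(labels) for label, n in counts.items()}


def total_variation(p, q):
    """Total variation distance between two discrete distributions."""
    keys = set(p) | set(q)
    return 0.5 * sum(abs(p.get(k, 0.0) - q.get(k, 0.0)) for k in keys)


# Two made-up chains of equal length that solve the same task in different ways.
chain_a = ["deep", "deep", "deep", "reflect", "explore", "deep"]
chain_b = ["explore", "explore", "deep", "reflect", "reflect", "deep"]

distance = total_variation(
    behavior_distribution(chain_a),
    behavior_distribution(chain_b),
)
print(round(distance, 3))
```

A distance of zero would mean the two chains have the same behavior mix; larger values mean the ‘bonds’ are distributed more differently even though the task and the concepts match.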

Key findings include:

- Imitation Fails: Fine-tuning on human-annotated traces or using In-Context Learning (ICL) from weak models fails to build stable Long CoT structures.

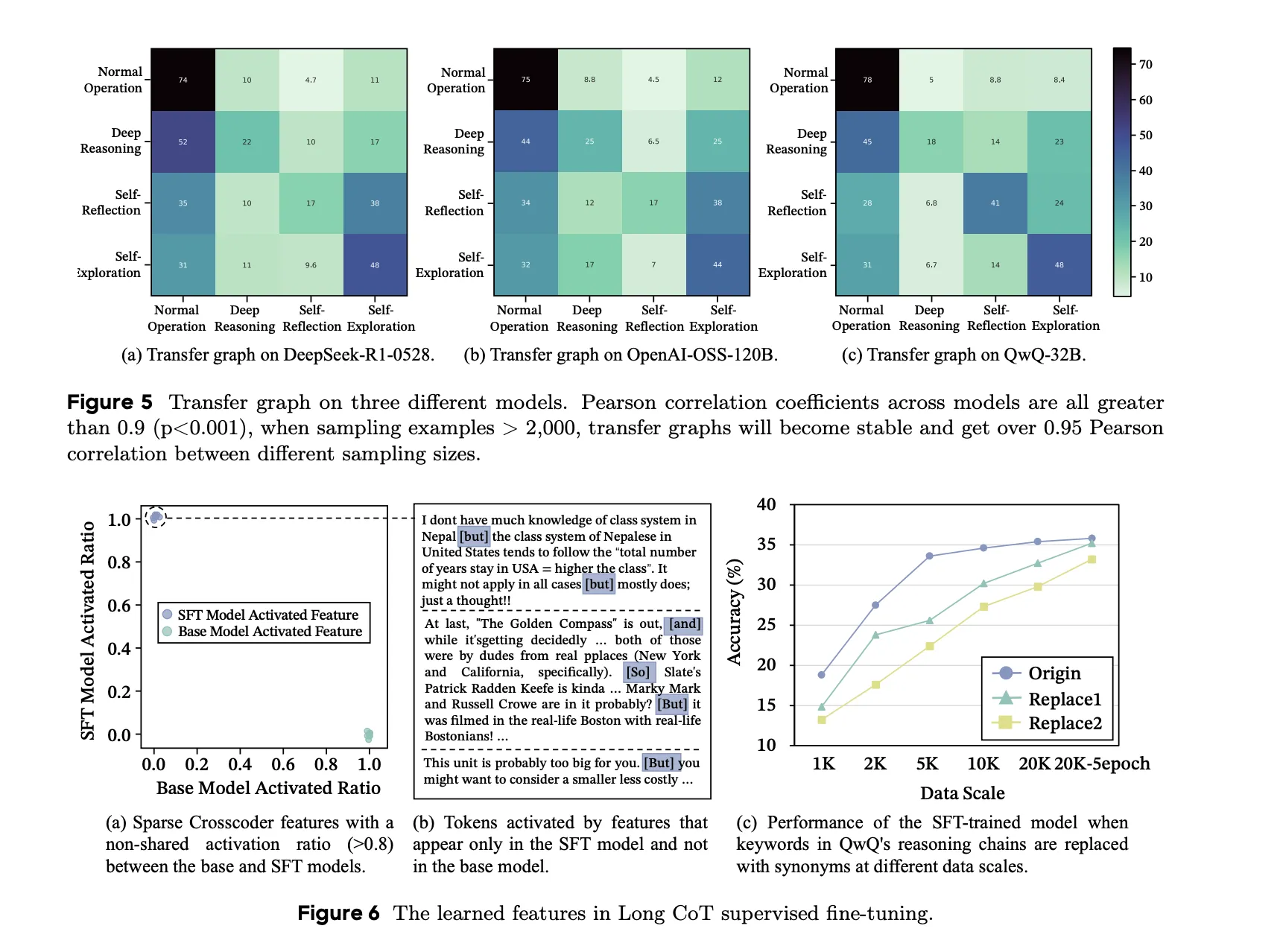

- Structural Conflict: Mixing reasoning data from different strong teachers (like DeepSeek-R1 and OpenAI-OSS) actually destabilizes the model. Even when the data is similar, the different “molecular” structures cause structural chaos and drop performance.

- Information Flow: Unlike humans, who have uniform information gain, strong reasoning models exhibit metacognitive oscillation. They alternate between high-entropy exploration and stable convergent validation.
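The ‘oscillation’ idea can be illustrated with a small entropy calculation. This sketch assumes each reasoning step comes with some probability distribution (for example, over next behaviors) and counts how often the trace flips between a high-entropy and a low-entropy phase. The threshold and the toy numbers are made up, and the paper’s actual information-gain measure is not described in this article.

```python
import math


def shannon_entropy(probs):
    """Shannon entropy, in bits, of a discrete probability distribution."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


def count_phase_switches(entropies, threshold):
    """Count crossings between high-entropy and low-entropy phases."""
    phases = [h > threshold for h in entropies]
    return sum(a != b for a, b in zip(phases, phases[1:]))


# Toy per-step distributions: uncertain, confident, uncertain, confident.
steps = [
    [0.25, 0.25, 0.25, 0.25],
    [0.97, 0.01, 0.01, 0.01],
    [0.25, 0.25, 0.25, 0.25],
    [0.97, 0.01, 0.01, 0.01],
]
entropies = [shannon_entropy(p) for p in steps]
switches = count_phase_switches(entropies, threshold=1.0)
print(switches)
```

A trace that keeps alternating between exploring and validating produces many switches; a flat, uniform trace produces none.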

MOLE-SYN: The Synthesis Method

To fix these issues, the ByteDance team introduced MOLE-SYN. This is a ‘distribution-transfer-graph’ method. Instead of directly copying a teacher’s text, it transfers the behavioral structure to the student model.

It works by estimating a behavior transition graph from strong models and guiding a cheaper model to synthesize its own effective Long CoT structures. This decoupling of structure from surface text yields consistent gains across 6 major benchmarks, including GSM8K, MATH-500, and OlymBench.
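The general idea of a behavior transition graph can be sketched as a simple first-order Markov model over behavior labels. To be clear, this is not the MOLE-SYN implementation. The function names, the labels, and the toy teacher traces are hypothetical, and the article does not confirm that the paper uses a first-order model.

```python
import random
from collections import defaultdict


def estimate_transition_graph(traces):
    """Estimate P(next behavior | current behavior) from labeled traces."""
    counts = defaultdict(lambda: defaultdict(int))
    for trace in traces:
        for cur, nxt in zip(trace, trace[1:]):
            counts[cur][nxt] += 1
    graph = {}
    for cur, nxts in counts.items():
        total = sum(nxts.values())
        graph[cur] = {nxt: c / total for nxt, c in nxts.items()}
    return graph


def sample_behavior_plan(graph, start, length, rng):
    """Random-walk the graph to get a behavior sequence for a student to follow."""
    plan = [start]
    # Stop early if the current behavior has no outgoing transitions.
    while len(plan) < length and plan[-1] in graph:
        options = graph[plan[-1]]
        nxt = rng.choices(list(options), weights=list(options.values()))[0]
        plan.append(nxt)
    return plan


# Made-up behavior labels extracted from two teacher traces.
teacher_traces = [
    ["deep", "deep", "explore", "deep", "reflect"],
    ["deep", "explore", "deep", "deep", "reflect"],
]
graph = estimate_transition_graph(teacher_traces)
plan = sample_behavior_plan(graph, "deep", 6, random.Random(0))
print(graph)
print(plan)
```

In a pipeline along these lines, each entry in `plan` would become an instruction to the cheaper model (for example, ‘now check an earlier step’), so the student writes its own text while following the teacher’s behavioral structure.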

Protecting the ‘Thought Molecule’

This research also sheds light on how private AI companies protect their models. Exposing full reasoning traces allows others to clone the model’s internal procedures.

The ByteDance team found that summarization and reasoning compression are effective defenses. By reducing the token count, often by more than 45%, companies disrupt the reasoning bond distributions. This creates a gap between what the model outputs and its internal ‘error-bounded transitions,’ making it much harder to distill the model’s capabilities.
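For a sense of what a 45% reduction means in practice, here is a tiny helper that measures how much shorter a compressed trace is. It uses whitespace splitting as a stand-in for a real tokenizer, which is an assumption for illustration only; actual token counts depend on the model’s tokenizer.

```python
def token_reduction(original, compressed):
    """Fraction of tokens removed, using whitespace splitting as a rough proxy."""
    n_orig = len(original.split())
    n_comp = len(compressed.split())
    if n_orig == 0:
        raise ValueError("original trace is empty")
    return 1.0 - n_comp / n_orig


# Made-up example: a 20-word trace summarized down to 10 words.
full_trace = " ".join(["step"] * 20)
summary = " ".join(["step"] * 10)

reduction = token_reduction(full_trace, summary)
print(f"{reduction:.0%}")
```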

Key Takeaways

- Reasoning as ‘Molecular’ Bonds: Effective Long Chain-of-Thought (Long CoT) is defined by three specific ‘chemical’ bonds: Deep Reasoning (covalent-like) forms the logical backbone, Self-Reflection (hydrogen-bond-like) provides global stability through logical folding, and Self-Exploration (van der Waals-like) bridges distant semantic concepts.

- Behavior Over Keywords: Models internalize underlying reasoning structures and transition distributions rather than just surface-level lexical cues like ‘wait’ or ‘maybe’. Replacing keywords with synonyms doesn’t significantly impact performance, indicating that true reasoning depth comes from learned behavioral motifs.

- The ‘Semantic Isomer’ Conflict: Combining heterogeneous reasoning data from different strong models (e.g., DeepSeek-R1 and OpenAI-OSS) can trigger ‘structural chaos’. Even when data sources are statistically similar, incompatible behavioral distributions can break logical coherence and degrade model performance.

- MOLE-SYN Method: This ‘distribution-transfer-graph’ framework enables models to synthesize effective Long CoT structures from scratch using cheaper instruction LLMs. By transferring the behavioral transition graph instead of direct text, MOLE-SYN achieves performance close to expensive distillation while stabilizing Reinforcement Learning (RL).

- Protection via Structural Disruption: Private LLMs can protect their internal reasoning processes through summarization and compression. Reducing token count by roughly 45% or more effectively ‘breaks’ the bond distributions, making it significantly harder for unauthorized models to clone internal reasoning procedures via distillation.
