PixVerse has announced the release of PixVerse R1, billed as the world's first general-purpose real-time world model, marking a significant leap in interactive AI-generated video and digital environments.

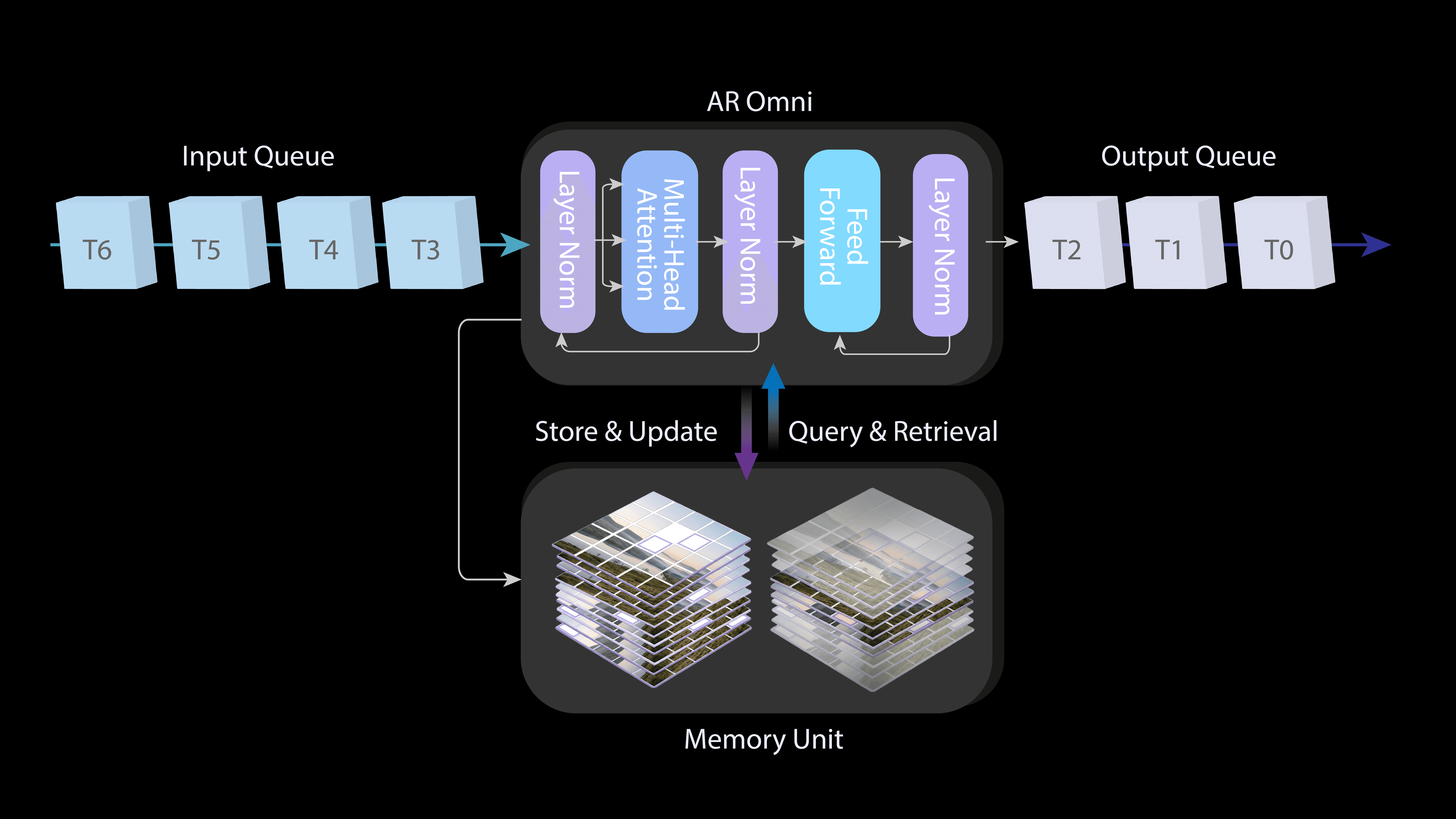

According to the company, PixVerse R1 is powered by an instant-response engine that reduces generation latency to near zero, effectively ending the era of asynchronous rendering. Built on an autoregressive streaming architecture, the model lets users modify prompts in real time during generation, much like a director adjusting scenes on the fly, while delivering synchronized 1080p high-definition audio and video.

With PixVerse R1, video is no longer a closed, finished product. Instead, it becomes an interactive, continuous, and co-evolving digital world, enabling real-time interaction, extension, and collaborative creation.

Key Technical Highlights of PixVerse R1:

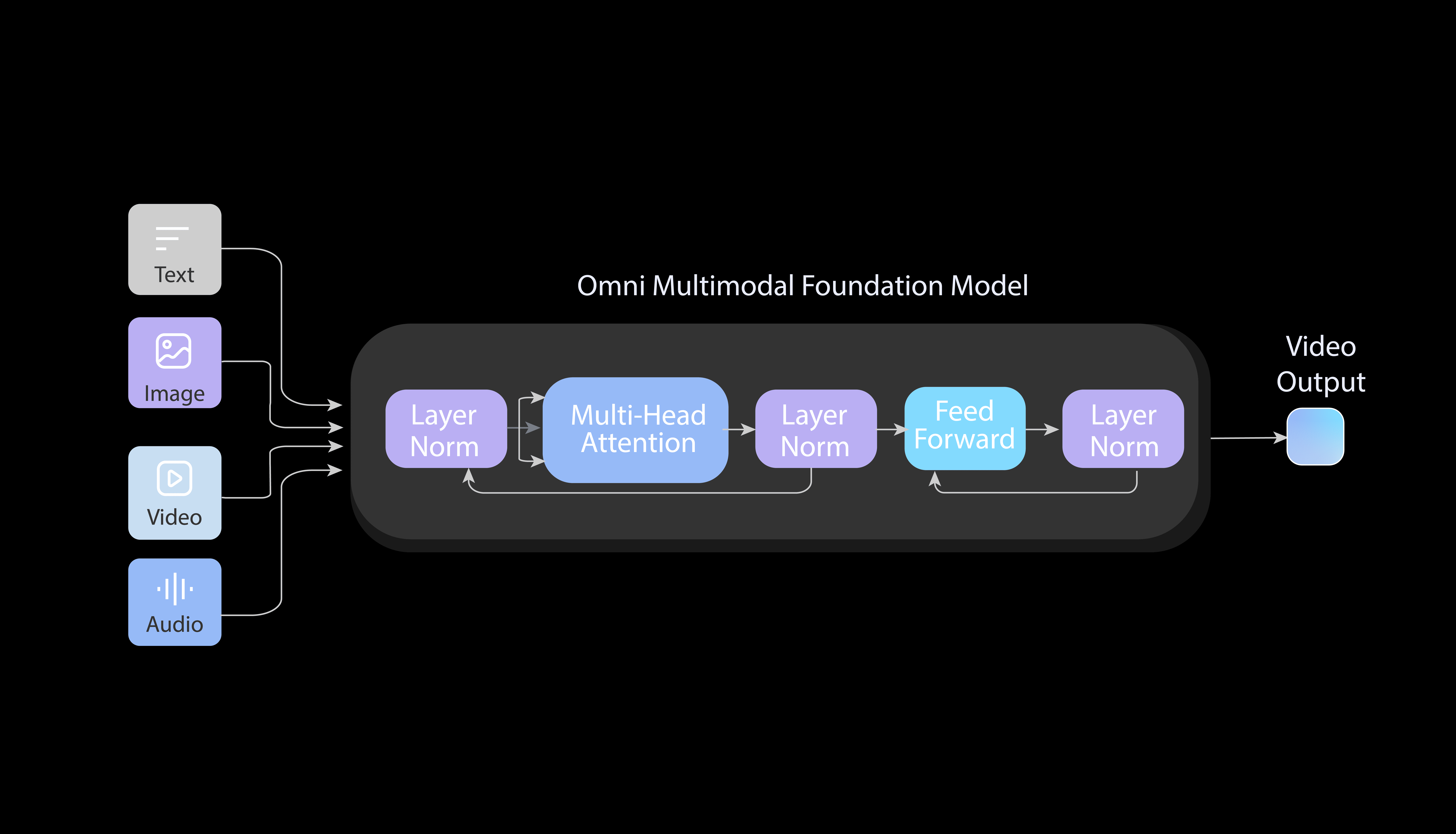

1. Unified Model Architecture

A single model capable of processing text, audio, and video in a unified framework.

2. Infinite Streaming

Continuous, long-duration video generation enabled by autoregressive modeling.

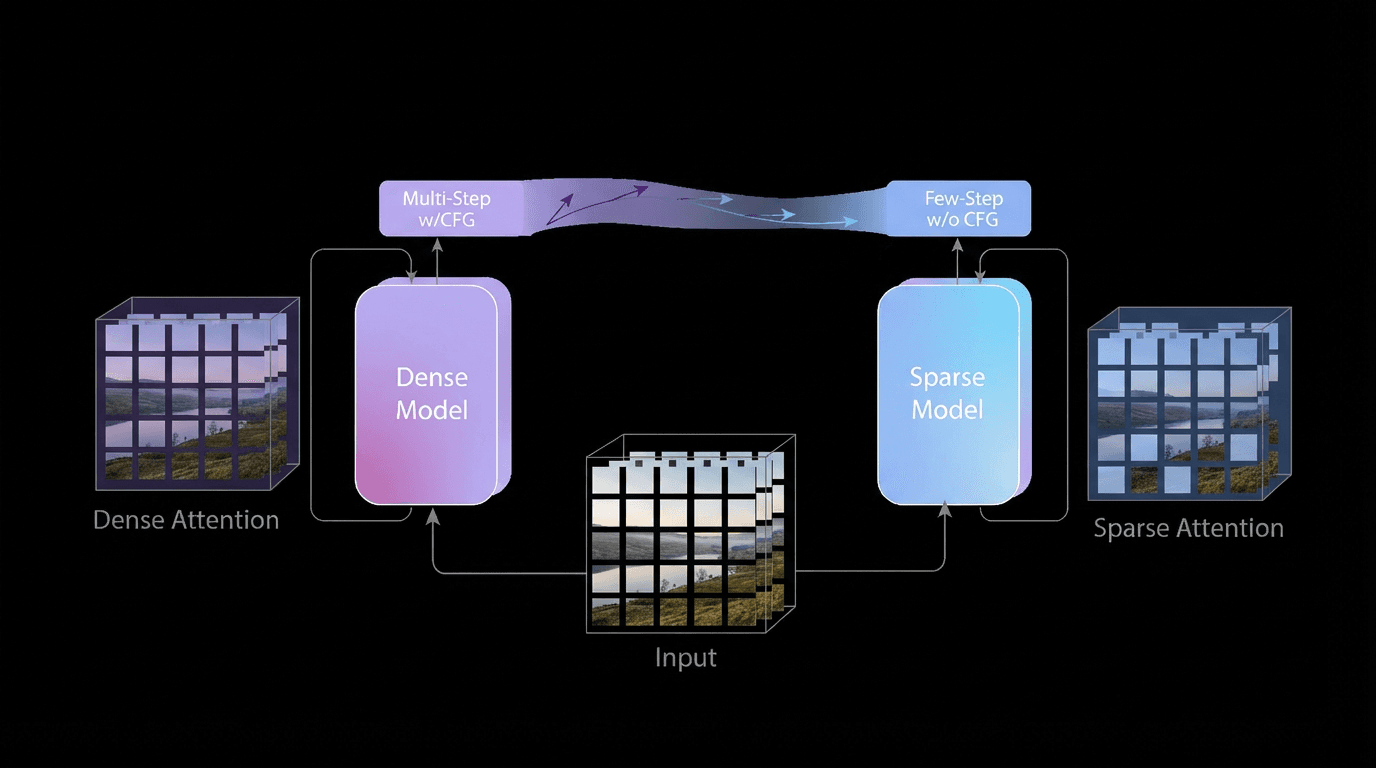

3. Instant Response Engine

Breakthrough ultra-low-latency sampling, requiring only 1–4 steps per generation cycle.
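To make the streaming idea concrete, here is a minimal toy sketch in Python of an autoregressive loop in which each chunk is conditioned on the previous chunk and on whatever prompt is active at that moment, using a few sampling steps per chunk. It is not PixVerse's implementation: `Chunk`, `sample_chunk`, `stream`, the use of prompt length as a stand-in for conditioning, and the 0.5 update factor are all hypothetical, chosen only to show the control flow.

```python
from dataclasses import dataclass
from typing import Dict, Iterator


@dataclass
class Chunk:
    index: int
    prompt: str
    value: float  # stand-in for a block of audio/video frames


def sample_chunk(prev: float, prompt: str, steps: int) -> float:
    """Toy few-step sampler: refine the previous state toward a prompt-dependent target."""
    if not 1 <= steps <= 4:
        raise ValueError("steps must be between 1 and 4")
    target = float(len(prompt))  # hypothetical stand-in for prompt conditioning
    x = prev
    for _ in range(steps):
        x += 0.5 * (target - x)  # each step halves the distance to the target
    return x


def stream(initial_prompt: str, edits: Dict[int, str],
           n_chunks: int, steps: int = 2) -> Iterator[Chunk]:
    """Yield chunks one at a time; `edits` maps a chunk index to a new prompt."""
    prompt = initial_prompt
    state = 0.0
    for i in range(n_chunks):
        prompt = edits.get(i, prompt)  # a prompt change takes effect from chunk i onward
        state = sample_chunk(state, prompt, steps)  # conditioned on the previous chunk
        yield Chunk(i, prompt, state)


if __name__ == "__main__":
    # The "director" swaps the prompt at chunk 2 while the stream keeps running.
    for chunk in stream("city", {2: "forest at dusk"}, n_chunks=4):
        print(chunk)
```

Because the generator carries its state forward and yields each chunk as soon as it is sampled, the stream has no fixed end, and a prompt edit affects only the chunks produced after it.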

PixVerse was founded by Wang Changhu, former Director of the ByteDance AI Lab. The company has surpassed 100 million users globally, with over 16 million monthly active users. Backed by more than $60 million in funding led by Alibaba, PixVerse reports annual recurring revenue (ARR) exceeding $40 million, underscoring its strong commercialization potential.

Source: OSChina
