Large context windows have dramatically increased how much information modern language models can process in a single prompt. With models capable of handling hundreds of thousands, or even millions, of tokens, it's easy to assume that Retrieval-Augmented Generation (RAG) is no longer necessary. If you can fit an entire codebase or documentation library into the context window, why build a retrieval pipeline at all?

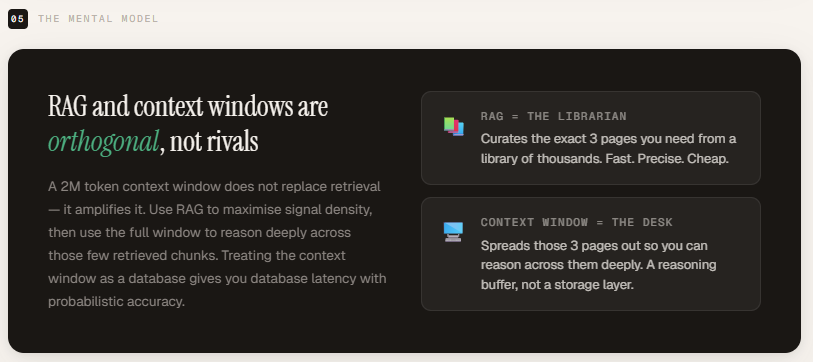

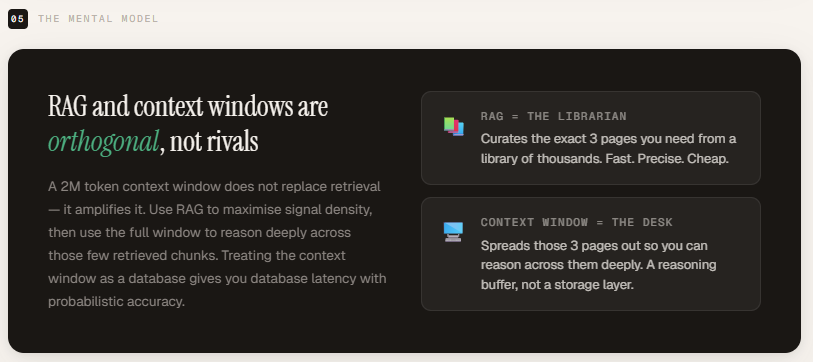

The key distinction is that a context window defines how much the model can see, while RAG determines what the model should see. A large window increases capacity, but it doesn't improve relevance. RAG filters and selects the most important information before it reaches the model, improving signal-to-noise ratio, efficiency, and reliability. The two approaches solve different problems and are not substitutes for one another.

In this article, we compare both strategies directly. Using the OpenAI API, we evaluate Retrieval-Augmented Generation against brute-force context stuffing on the same documentation corpus. We measure token usage, latency, and cost, and demonstrate how burying critical information inside large prompts can affect model performance. The results highlight why large context windows complement RAG rather than replace it.

Installing the dependencies

import os
import time
import textwrap
import numpy as np
import tiktoken
from openai import OpenAI
from getpass import getpass

os.environ["OPENAI_API_KEY"] = getpass('Enter OpenAI API Key: ')
client = OpenAI()

We use text-embedding-3-small as the embedding model to convert documents and queries into vector representations for efficient semantic retrieval. For generation and reasoning, we use gpt-4o, with token accounting handled via its corresponding tiktoken encoding to accurately measure context size and cost.

EMBED_MODEL = "text-embedding-3-small"
CHAT_MODEL = "gpt-4o"
ENC = tiktoken.encoding_for_model("gpt-4o")

Creating the document corpus

This corpus serves as the retrieval source for our benchmark. In the RAG setup, embeddings are generated for each document and relevant chunks are retrieved based on semantic similarity. In the context-stuffing setup, the entire corpus is injected into the prompt. Because the documents contain specific numeric clauses (e.g., time limits, rate caps, refund windows), they're well-suited for testing retrieval accuracy, signal density, and the “Lost in the Middle” effect under large-context conditions.

The corpus consists of 10 structured policy documents totaling roughly 650 tokens, with each document ranging between 54 and 83 tokens. This size keeps the dataset manageable while still reflecting the variety and density of a realistic enterprise documentation set.

Although relatively small, the corpus includes tightly packed numerical clauses, conditional rules, and compliance statements, making it suitable for evaluating retrieval precision, reasoning accuracy, and token efficiency. It provides a controlled environment to compare RAG-based selective retrieval against full context stuffing without introducing external noise.

def count_tokens(text: str) -> int:
    """Count tokens using the gpt-4o tiktoken encoding."""
    return len(ENC.encode(text))

DOCS = [
    {
        "id": 1, "title": "Refund Policy",
        "content": (
            "Customers may request a full refund within 30 days of purchase. "
            "Refunds are processed within 5-7 business days to the original payment method. "
            "Digital products are non-refundable once the download link has been accessed. "
            "Subscription cancellations stop future charges but do not trigger automatic refunds "
            "for the current billing cycle unless the cancellation is made within 48 hours of renewal."
        )
    },
    {
        "id": 2, "title": "Shipping Information",
        "content": (
            "Standard shipping takes 5-7 business days. Express shipping delivers in 2-3 business days. "
            "Orders over $50 qualify for free standard shipping within the continental US. "
            "International shipping is available to 30 countries and takes 10-21 business days. "
            "Tracking numbers are emailed within 24 hours of dispatch."
        )
    },
    {
        "id": 3, "title": "Account Security",
        "content": (
            "Two-factor authentication (2FA) can be enabled from the Security tab in account settings. "
            "Passwords must be at least 12 characters and include one uppercase letter, one number, "
            "and one special character. Active sessions expire after 30 days of inactivity. "
            "Suspicious login attempts trigger an automatic account lock and a reset email."
        )
    },
    {
        "id": 4, "title": "API Rate Limits",
        "content": (
            "Free tier: 100 requests per day, max 10 requests per minute. "
            "Pro tier: 10 000 requests per day, max 200 requests per minute. "
            "Enterprise tier: unlimited requests, burst up to 1 000 per minute. "
            "All responses include X-RateLimit-Remaining and X-RateLimit-Reset headers. "
            "Exceeding limits returns HTTP 429 with a Retry-After header."
        )
    },
    {
        "id": 5, "title": "Data Privacy & GDPR",
        "content": (
            "All user data is encrypted at rest using AES-256 and in transit using TLS 1.3. "
            "We never sell or rent personal data to third parties. "
            "The platform is fully GDPR and CCPA compliant. "
            "Data deletion requests are processed within 72 hours. "
            "Users can export all their data in JSON or CSV format from the Privacy section."
        )
    },
    {
        "id": 6, "title": "Billing & Subscription Cycles",
        "content": (
            "Subscriptions renew automatically on the same calendar day each month. "
            "Annual plans offer a 20 % discount compared to monthly billing. "
            "Invoices are sent via email 3 days before each renewal. "
            "Failed payments retry three times over 7 days before the account is downgraded."
        )
    },
    {
        "id": 7, "title": "Supported File Formats",
        "content": (
            "Supported upload formats: PDF, DOCX, XLSX, PPTX, PNG, JPG, WebP, MP4, MOV. "
            "Maximum individual file size is 100 MB. "
            "Batch uploads support up to 50 files simultaneously. "
            "Files are virus-scanned on upload and quarantined if threats are detected."
        )
    },
    {
        "id": 8, "title": "Compliance Certifications",
        "content": (
            "The platform holds SOC 2 Type II certification, renewed annually. "
            "ISO 27001 compliance is maintained with quarterly internal audits. "
            "A HIPAA Business Associate Agreement (BAA) is available for healthcare customers on the Enterprise plan. "
            "PCI-DSS Level 1 compliance covers all payment processing flows."
        )
    },
    {
        "id": 9, "title": "SLA & Uptime Guarantees",
        "content": (
            "Enterprise SLA guarantees 99.9 % monthly uptime (≤ 43 minutes downtime/month). "
            "Scheduled maintenance windows occur every Sunday between 02:00-04:00 UTC. "
            "Unplanned incidents are communicated via status.example.com within 15 minutes. "
            "SLA breaches are compensated with service credits applied to the next invoice."
        )
    },
    {
        "id": 10, "title": "Cancellation Policy",
        "content": (
            "Users can cancel at any time from the Subscription tab in account settings. "
            "Annual plan holders receive a pro-rated refund for unused months if cancelled within 30 days of renewal. "
            "Cancellation takes effect at the end of the current billing period; access continues until then. "
            "Re-activation within 90 days of cancellation restores all historical data."
        )
    },

]

total_tokens = sum(count_tokens(d["content"]) for d in DOCS)
print(f"Corpus: {len(DOCS)} documents | {total_tokens} tokens total\n")
for d in DOCS:
    print(f"  [{d['id']:02d}] {d['title']:<35} ({count_tokens(d['content'])} tokens)")

Building the Embedding Index

We generate vector embeddings for all 10 documents using the text-embedding-3-small model and store them in a NumPy array. Each document is converted into a 1,536-dimensional float32 vector, producing an index with shape (10, 1536).

The entire indexing step completes in 1.82 seconds, demonstrating how lightweight semantic indexing is at this scale. This vector matrix now acts as our retrieval layer, enabling fast similarity search during the RAG workflow instead of scanning raw text at inference time.
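To put "lightweight" in concrete terms, the sketch below computes the memory footprint of a dense float32 index of this shape. The `index_nbytes` helper and the zero-filled placeholder array are illustrative assumptions, not part of the benchmark; real vectors would come from the embeddings API.

```python
import numpy as np

def index_nbytes(n_docs: int, dim: int = 1536) -> int:
    """Bytes needed for a dense float32 index of shape (n_docs, dim)."""
    return n_docs * dim * np.dtype(np.float32).itemsize

# Placeholder with the same shape and dtype as the article's index
# (zeros stand in for real embeddings).
placeholder = np.zeros((10, 1536), dtype=np.float32)

print(f"shape: {placeholder.shape}, bytes: {placeholder.nbytes:,}")
print(f"1,000,000 documents would need about {index_nbytes(1_000_000) / 1e9:.1f} GB")
```

At 10 documents the matrix occupies 61,440 bytes, so a brute-force dot product over it is effectively free. The same dense layout grows linearly with the corpus, which is the point at which approximate-nearest-neighbour indexes usually become worth considering.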

def embed_texts(texts: list[str]) -> np.ndarray:
    """Call the OpenAI Embeddings API and return an (N, 1536) float32 array."""
    response = client.embeddings.create(model=EMBED_MODEL, input=texts)
    return np.array([item.embedding for item in response.data], dtype=np.float32)

print("Building index ... ", end="", flush=True)
t0 = time.perf_counter()
corpus_texts = [d["content"] for d in DOCS]
index = embed_texts(corpus_texts)  # shape: (10, 1536)
elapsed = time.perf_counter() - t0
print(f"done in {elapsed:.2f}s | index shape: {index.shape}")

Retrieval and Prompt helpers

The functions below implement the full comparison pipeline between RAG and context stuffing.

- retrieve() embeds the user query, computes cosine similarity via a dot product against the precomputed index, and returns the top-k most relevant documents with similarity scores. Because text-embedding-3-small outputs unit-norm vectors, the dot product directly represents cosine similarity, keeping retrieval both simple and efficient.

- build_rag_prompt() constructs a focused prompt using only the retrieved chunks, ensuring high signal density and minimal irrelevant context.

- build_stuffed_prompt() constructs a brute-force prompt by injecting the entire corpus into the context, simulating the “just use the whole window” approach.

- call_llm() sends the prompt to gpt-4o, measures latency, and captures token usage, allowing us to directly compare cost, speed, and efficiency between the two strategies.

Together, these helpers create a controlled environment to benchmark retrieval precision versus raw context capacity.
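The claim that a dot product equals cosine similarity for unit-norm vectors can be checked without any API call. The following is a minimal sketch on hand-made 3-dimensional vectors; `normalize`, `top_k`, and the toy data are hypothetical names introduced here for illustration and are not the article's helpers.

```python
import numpy as np

def normalize(m: np.ndarray) -> np.ndarray:
    """Scale each row to unit L2 norm."""
    return m / np.linalg.norm(m, axis=1, keepdims=True)

def top_k(index: np.ndarray, q_vec: np.ndarray, k: int = 2) -> list[tuple[int, float]]:
    """Return (row, score) pairs for the k highest dot-product scores."""
    scores = index @ q_vec
    order = np.argsort(scores)[::-1][:k]
    return [(int(i), float(scores[i])) for i in order]

# Toy "document" vectors; row 3 points halfway between rows 0 and 1.
toy_index = normalize(np.array(
    [[1, 0, 0], [0, 1, 0], [0, 0, 1], [1, 1, 0]], dtype=np.float32
))
# Toy "query" vector that leans strongly towards row 0.
query_vec = normalize(np.array([[0.9, 0.1, 0.0]], dtype=np.float32))[0]

hits = top_k(toy_index, query_vec, k=2)

# Full cosine formula, for comparison with the plain dot product.
dot_scores = toy_index @ query_vec
full_cosine = dot_scores / (
    np.linalg.norm(toy_index, axis=1) * np.linalg.norm(query_vec)
)
print(hits)
print(np.allclose(dot_scores, full_cosine, atol=1e-6))
```

Row 0 ranks first and row 3 second, and the two score arrays agree. If an embedding model did not return unit-norm vectors, applying a step like `normalize` to both the index and the query would restore the equivalence.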

def retrieve(query: str, k: int = 3) -> list[dict]:
    """
    Embed the query, compute cosine similarity against the index,
    and return the top-k document dicts with their scores.

    text-embedding-3-small returns unit-norm vectors, so the dot product
    IS cosine similarity -- no extra normalisation needed.
    """
    q_vec = embed_texts([query])[0]         # shape: (1536,)
    scores = index @ q_vec                  # dot product = cosine similarity
    top_idx = np.argsort(scores)[::-1][:k]  # top-k indices, highest first
    return [{"doc": DOCS[i], "score": float(scores[i])} for i in top_idx]

def build_rag_prompt(query: str, chunks: list[dict]) -> str:
    """Build a focused prompt from only the retrieved chunks."""
    context_parts = [
        f"[Source: {c['doc']['title']}]\n{c['doc']['content']}"
        for c in chunks
    ]
    context = "\n\n---\n\n".join(context_parts)
    return (
        "You are a helpful support assistant. "
        "Answer the question below using the provided context. "
        "Be specific and direct.\n\n"
        f"CONTEXT:\n{context}\n\n"
        f"QUESTION: {query}"
    )

def build_stuffed_prompt(query: str) -> str:
    """Build a prompt that dumps the entire corpus into the context."""
    context_parts = [
        f"[Source: {d['title']}]\n{d['content']}"
        for d in DOCS
    ]
    context = "\n\n---\n\n".join(context_parts)
    return (
        "You are a helpful support assistant. "
        "Answer the question below using the provided context. "
        "Be specific and direct.\n\n"
        f"CONTEXT:\n{context}\n\n"
        f"QUESTION: {query}"
    )

def call_llm(prompt: str) -> tuple[str, float, int, int]:
    """Returns (answer, latency_ms, input_tokens, output_tokens)."""
    t0 = time.perf_counter()
    res = client.chat.completions.create(
        model=CHAT_MODEL,
        messages=[{"role": "user", "content": prompt}],
        temperature=0,
    )
    latency_ms = (time.perf_counter() - t0) * 1000
    answer = res.choices[0].message.content.strip()
    return answer, latency_ms, res.usage.prompt_tokens, res.usage.completion_tokens

Comparing the approaches

This block runs a direct, side-by-side comparison between Retrieval-Augmented Generation (RAG) and brute-force context stuffing using the same user query. In the RAG approach, the system first retrieves the top three most relevant documents based on semantic similarity, builds a focused prompt using only those chunks, and then sends that condensed context to the model. It also prints similarity scores, token counts, and latency, allowing us to observe how much context is actually required to answer the question effectively.

In contrast, the context-stuffing approach constructs a prompt that includes all 10 documents, regardless of relevance, and sends the entire corpus to the model. By measuring input tokens, output tokens, and response time for both methods under identical conditions, we isolate the architectural difference between selective retrieval and brute-force loading. This makes the trade-offs in efficiency, cost, and performance concrete rather than theoretical.

QUERY = "How do I request a refund and how long does it take"
DIVIDER = "─" * 65

print(f"\n{'='*65}")
print(f"  QUERY: {QUERY}")
print(f"{'='*65}\n")

# ── Approach 1: RAG ──────────────────────────────────────────────────────────
print("[ APPROACH 1 ] RAG (retrieve, then reason)")
print(DIVIDER)

chunks = retrieve(QUERY, k=3)
rag_prompt = build_rag_prompt(QUERY, chunks)

print(f"Top-{len(chunks)} retrieved chunks:")
for c in chunks:
    preview = c["doc"]["content"][:75].replace("\n", " ")
    print(f"  • {c['doc']['title']:<40} similarity: {c['score']:.4f}")
    print(f'    "{preview}..."')

print(f"\nTotal tokens being sent to LLM: {count_tokens(rag_prompt)}\n")
rag_answer, rag_latency, rag_in, rag_out = call_llm(rag_prompt)
print(f"Answer:\n{textwrap.fill(rag_answer, 65)}")
print(f"\nTokens → input: {rag_in:>6,} | output: {rag_out:>4,} | total: {rag_in+rag_out:>6,}")
print(f"Latency → {rag_latency:,.0f} ms\n")

# ── Approach 2: Context Stuffing ─────────────────────────────────────────────
print("[ APPROACH 2 ] Context Stuffing (dump everything, then reason)")
print(DIVIDER)

stuffed_prompt = build_stuffed_prompt(QUERY)
print(f"Sending all {len(DOCS)} documents ({count_tokens(stuffed_prompt):,} tokens) to the LLM ...\n")
stuff_answer, stuff_latency, stuff_in, stuff_out = call_llm(stuffed_prompt)
print(f"Answer:\n{textwrap.fill(stuff_answer, 65)}")
print(f"\nTokens → input: {stuff_in:>6,} | output: {stuff_out:>4,} | total: {stuff_in+stuff_out:>6,}")
print(f"Latency → {stuff_latency:,.0f} ms\n")

The results show that both approaches produce a correct and nearly identical answer, but the efficiency profile is very different.

With RAG, only the three most relevant documents were retrieved, resulting in a prompt that tiktoken counts at 278 tokens (the API reported 285 prompt tokens; the small difference is consistent with the overhead of wrapping the prompt in a chat message). Total token usage was 347, and the response latency was 783 ms. The retrieved chunks clearly prioritized the Refund Policy, which directly contains the answer, while the remaining two documents were secondary matches based on semantic similarity.

With context stuffing, all 10 documents were injected into the prompt, increasing the input size to 775 tokens and total usage to 834 tokens. Latency nearly doubled to 1,518 ms. Despite processing more than twice the input tokens, the model produced essentially the same answer.

The key takeaway is not that stuffing fails (it works at small scale) but that it is inefficient. RAG achieved the same output with less than half the tokens and roughly half the latency. As corpus size grows from 10 documents to thousands, this gap widens: the stuffed prompt grows with the corpus, while the RAG prompt stays roughly the size of its top-k chunks. What seems harmless at under 800 tokens becomes prohibitively expensive and slow at 500k+ tokens. This is the economic and architectural argument for retrieval: optimize signal before reasoning.
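The scaling argument can be made concrete with a little arithmetic. The sketch below assumes the $2.50 per million input tokens that the article's `COST_PER_1M` constant uses, and a hypothetical 500,000-token stuffed prompt. The `input_cost_usd` helper is an illustrative name, and actual pricing may differ.

```python
# Assumed input price in USD per 1M tokens (same value as the article's COST_PER_1M).
COST_PER_1M_INPUT_USD = 2.5

def input_cost_usd(input_tokens: int, price_per_1m: float = COST_PER_1M_INPUT_USD) -> float:
    """Input-side cost of one call; output tokens are ignored in this sketch."""
    return input_tokens / 1_000_000 * price_per_1m

scenarios = [
    ("RAG prompt (reported)", 285),
    ("Stuffed prompt (reported)", 775),
    ("Hypothetical 500k-token prompt", 500_000),
]
for label, tokens in scenarios:
    print(f"{label:<32} {tokens:>9,} tokens   ${input_cost_usd(tokens):.6f} per call")

# Cost of 10,000 calls with the hypothetical 500k-token prompt.
bulk_cost = 10_000 * input_cost_usd(500_000)
print(f"10,000 calls at 500k tokens each: ${bulk_cost:,.2f}")
```

Under these assumptions a single 500k-token call costs $1.25 in input tokens alone, so 10,000 such calls come to $12,500. The RAG prompt stays in the hundreds of tokens no matter how large the corpus becomes.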

token_ratio = stuff_in / rag_in
latency_ratio = stuff_latency / rag_latency

COST_PER_1M = 2.5  # USD per 1M input tokens
rag_cost = (rag_in / 1_000_000) * COST_PER_1M
stuff_cost = (stuff_in / 1_000_000) * COST_PER_1M

print(f"\n{'='*65}")
print("  HEAD-TO-HEAD SUMMARY")
print(f"{'='*65}")
print(f"  {'Metric':<30} {'RAG':>10} {'Stuffing':>10}")
print(f"  {DIVIDER}")
print(f"  {'Input tokens':<30} {rag_in:>10,} {stuff_in:>10,}")
print(f"  {'Output tokens':<30} {rag_out:>10,} {stuff_out:>10,}")
print(f"  {'Latency (ms)':<30} {rag_latency:>10,.0f} {stuff_latency:>10,.0f}")
print(f"  {'Cost per call (USD)':<30} ${rag_cost:>9.6f} ${stuff_cost:>9.6f}")
print(f"  {DIVIDER}")
print(f"  {'Token multiplier':<30} {'1x':>10} {token_ratio:>9.1f}x")
print(f"  {'Latency multiplier':<30} {'1x':>10} {latency_ratio:>9.1f}x")
# Cost here covers input tokens only, so it scales exactly with the token ratio.
print(f"  {'Cost multiplier':<30} {'1x':>10} {token_ratio:>9.1f}x")
print(f"{'='*65}")

The head-to-head comparison makes the trade-offs explicit. Context stuffing required 2.7× the input tokens, nearly 2× the latency, and 2.7× the cost per call, while producing essentially the same answer as RAG. The output token count remained comparable, meaning the additional expense came entirely from unnecessary context.
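As a quick check on those multipliers, the arithmetic can be reproduced from the figures reported in the text. This sketch plugs in the numbers quoted for a single run and measures nothing new; output tokens are derived as total minus input.

```python
# Figures quoted in the article for one run (not re-measured here).
rag_in, rag_total, rag_latency_ms = 285, 347, 783
stuff_in, stuff_total, stuff_latency_ms = 775, 834, 1518

token_ratio = stuff_in / rag_in
latency_ratio = stuff_latency_ms / rag_latency_ms

# Output tokens are whatever remains after subtracting the input tokens.
rag_out = rag_total - rag_in
stuff_out = stuff_total - stuff_in

print(f"Input-token multiplier: {token_ratio:.1f}x")    # 2.7x
print(f"Latency multiplier:     {latency_ratio:.1f}x")  # 1.9x
print(f"Output tokens:          RAG {rag_out}, stuffing {stuff_out}")  # 62 vs 59
```

The output sizes differ by only three tokens, which supports the reading that the extra cost comes from the input side.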

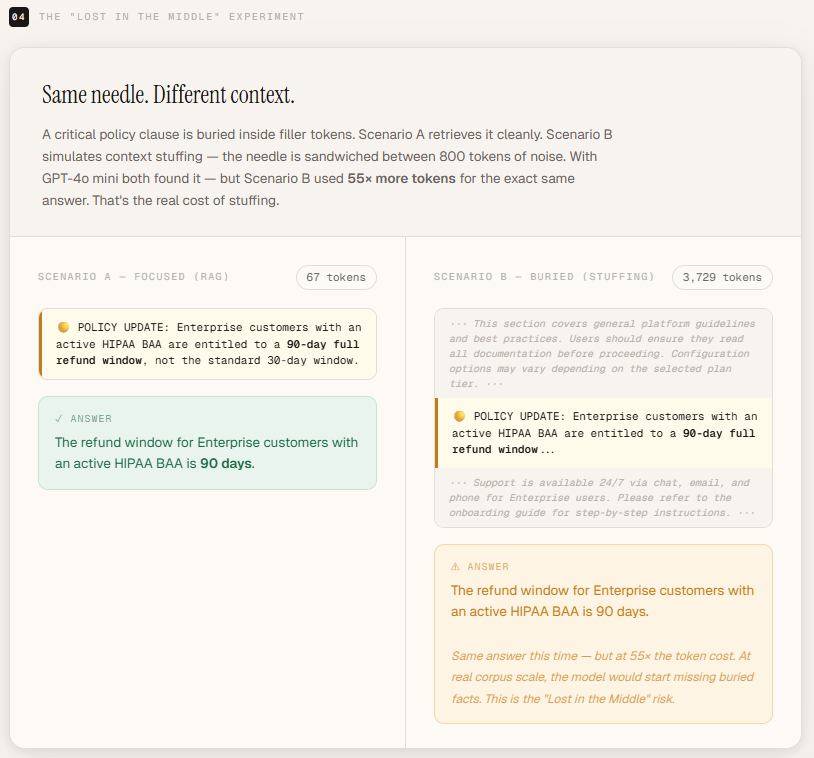

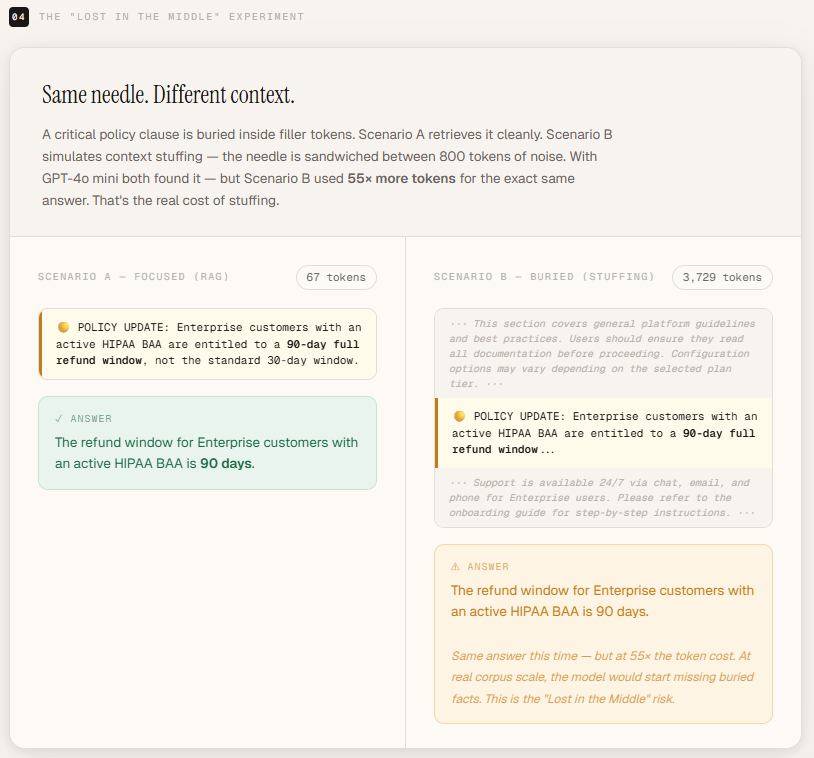

Lost in the Middle Effect

To demonstrate the “Lost in the Middle” effect, we create a controlled setup where a critical policy update (the needle) states that Enterprise customers with an active HIPAA BAA are entitled to a 90-day refund window instead of the standard 30 days. This clause directly answers the query but is intentionally buried in the middle of several thousand tokens of irrelevant filler text designed to simulate a bloated, overstuffed prompt. By asking, “What is the refund window for Enterprise customers with a HIPAA BAA?”, we can test whether the model reliably extracts the buried clause when it is surrounded by noise, illustrating how a large context alone does not guarantee accurate attention or retrieval.
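The experiment places the needle at a single position, the exact middle. A natural extension is to vary that position. The sketch below shows one way to do this; `bury_needle`, its `depth` parameter, and the numbered filler sentences are hypothetical and not part of the article's code.

```python
def bury_needle(filler_sentences: list[str], needle: str, depth: float) -> str:
    """Insert `needle` after a fraction `depth` of the filler (0.0 = start, 1.0 = end)."""
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0.0 and 1.0")
    cut = round(len(filler_sentences) * depth)
    parts = filler_sentences[:cut] + [needle] + filler_sentences[cut:]
    return " ".join(parts)

# Illustrative stand-ins for the article's FILLER and NEEDLE.
filler = [f"Filler sentence number {i}." for i in range(10)]
needle = (
    "POLICY UPDATE: Enterprise customers with an active HIPAA BAA "
    "are entitled to a 90-day full refund window, not the standard 30-day window."
)

at_start = bury_needle(filler, needle, 0.0)
in_middle = bury_needle(filler, needle, 0.5)
at_end = bury_needle(filler, needle, 1.0)

# Number of filler sentences that precede the needle in the middle variant.
before_needle = in_middle.split(needle)[0].count("Filler sentence")
print(before_needle)
```

Sending each variant through the same model call would show whether accuracy depends on where the clause sits, a question the single-position test cannot answer.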

NEEDLE = (
    "POLICY UPDATE: Enterprise customers with an active HIPAA BAA "
    "are entitled to a 90-day full refund window, not the standard 30-day window."
)

# Irrelevant padding to simulate a bloated document
# (five generic sentences repeated 30 times; used on both sides of the needle)
FILLER = (
    "This section covers general platform guidelines and best practices. "
    "Users should ensure they read all documentation before proceeding. "
    "Configuration options may vary depending on the chosen plan tier. "
    "Please refer to the onboarding guide for step-by-step instructions. "
    "Support is available 24/7 via chat, email, and phone for Enterprise users. "
) * 30

NEEDLE_QUERY = "What is the refund window for Enterprise customers with a HIPAA BAA?"

def run_lost_in_middle():
    print(f"\n{'='*65}")
    print("  'LOST IN THE MIDDLE' EXPERIMENT")
    print(f"{'='*65}")
    print(f"Query : {NEEDLE_QUERY}")
    print(f'Needle: "{NEEDLE[:65]}..."\n')

    # Condition A: Focused (simulates an ideal RAG retrieval)
    prompt_a = (
        "You are a helpful support assistant. "
        "Answer the question using the context below.\n\n"
        f"CONTEXT:\n{NEEDLE}\n\n"
        f"QUESTION: {NEEDLE_QUERY}"
    )

    # Condition B: Buried (simulates stuffing -- needle is in the middle of noise)
    buried = f"{FILLER}\n\n{NEEDLE}\n\n{FILLER}"
    prompt_b = (
        "You are a helpful support assistant. "
        "Answer the question using the context below.\n\n"
        f"CONTEXT:\n{buried}\n\n"
        f"QUESTION: {NEEDLE_QUERY}"
    )

    print(f"[ A ] Focused context ({count_tokens(prompt_a):,} input tokens)")
    ans_a, _, _, _ = call_llm(prompt_a)
    print(f"Answer: {ans_a}\n")

    print(f"[ B ] Needle buried in filler ({count_tokens(prompt_b):,} input tokens)")
    ans_b, _, _, _ = call_llm(prompt_b)
    print(f"Answer: {ans_b}\n")

    print("─" * 65)

run_lost_in_middle()

In this experiment, both setups return the correct answer (90 days), but the difference in context size is significant. The focused version required only 67 input tokens, delivering the correct response with minimal context. In contrast, the stuffed version required 3,729 input tokens, over 55× more input, to arrive at the same answer.

At this scale, the model was still able to locate the buried clause. However, the result highlights an important principle: correctness alone is not the metric; efficiency and reliability matter as well. As context size increases further, attention diffusion, latency, and cost compound, and retrieval precision becomes increasingly critical. The experiment shows that large context windows can still succeed, but they do so at dramatically higher computational expense and with greater risk as documents grow longer and more complex.
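Reliability is easier to discuss once it is measured over more than one prompt. The following is a minimal sketch of a hit-rate metric, assuming a simple substring check is an acceptable test of correctness. `needle_hit_rate` and `fake_llm` are hypothetical. The stub is deliberately contrived so the example runs offline, and it says nothing about how a real model behaves.

```python
from typing import Callable

def needle_hit_rate(ask: Callable[[str], str], prompts: list[str], expected: str) -> float:
    """Fraction of prompts whose answer contains `expected` (case-insensitive)."""
    if not prompts:
        raise ValueError("prompts must not be empty")
    hits = sum(expected.lower() in ask(p).lower() for p in prompts)
    return hits / len(prompts)

def fake_llm(prompt: str) -> str:
    """Contrived offline stand-in for a model call; NOT real model behaviour."""
    if len(prompt) < 200:
        return "The refund window is 90 days."
    return "The refund window is 30 days."

# One short prompt and one artificially long prompt (placeholder text only).
prompts = ["Short prompt that mentions the 90-day window.", "x" * 500]
rate = needle_hit_rate(fake_llm, prompts, expected="90 days")
print(f"hit rate: {rate:.0%}")
```

In a real run, `ask` could be a thin wrapper such as `lambda p: call_llm(p)[0]`, and the prompt list could cover different filler lengths or needle positions.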

I am a Civil Engineering graduate (2022) from Jamia Millia Islamia, New Delhi, with a keen interest in Data Science, especially neural networks and their applications in various areas.
