How do you build one open model that can reliably understand text, images, audio and video while still running efficiently? A team of researchers from Harbin Institute of Technology, Shenzhen introduced Uni-MoE-2.0-Omni, a fully open omnimodal large model that pushes Lychee’s Uni-MoE line toward language centric multimodal reasoning. The system is trained from scratch on a Qwen2.5-7B dense backbone and extended into a Mixture of Experts architecture with dynamic capacity routing, a progressive supervised and reinforcement learning recipe, and about 75B tokens of carefully matched multimodal data. It handles text, images, audio and video for understanding and can generate images, text and speech.

Architecture, unified modality encoding around a language core

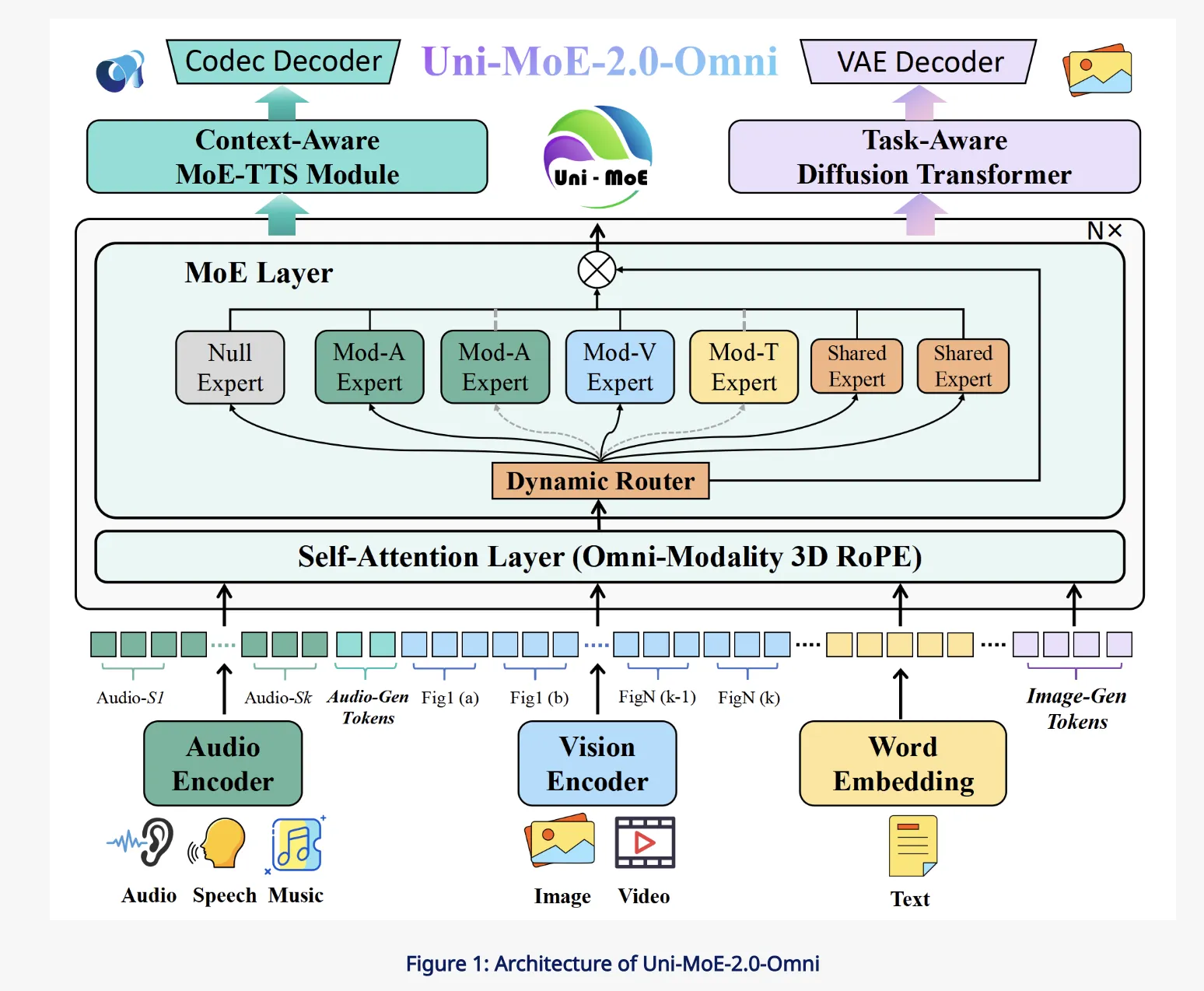

The core of Uni-MoE-2.0-Omni is a Qwen2.5-7B style transformer that serves as a language centric hub. Around this hub, the research team attaches a unified speech encoder that maps diverse audio, including environmental sound, speech and music, into a common representation space. On the vision side, pre-trained visual encoders process images and video frames, then feed token sequences into the same transformer. For generation, a context aware MoE based TTS module and a task aware diffusion transformer handle speech and image synthesis.

All modalities are converted into token sequences that share a unified interface to the language model. This design means the same self attention layers see text, vision and audio tokens, which simplifies cross modal fusion and makes the language model the central controller for both understanding and generation. The architecture is designed to support 10 cross modal input configurations, such as image plus text, video plus speech and tri modal combinations.

Omni Modality 3D RoPE and MoE driven fusion

Cross modal alignment is handled by an Omni Modality 3D RoPE mechanism that encodes temporal and spatial structure directly into the rotary positional embeddings. Instead of only using one dimensional positions for text, the system assigns three coordinates to tokens: time, height and width for visual and audio streams, and time for speech. This gives the transformer an explicit view of when and where each token occurs, which is important for video understanding and audio visual reasoning tasks.
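To make the three-coordinate idea concrete, here is a minimal sketch of how (time, height, width) position triples might be assigned and turned into rotary angles. It is an illustration under stated assumptions, not the released implementation: the function names, the convention that text tokens reuse their 1D index on all three axes, the offsetting of visual positions by `start`, and the even split of rotary channels across axes are all hypothetical choices.

```python
import math

def text_positions(num_tokens, start=0):
    """Text has no spatial extent, so all three axes share the 1D index (assumed convention)."""
    return [(start + i, start + i, start + i) for i in range(num_tokens)]

def video_positions(num_frames, grid_h, grid_w, start=0):
    """One (time, height, width) triple per patch token, frame-major then row-major."""
    return [
        (start + t, start + h, start + w)
        for t in range(num_frames)
        for h in range(grid_h)
        for w in range(grid_w)
    ]

def rope_angles(position, dims_per_axis=(4, 4, 4), base=10000.0):
    """Rotation angles for one token: each axis drives its own slice of rotary frequencies."""
    angles = []
    for coord, dim in zip(position, dims_per_axis):
        half = dim // 2  # each rotary pair consumes two channels
        for k in range(half):
            inv_freq = base ** (-2.0 * k / dim)
            angles.append(coord * inv_freq)
    return angles

# A 3-token text prompt followed by a 2-frame video with a 2x2 patch grid.
text = text_positions(3)
video = video_positions(num_frames=2, grid_h=2, grid_w=2, start=3)
sequence = text + video
```

In this sketch two patches from the same frame share a time coordinate but differ in height or width, so attention can distinguish "same moment, different place" from "same place, later moment".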

The Mixture of Experts layers replace standard MLP blocks with an MoE stack that has three expert types. Empty experts act as null functions that allow computation skipping at inference time. Routed experts are modality specific and store domain knowledge for audio, vision or text. Shared experts are small and always active, providing a communication path for general information across modalities. A routing network chooses which experts to activate based on the input token, giving specialization without paying the full cost of a dense model with all experts active.
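The interplay of shared, routed and null experts can be sketched in a few lines of plain Python. This is a toy illustration, not the model's actual layer: the experts are stand-in scaling functions rather than MLPs, and `moe_layer`, `scale_expert`, the top-k selection rule and the router weights are all assumptions made for the example.

```python
import math

def softmax(scores):
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def scale_expert(factor):
    """Stand-in for an expert MLP: just scales the token vector."""
    return lambda x: [factor * v for v in x]

def moe_layer(x, router_weights, routed_experts, shared_expert, top_k=2):
    """Combine one always-on shared expert with the top-k routed or null experts.

    A routed_experts entry of None is a null expert: it can win a routing
    slot but contributes nothing and costs no compute.
    """
    logits = [sum(w * v for w, v in zip(row, x)) for row in router_weights]
    probs = softmax(logits)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]

    output = shared_expert(x)  # shared path runs for every token
    executed = 0
    for i in chosen:
        expert = routed_experts[i]
        if expert is None:
            continue  # null expert: skip the computation entirely
        executed += 1
        output = [o + probs[i] * y for o, y in zip(output, expert(x))]
    return output, chosen, executed

token = [1.0, 0.0]
router_weights = [[2.0, 0.0], [0.0, 2.0], [1.0, 0.0]]  # one row per expert slot
routed_experts = [scale_expert(2.0), scale_expert(3.0), None]  # slot 2 is a null expert
output, chosen, executed = moe_layer(token, router_weights, routed_experts, scale_expert(0.5))
```

Here the router picks slots 0 and 2, but only one expert actually runs because slot 2 is null. That per-token variation in executed experts is the sense in which such a layer has dynamic capacity.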

Training recipe, from cross modal pretraining to GSPO DPO

The training pipeline is organised into a data matched recipe. First, a language centric cross modal pretraining phase uses paired image text, audio text and video text corpora. This step teaches the model to project each modality into a shared semantic space aligned with language. The base model is trained on around 75B open source multimodal tokens and is equipped with special speech and image generation tokens so that generative behaviour can be learned by conditioning on linguistic cues.

Next, a progressive supervised fine tuning stage activates modality specific experts grouped into audio, vision and text categories. During this stage, the research team introduces special control tokens so that the model can perform tasks like text conditioned speech synthesis and image generation inside the same language interface. After large scale SFT (Supervised Fine-Tuning), a data balanced annealing phase re-weights the mixture of datasets across modalities and tasks and trains with a lower learning rate. This avoids over fitting to a single modality and improves stability of the final omnimodal behaviour.
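The article does not say how the annealing phase re-weights datasets. One common way to balance a mixture is to sample each dataset in proportion to its size raised to a power `alpha` below 1, which is shown here purely as an assumed stand-in. The function name, the `alpha` parameter and the dataset sizes are hypothetical.

```python
def balanced_mixture(dataset_sizes, alpha=0.5):
    """Sampling probabilities proportional to size ** alpha.

    alpha=1 keeps the raw proportions; alpha=0 samples every dataset equally.
    """
    weighted = {name: size ** alpha for name, size in dataset_sizes.items()}
    total = sum(weighted.values())
    return {name: w / total for name, w in weighted.items()}

# Hypothetical example counts, not figures from the paper.
sizes = {"image": 8_000_000, "audio": 1_000_000, "video": 1_000_000}
raw = balanced_mixture(sizes, alpha=1.0)
smoothed = balanced_mixture(sizes, alpha=0.5)
```

With these made-up sizes the image share falls from 80% of samples to a bit under 60% once `alpha` is lowered to 0.5, so the smaller audio and video sets are seen more often.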

To unlock long form reasoning, Uni-MoE-2.0-Omni adds an iterative policy optimisation stage built on GSPO and DPO. GSPO uses the model itself or another LLM as a judge to evaluate responses and construct preference signals, while DPO converts these preferences into a direct policy update objective that is more stable than standard reinforcement learning from human feedback. The research team applies this GSPO DPO loop in multiple rounds to form the Uni-MoE-2.0-Thinking variant, which inherits the omnimodal base and adds stronger step-by-step reasoning.
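For readers unfamiliar with DPO (Direct Preference Optimization), the sketch below computes the standard DPO loss for a single preference pair from sequence log-probabilities. It shows the general published objective, not the team's training code. The function name, argument names and the `beta` default are illustrative assumptions.

```python
import math

def dpo_loss(policy_chosen_logp, policy_rejected_logp,
             ref_chosen_logp, ref_rejected_logp, beta=0.1):
    """Standard DPO loss for one (chosen, rejected) pair: -log(sigmoid(margin))."""
    # How much more the policy likes each response than the frozen reference does.
    chosen_gain = policy_chosen_logp - ref_chosen_logp
    rejected_gain = policy_rejected_logp - ref_rejected_logp
    margin = beta * (chosen_gain - rejected_gain)
    # -log(sigmoid(m)) = log(1 + exp(-m)), split by sign to avoid overflow.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))

# Policy identical to the reference: loss sits at log(2).
neutral = dpo_loss(-2.0, -2.0, -2.0, -2.0)
# Policy has shifted probability toward the preferred response: loss drops.
improved = dpo_loss(-1.0, -3.0, -2.0, -2.0, beta=1.0)
```

The loss depends only on log-probability differences against a reference model, which is why no separate reward model or on-policy sampling loop is needed for this step.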

Generation, MoE TTS and task aware diffusion

For speech generation, Uni-MoE-2.0-Omni uses a context aware MoE TTS module that sits on top of the language model. The LLM emits control tokens that describe timbre, style and language, along with the text content. The MoE TTS consumes this sequence and produces discrete audio tokens, which are then decoded into waveforms by an external codec model, aligning with the unified speech encoder on the input side. This design makes speech generation a first class controlled generation task instead of a separate pipeline.

On the vision side, a task aware diffusion transformer is conditioned on both task tokens and image tokens. Task tokens encode whether the system should perform text to image generation, editing or low level enhancement. Image tokens can capture semantics from the omnimodal backbone, for example from a text plus image dialogue. Lightweight projectors map these tokens into the diffusion transformer conditioning space, enabling instruction guided image generation and editing, while keeping the main omnimodal model frozen during the final visual fine tuning stage.

Benchmarks and open checkpoints

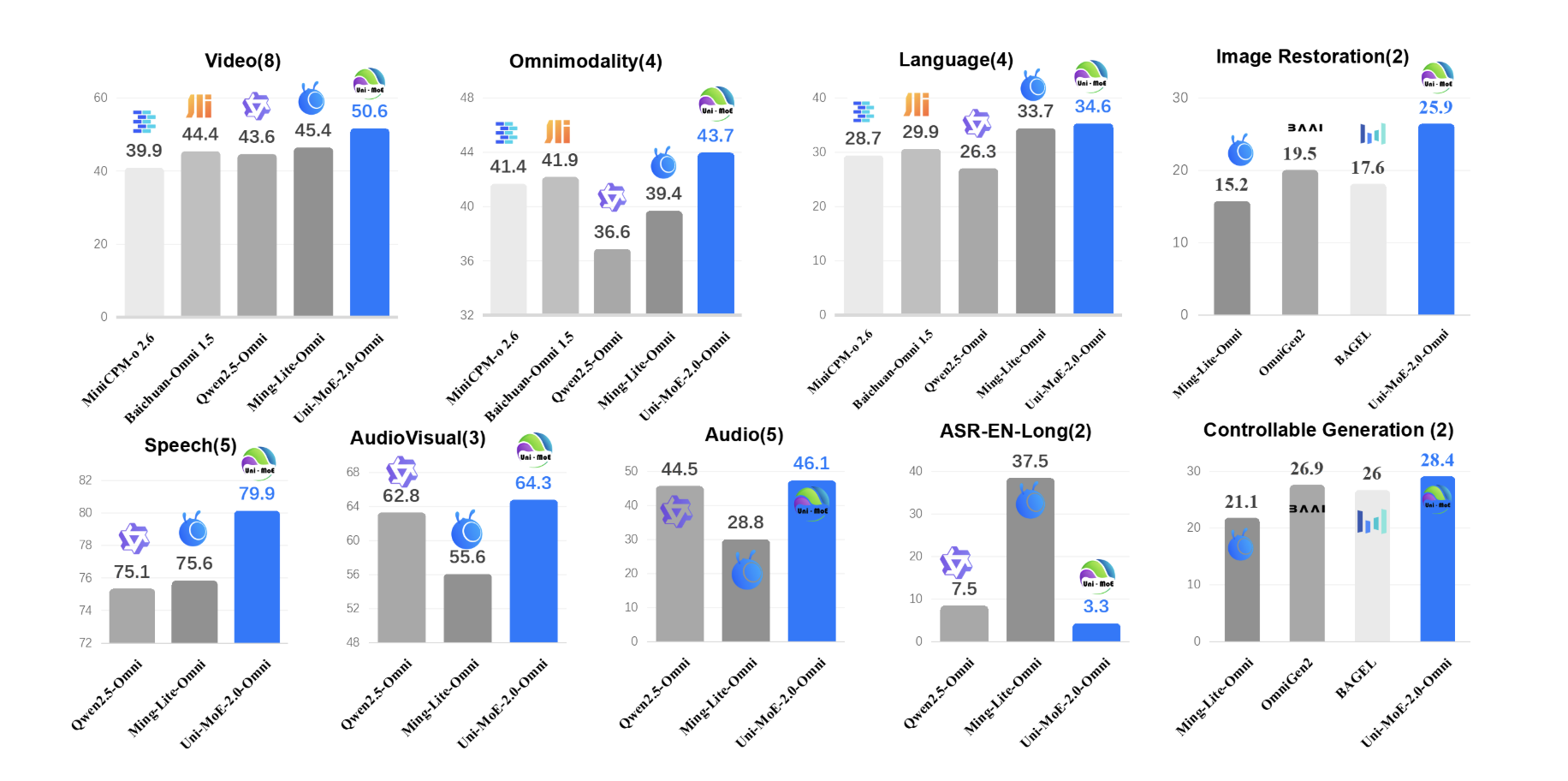

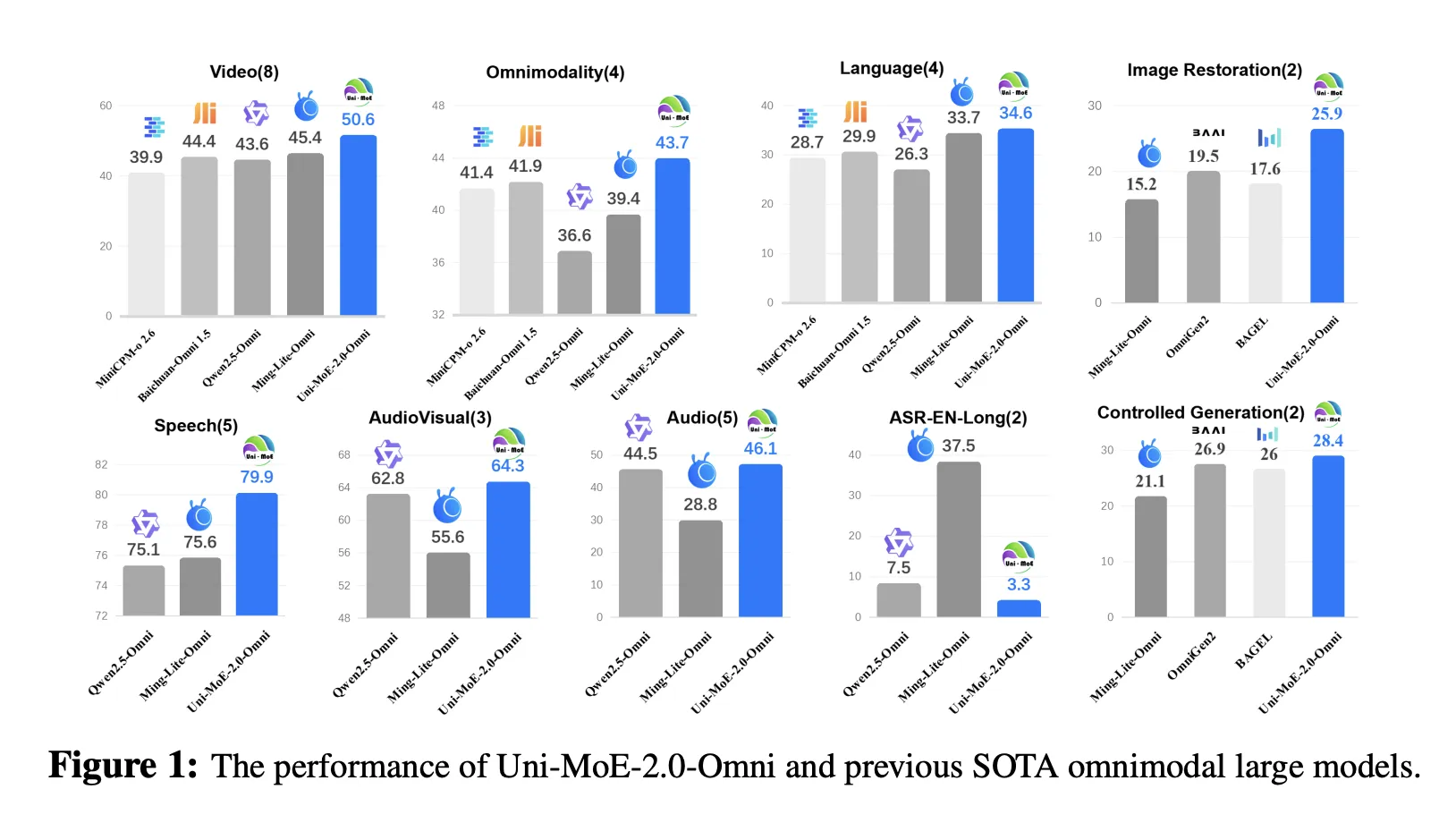

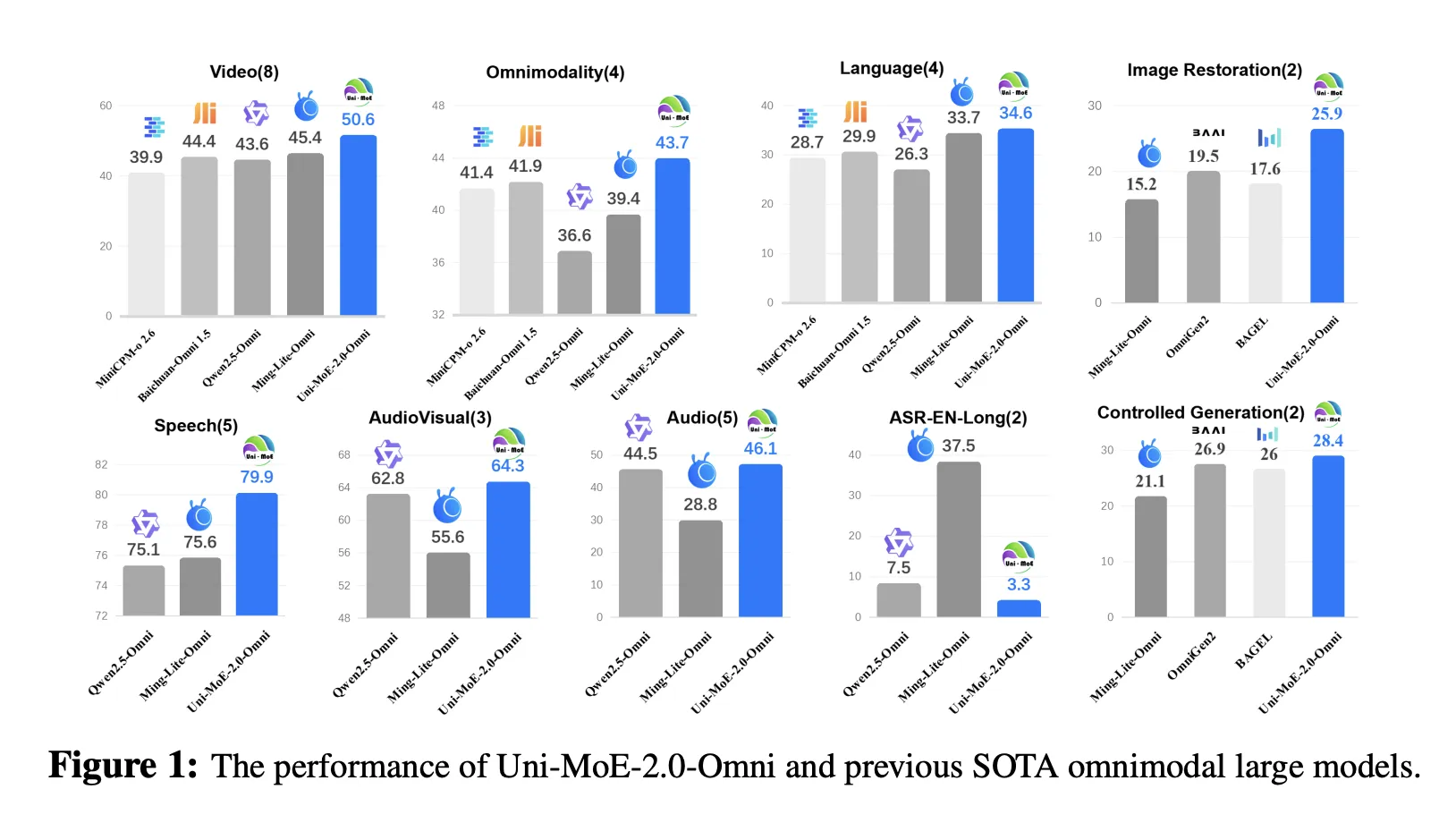

Uni-MoE-2.0-Omni is evaluated on 85 multimodal benchmarks that cover image, text, video, audio and cross or tri modal reasoning. The model surpasses Qwen2.5-Omni, which is trained on about 1.2T tokens, on more than 50 of 76 shared benchmarks. Gains include about +7% average on video understanding across 8 tasks, +7% average on omnimodality understanding across 4 benchmarks including OmniVideoBench and WorldSense, and about +4% on audio visual reasoning.

For long form speech processing, Uni-MoE-2.0-Omni reduces word error rate by up to 4.2% relative on long LibriSpeech splits and brings about 1% WER improvement on TinyStories-en text to speech. Image generation and editing results are competitive with specialised visual models. The research team reports a small but consistent gain of about 0.5% on GEdit Bench compared to Ming Lite Omni, while also outperforming Qwen Image and PixWizard on several low level image processing metrics.
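A relative WER reduction is easy to misread as an absolute one, so a short worked example may help. The baseline and improved WER values below are hypothetical numbers chosen to produce a 4.2% relative reduction. They are not results from the paper.

```python
def relative_reduction(baseline_wer, new_wer):
    """Fraction of the baseline error that was removed."""
    return (baseline_wer - new_wer) / baseline_wer

# Hypothetical WERs in percent: 5.00 for a baseline, 4.79 for the new model.
baseline, improved = 5.00, 4.79
absolute_points = baseline - improved               # about 0.21 percentage points
relative = relative_reduction(baseline, improved)   # about 0.042, i.e. 4.2% relative
```

In this made-up case a 4.2% relative reduction is only about 0.21 WER points in absolute terms, which is why the two kinds of figures should not be compared directly.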

Key Takeaways

- Uni-MoE-2.0-Omni is a fully open omnimodal large model built from scratch on a Qwen2.5-7B dense backbone, upgraded to a Mixture of Experts architecture that supports 10 cross modal input types and joint understanding across text, images, audio and video.

- The model introduces a Dynamic Capacity MoE with shared, routed and null experts plus Omni Modality 3D RoPE, which together balance compute and capability by routing experts per token while keeping spatio temporal alignment across modalities inside the self attention layers.

- Uni-MoE-2.0-Omni uses a staged training pipeline: cross modal pretraining, progressive supervised fine tuning with modality specific experts, data balanced annealing, and GSPO plus DPO based reinforcement learning to obtain the Uni-MoE-2.0-Thinking variant for stronger long form reasoning.

- The system supports omnimodal understanding and generation of images, text and speech via a unified language centric interface, with dedicated Uni-MoE-TTS and Uni-MoE-2.0-Image heads derived from the same base for controllable speech and image synthesis.

- Across 85 benchmarks, Uni-MoE-2.0-Omni surpasses Qwen2.5-Omni on more than 50 of 76 shared tasks, with around +7% gains on video understanding and omnimodality understanding, +4% on audio visual reasoning and up to 4.2% relative WER reduction on long form speech.

Asif Razzaq is the CEO of Marktechpost Media Inc. As a visionary entrepreneur and engineer, Asif is committed to harnessing the potential of Artificial Intelligence for social good. His most recent endeavor is the launch of an Artificial Intelligence Media Platform, Marktechpost, which stands out for its in-depth coverage of machine learning and deep learning news that is both technically sound and easily understandable by a wide audience. The platform boasts of over 2 million monthly views, illustrating its popularity among audiences.
