For years, the technology industry has operated under the shadow of a single, green-tinted giant. NVIDIA, through a combination of visionary leadership and the early realization that GPUs were the secret sauce for parallel processing, effectively “owned” the AI market before most of us even knew there was an AI market to own. But as any long-term observer of this industry knows, dominance often breeds a certain kind of deafness. When a company stops listening to its customers because it believes its product is the only game in town, it creates a massive opening for a disciplined, focused competitor.

That competitor is AMD, and its recent performance in the MLPerf Inference 6.0 benchmarks suggests that the window of NVIDIA’s absolute dominance is closing much faster than the market initially expected.

The Critical Importance of MLPerf

In the world of technology, we are often drowned in “hero benchmarks”: carefully curated, vendor-specific tests designed to make a product look like it is breaking the laws of physics. MLPerf, however, is different. It is the industry standard, providing a level playing field where hardware is tested against real-world AI workloads such as large language models (LLMs), image generation, and recommendation engines.

MLPerf matters because it strips away the “marketing fluff.” For IT decision-makers and cloud providers who are spending billions on infrastructure, MLPerf is the survival guide. It measures not just raw speed but also efficiency and scalability. AMD’s recent results, particularly with the Instinct MI325X accelerators, show that the company is no longer merely participating in the AI race; it is now setting the pace in key metrics such as Llama 3 performance and latency.

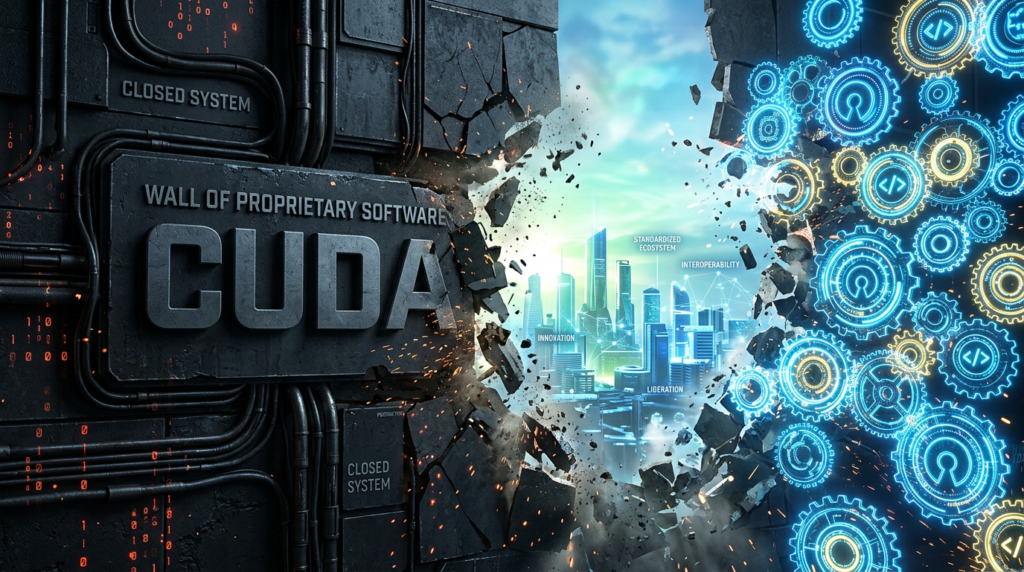

NVIDIA’s Exposure: A Listening Problem

NVIDIA is currently in a position similar to where Intel was in the early 2000s or where IBM was in the late 1980s. When you have 90% market share, you tend to dictate terms rather than negotiate them. I have been hearing a growing chorus of complaints from enterprise customers about NVIDIA’s proprietary “moat.” Between the high cost of entry, the complexity of the CUDA software stack, and a perceived lack of flexibility in meeting specific customer needs, NVIDIA is increasingly seen as a “tax” on AI progress.

Jensen Huang has done an excellent job building a powerhouse, but there is a growing sentiment that NVIDIA is focused on its own roadmap at the expense of what customers are actually asking for: lower TCO (total cost of ownership), open standards, and better availability. By locking customers into a closed ecosystem, NVIDIA has inadvertently pushed the industry toward open alternatives.

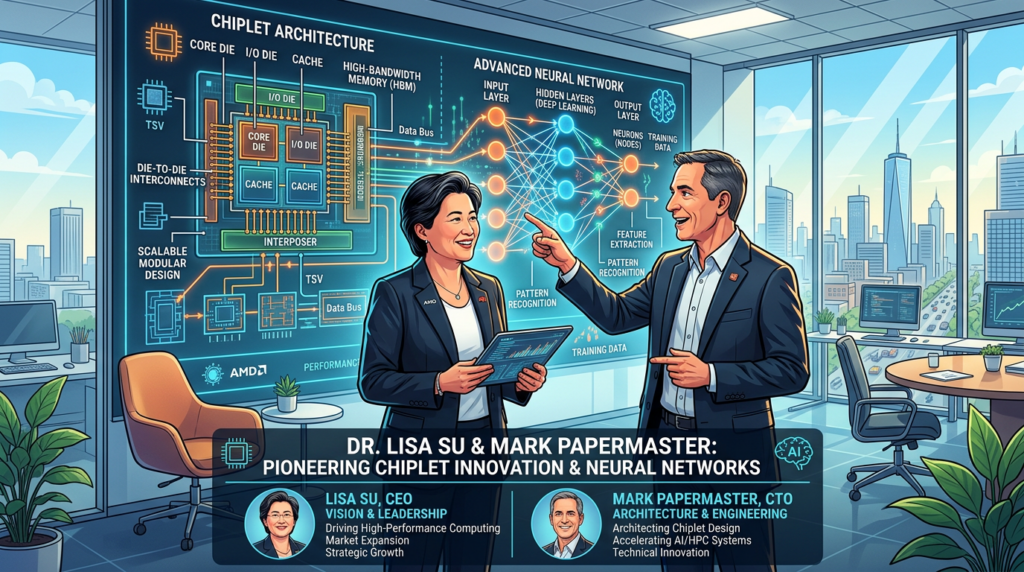

The Renaissance of AMD: Su and Papermaster

To understand why AMD is now the primary threat to NVIDIA, you have to look back at the leadership of Dr. Lisa Su and CTO Mark Papermaster. When Lisa Su took over, AMD was effectively on life support. She made the hard call to pivot away from low-margin markets and double down on high-performance computing.

Mark Papermaster’s architectural leadership cannot be overstated. By focusing on a chiplet architecture and a consistent, multi-generational roadmap, AMD was able to outmaneuver Intel in the data center with EPYC. Now the company is applying that same disciplined execution to AI with the ROCm software platform and the Instinct line.

Unlike NVIDIA, AMD has leaned heavily into “open” ecosystems. By making ROCm more accessible and ensuring it works well with industry-standard frameworks like PyTorch and JAX, AMD is listening to the customers who are tired of being locked into a single vendor’s proprietary silo. AMD is winning because it is acting like a partner, while NVIDIA is acting like a sovereign.

AMD’s AI Performance: Closing the Gap

AMD’s performance in MLPerf 6.0 is not just an incremental improvement; it is a breakthrough. The Instinct MI325X shows remarkable gains in HBM3E memory capacity and bandwidth, which are the primary bottlenecks for modern generative AI. While NVIDIA’s H200 and Blackwell chips are impressive, the AMD MI325X is delivering comparable, and in some cases superior, inference performance on the latest Llama 3 models.

This is significant because the AI market is shifting from training to inference. While training large models takes massive compute power, the long-term revenue in AI lies in running those models (inference). If AMD can offer a more cost-effective, open, and equally powerful inference engine, the economic argument for staying with NVIDIA begins to crumble.
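To make that economic argument concrete, here is a minimal Python sketch of a cost-per-token comparison. It assumes, for illustration only, that a node’s inference economics reduce to four inputs: an amortized hourly hardware and hosting cost, an average power draw, an electricity price, and a sustained token throughput. The `InferenceNode` class and both functions are hypothetical names, and none of the inputs come from MLPerf results.

```python
from dataclasses import dataclass


@dataclass
class InferenceNode:
    """Hypothetical model of one accelerator node serving a single LLM."""
    name: str
    hourly_cost_usd: float    # assumed: amortized hardware + hosting cost per hour
    power_kw: float           # assumed: average power draw under load, in kW
    tokens_per_second: float  # assumed: sustained throughput for the target model


def cost_per_million_tokens(node: InferenceNode, usd_per_kwh: float) -> float:
    """Return the all-in cost (USD) of generating one million tokens on `node`."""
    if node.tokens_per_second <= 0:
        raise ValueError("throughput must be positive")
    # Energy cost for one hour of operation: kW * 1 h * $/kWh.
    energy_cost_per_hour = node.power_kw * usd_per_kwh
    total_cost_per_hour = node.hourly_cost_usd + energy_cost_per_hour
    tokens_per_hour = node.tokens_per_second * 3600
    return total_cost_per_hour / tokens_per_hour * 1_000_000


def cheaper_node(a: InferenceNode, b: InferenceNode, usd_per_kwh: float) -> str:
    """Name of the node with the lower cost per million tokens (ties go to `a`)."""
    cost_a = cost_per_million_tokens(a, usd_per_kwh)
    cost_b = cost_per_million_tokens(b, usd_per_kwh)
    return a.name if cost_a <= cost_b else b.name
```

A buyer would plug in their own quoted prices, measured power, and throughput from a representative benchmark run. The point of framing it this way is that raw speed is only the denominator: a somewhat slower node can still win on cost per token if its hourly cost and power draw are low enough.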

The Changing AI Landscape of 2026

This year has marked a transition from “AI hype” to “AI reality.” In 2024 and 2025, companies were buying every GPU they could find, regardless of price or fit. In 2026, we are seeing the “Great Rationalization.” CFOs are now asking for ROI. They are looking at the power bills for these massive clusters and demanding better efficiency.

Over the rest of the year, we expect to see a surge in edge AI and localized LLMs. The market is moving away from massive, monolithic models toward specialized, efficient ones. This plays directly into AMD’s strengths in flexible, high-memory hardware. As enterprises realize they do not need a massive NVIDIA cluster to run a specialized internal model, AMD’s value proposition becomes undeniable.

The Aggressive Pivot

NVIDIA’s primary defense has always been CUDA. However, the industry is moving toward “software-defined hardware.” Frameworks like OpenAI’s Triton and the growth of the Unified Acceleration (UXL) Foundation are effectively neutralizing the CUDA advantage. Once the software barrier is gone, the competition comes down to hardware performance, power efficiency, and price, which are areas where AMD has historically excelled.

Wrapping Up

The MLPerf 6.0 results are a “shot across the bow” for NVIDIA. They confirm that AMD, under the steady hand of Lisa Su and the technical brilliance of Mark Papermaster, has reached performance parity in critical AI workloads.

NVIDIA remains a formidable opponent, but its lack of focus on customer flexibility and its insistence on a closed ecosystem are creating a vacuum that AMD is more than happy to fill. For the first time in the AI era, there is a credible choice. And as the market shifts toward inference and cost-efficiency, that choice increasingly looks like AMD.

In this industry, you either listen to your customers or you watch them leave. AMD is listening. NVIDIA, it seems, is still too busy listening to its own hype.

Elevate your perspective with NextTech News, where innovation meets insight.

Discover the latest breakthroughs, get exclusive updates, and connect with a global network of future-focused thinkers.

Unlock tomorrow’s trends today: read more, subscribe to our newsletter, and become part of the NextTech community at NextTech-news.com